David

3.2K posts

@dzhng

founder @duetchat • prev: @socialpluscorp @nasajpl

San Francisco, CA · Joined June 2011

678 Following · 12.4K Followers

@dzhng context usage is the killer one. most people burn the window on setup and then wonder why the outputs get worse halfway through

It's very easy to be net unproductive with LLMs

LLMs have a "zone of genius" that's a function of:

- agent friendliness of environment (e.g. clean code)

- task complexity

- current context usage

When it stays in its zone of genius, it's great and can one shot tasks perfectly

However things degrade VERY quickly to a slop fest the moment you get outside of its zone of genius

As an AI coder your job is to ride the fine line of maximizing LLM output while keeping it in its zone of genius at all times.

Once it's outside of the zone, no amount of prompting is going to save you

Productivity only comes when you use your boost when you need it, while avoiding the potholes along the way

If you blindly use LLMs for everything, it's going to net out at zero

I actually partially agree with this - llms are great coders but terrible architects. The problem is most people don't know the difference and either blindly trust the llm or don't give it a chance.

David Cramer@zeeg

im fully convinced that LLMs are not an actual net productivity boost (today)

they remove the barrier to get started, but they create increasingly complex software which does not appear to be maintainable

so far, in my situations, they appear to slow down long term velocity

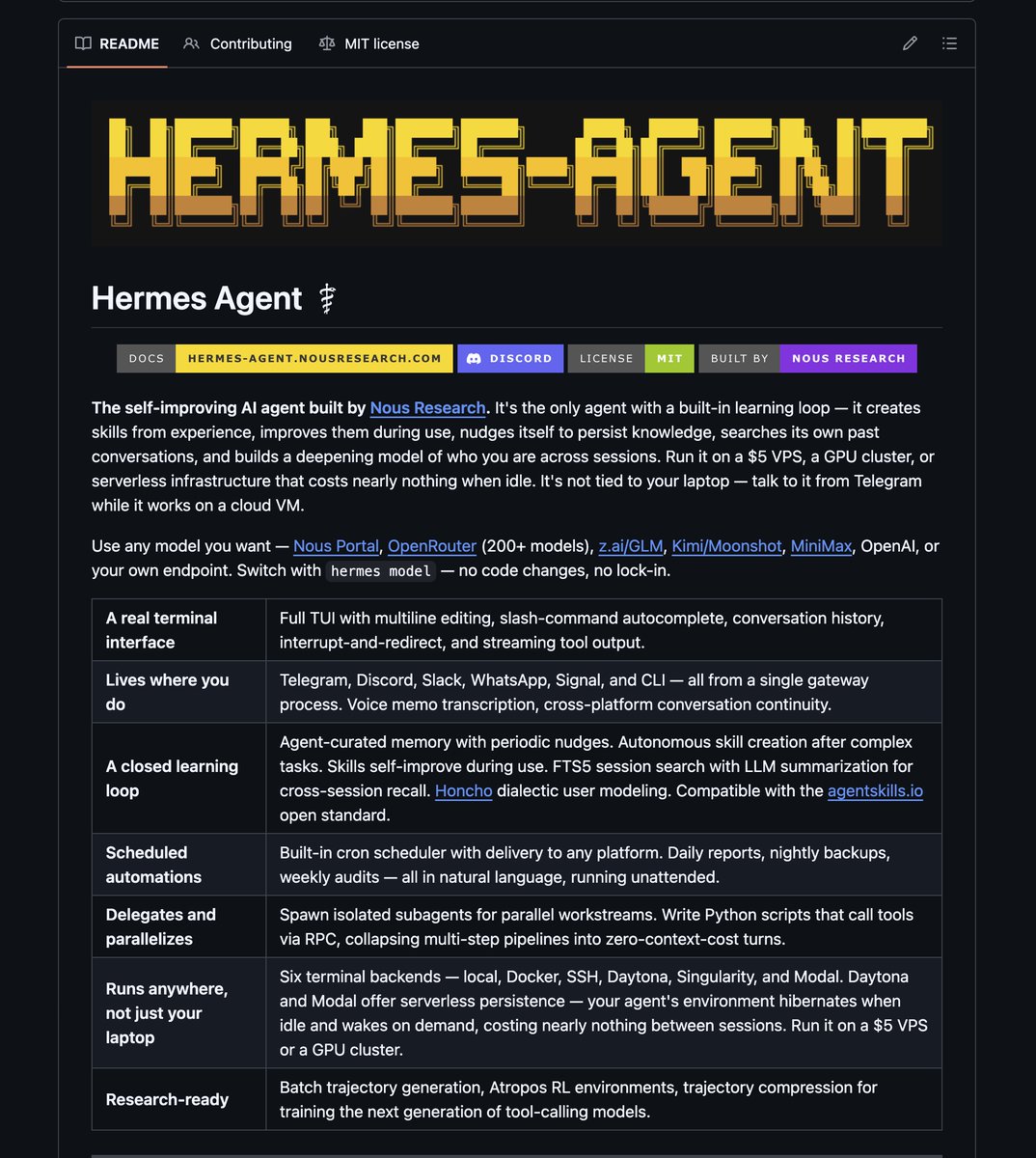

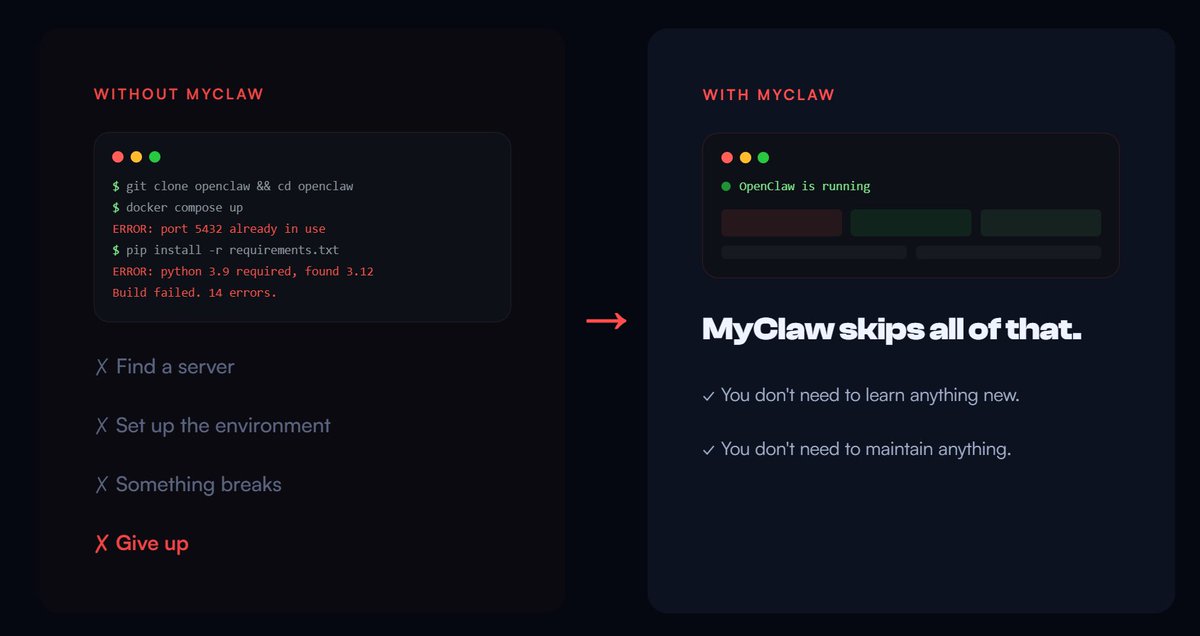

@andrewchen Or another way to put it is cowork / openclaw class of products are the antithesis of vertical saas

the magic of cowork and openclaw and other AI products is that they replace our giant row of infinite browser tabs

And lol - no, don't feel guilty, I have too many tabs too. AI makes it so that every workflow that required 4 browser tabs and a spreadsheet is getting collapsed into one AI-native experience

Just as one quick example-

think about how you used to research a person or a company: LinkedIn tab, X tab, Google tab, notes doc, slack open. now one prompt does it in 10 seconds. the "tab count" of a workflow is basically a proxy for how much AI can compress it

if your product eliminates 6 tabs and a copy-paste loop, users will like it. If you can create a whole series of these workflows then your users will absolutely love it. Thus the biggest opportunities are workflows where people currently alt-tab 20+ times per task. Sales, recruiting, research, compliance, procurement. Boring? yes. Massive? also yes. But this is why these agentic tools are going to crush

AI doesn't need to be superintelligent to be wildly useful. it just needs to be good enough to close the tabs

@the_judge1111 @dzhng probably at the $800M round a few years ago. $9B/$800M = ~11x * 75% (25% dilution on funding rounds) * 80% (20% carry) = 6.6x gets you pretty close.
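
The estimate in that reply can be checked with a few lines of arithmetic. This is only a sketch using the figures quoted above; the variable names are illustrative, and applying the dilution and carry factors as simple multipliers follows the reply's own simplification rather than any actual deal terms.

```python
# Figures come from the reply above; names are illustrative only.
current_valuation = 9_000_000_000  # "$9B"
entry_valuation = 800_000_000      # "$800M round"
kept_after_dilution = 0.75         # "25% dilution on funding rounds"
kept_after_carry = 0.80            # "20% carry"

# Gross multiple before any haircut.
gross_multiple = current_valuation / entry_valuation

# Net multiple after applying both haircuts as plain multipliers.
net_multiple = gross_multiple * kept_after_dilution * kept_after_carry

print(f"gross: {gross_multiple:.2f}x")
print(f"net:   {net_multiple:.2f}x")
```

Unrounded, the gross multiple is 11.25x and the net is about 6.75x. The reply rounds the gross figure down to ~11x first, which is where 6.6x comes from; either way it lands near the 6.3x mentioned in the thread.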

@dzhng Angel investment???

That you’ve 6.3x’d???

With the company priced at 9 stacks?

My brother that was a Series C

Praying you understand the economics of the SPV you’re in

Shout out to @modal for hosting this great panel at NVIDIA GTC. I'm excited to join @crcolgrove and Robin next Monday! We'll be talking about 𝘴𝘦𝘳𝘷𝘦𝘳𝘭𝘦𝘴𝘴 𝘎𝘗𝘜, 𝘢𝘨𝘦𝘯𝘵 𝘰𝘳𝘤𝘩𝘦𝘴𝘵𝘳𝘢𝘵𝘪𝘰𝘯, 𝘢𝘨𝘦𝘯𝘵𝘪𝘤 𝘦𝘷𝘢𝘭, and 𝘧𝘶𝘯 𝘱𝘳𝘰𝘥𝘶𝘤𝘵𝘴 𝘸𝘦 𝘢𝘳𝘦 𝘣𝘶𝘪𝘭𝘥𝘪𝘯𝘨. Everyone talks about models, but who knows what keeps us up at night 😅. Come hang if you're at GTC! luma.com/niwjk62h

Random thought - since code is now a commodity and trending towards being 100% AI generated, does having so many layers of abstraction actually make sense?

Example: we use Expo for @duetchat - it's great most of the time but we have a ton of long-tail bugs that are blocked by Expo. After a certain point maybe it's easier to just delete Expo and go direct to native iOS and Android instead.

The write-once-run-everywhere value prop becomes diluted once you realize that AI can just automatically test every single view on every single platform to ensure consistent behavior.

@andrewchen The goal is to always have enough modularization & test coverage where we just accept (prob 70-80% there, mobile expo app is biggest blocker for new)

We've already sectioned off parts of our codebase that humans won't be allowed to touch in the near future

One question I've been asking founders is: do you try to review all the code that the LLMs write or do you just accept it?

I think it's about 50-50 right now but the momentum is towards just accepting the AI-generated code and I think that number will eventually go to 100%

This is one of the most telling indications of how AI-native a team is. It's hard to get super high throughput if you are reviewing every line

Poll: what do you do?

Never gonna shut up about how I'm the 803rd or 804th investor in replit

David@dzhng

6.3x on my one and only angel investment, should I be doing more 😂

@nearlydaniel Or you can also use duet.so - we host it as well and we also include a gateway to a bunch of common services like image / video / audio gen, enrichment, web scraping, integrations, etc. Basically something my mom can use. No need to deal with API keys at all
