Bella.base

565 posts

@e4maeth

Space Host || NFTs || DeFi and crypto || Content Writer || RITUAList || Build on Base

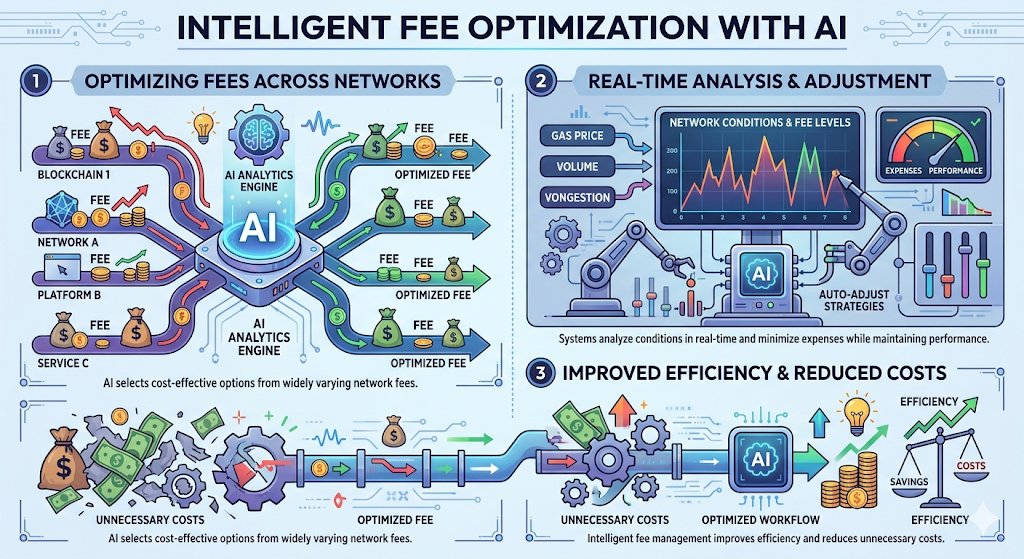

Rethinking Data, Persistence, and Usability in Decentralised Ecosystems: @Permaweb_DAO, @0G_labs and @LightLinkChain

0G Labs: The team is investigating infrastructure‑level techniques that tighten data compression and adopt a modular architecture. By shrinking the size of the payloads that travel across the network, the protocol can keep pace with rising transaction volume while keeping bandwidth and storage demands low. These advances are likely to shape how future decentralized platforms handle data size and transmission efficiency.

Permacast: With a more efficient underlying stack, Permacast can continue to build a truly decentralised audio‑hosting model. Creators keep full control of their podcasts, and the content stays reachable on the distributed network without relying on a central service, thanks to the leaner data handling introduced by projects like 0G Labs.

LightLink: The immediate hurdle for mainstream adoption is user experience. Requiring users to manage gas fees and sign every transaction creates a steep onboarding barrier. LightLink’s approach lets dApp developers absorb gas costs on behalf of their users, turning blockchain interactions into something that feels as ordinary as any Web 2 experience. By removing the constant “pay‑to‑execute” friction, the platform restores the familiar app flow that most people expect.

Capital doesn't flow toward good infrastructure by default. It flows toward legible infrastructure. The projects that attract serious developer attention and sustained liquidity aren't always the ones with the strongest architecture. They're the ones whose value proposition is easy to understand and even easier to verify. The 0G Labs, Dango, and permacastapp stack has a legibility problem that's worth addressing directly. Each layer is technically sophisticated. Each one solves a real constraint. But the combination is harder to communicate than a single-protocol narrative, which means the market is slower to price it correctly. That gap between actual value and perceived value is where early positioning happens. 0G Labs provides verifiable data availability for AI workloads. Legible claim: on-chain inference needs clean data at the source, and 0G is built specifically for that. Verifiable by examining the DA architecture and throughput benchmarks under AI-specific load conditions. Dango provides capital efficiency for stack operations. Legible claim: applications that can't sustain capital efficiency don't survive at scale, and Dango's concentrated liquidity mechanics solve that inside the stack. Verifiable by examining position mechanics and liquidity utilization under production conditions. PermawebDAO provides permanent output storage. Legible claim: stack outputs need a home that doesn't expire, and Permacast provides economic permanence guarantees. Verifiable by examining the archival model and incentive structure. Three legible claims. Each independently verifiable. Each stronger in the presence of the other two. The market will catch up to this stack. The architecture makes the outcome legible once you know where to look.

This is not about infrastructure improving. It is about what a system no longer has to give up to exist. 0G Labs and Permacast sit at opposite ends, but they quietly remove the same pattern: compromise.

0G Labs removes compromise at the start. Imagine a builder creating an AI-powered on-chain intelligence engine that processes continuous streams of data. On traditional infrastructure, the system begins by trading something away: speed for cost, scale for efficiency. With 0G, data availability and compute are modular, so the system is built without those trade-offs. It starts at full capability.

Permacast removes compromise at the end. Now that engine produces insights, reports, or audio briefings. On Web2 platforms, that content is always conditional: it can be removed, restricted, or buried over time. With Permacast, once it’s published, it persists. Think of a research archive that doesn’t fade into timelines. It compounds into something permanent.

Two points. No compromise. Nothing reduced at the beginning. Nothing erased at the end. Subtle, but this is how Web3 moves from systems that adapt to limits to systems that define their own conditions.

This is not about trading becoming faster. It is about it becoming exact. @dango is building what DeFi trading should have been from the start: on-chain orderbooks, MEV resistance, and a CEX-like experience without centralized risk. Imagine placing a trade where execution reflects your intent, undistorted by slippage or hidden extraction. Where the system remains consistent, even under pressure. And then incentives align with usage. Lootboxes and points are live. Every trade contributes to rewards, turning activity into progression. Execution becomes precise. Incentives become embedded. Subtle, but this is how trading shifts from fragmented interaction to structured reliability.

This is not three separate ideas. It is one system forming. Intelligence begins without constraint on 0G. Execution happens with precision through Dango. Information persists through Permacast. Subtle, but this is how Web3 starts to think, act, and remember as one cohesive layer.

One mistake people keep making in Web3 is assuming that every campaign is just another opportunity to extract value. Complete tasks, earn points, hope for an airdrop, move on. That mindset works sometimes, but it also blinds you to what certain ecosystems are actually trying to build. Take the growing activity around Permacast and PermawebDAO. If you only look at it through the lens of rewards, you will miss the bigger picture. Because what is being tested here is not just participation, it is behavior over time. Who contributes consistently. Who understands the idea of permanent media. Who engages in a way that adds signal instead of noise. And when these campaigns appear on Galxe, they are not just distributing incentives, they are building a dataset of early users who might shape the ecosystem later. That is the part most people ignore. Early users in systems like this are not just numbers. They often become the first layer of community, the first validators of quality, the first voices that define culture. So instead of asking whether a campaign is worth a few points today, it might be more useful to ask what kind of system you are choosing to align with. Because alignment compounds in ways that short-term farming never will. And if permanent media actually becomes a core layer in Web3, then the people who understood it early will not just benefit. They will have helped define what it becomes. @permacastapp

Big AMA is going live this Friday, April 17th, at 14:00 CET! 📍@ursas6 and @igor_sinkovec are back from Dallas with major updates tied to the Bargo Capital RWD partnership. Hear what happened and ask anything! 💰 Best question wins 100 $USDC 🎙️ x.com/i/spaces/1DxLd…

GN CT

The night has a way of slowing everything down… The noise fades. The timelines quiet. The rush to be seen disappears. And in that stillness, a deeper question surfaces: What actually remains?

Because if you really think about it… We are constantly creating. Ideas. Thoughts. Stories. Moments. We leave pieces of ourselves across the internet every single day. But most of it? Temporary. It lives for a moment. Gets attention. Then fades into the endless scroll. Buried under new content. Lost in timelines. Dependent on platforms we don’t control. And over time… it disappears like it was never there.

That’s where my thoughts settle tonight: @permacastapp

Let’s talk about Permacast. Not just as a platform… but as a different way of thinking about the internet itself. Because the internet today is built around speed. You post → it spreads → it fades. Everything is optimized for the moment. But moments don’t last. Your ideas exist… but only briefly. Your voice is heard… but only while it’s visible. Your content lives… but only within systems that can change at any time. And when they do, everything can vanish.

So the real question becomes: What does it mean to truly publish something… if it can disappear?

This is where @permacastapp shifts the narrative. Decentralized publishing. Not just about sharing content… but about anchoring it beyond time and platforms. It introduces a different kind of internet. One where: Ideas are not platform-dependent. Voices are not controlled by algorithms. Content is not easily erased. Where what you create today… can still exist tomorrow. Next year. Even beyond the systems we rely on today.

And when something becomes permanent… everything changes. It stops being disposable. It becomes intentional. It stops being noise. It becomes record. Because in a world flooded with content, the real challenge isn’t creating more. It’s preserving what matters.

The ideas that shape the future aren’t always the loudest. They’re the ones that remain accessible over time. The ones people can revisit. The ones that outlive trends. The ones that continue to exist when everything else fades.

That’s the direction @permacastapp is pointing toward. An internet where: Content has permanence. Publishing has weight. And ideas don’t disappear.

So as the night deepens and everything grows quiet… hold onto this: The future of the internet won’t be defined by what trends for a moment… It will be defined by what remains over time.

GN to @permacastapp And GN to those who understand… Not everything is meant to be seen immediately. Some things are meant to last forever.

As AI grows, privacy and security become critical. @0G_labs addresses this with a decentralized platform that distributes data, ensures verifiable AI processes, and supports scalable, efficient applications, making AI more secure, transparent, and user-controlled.

The 0G Labs Apollo Transition and the Venture Scaling Phase

The focus of the dAIOS is expanding beyond core protocol development toward the formalization of its founder ecosystem. With the April 17 application deadline for the 0G Labs Apollo Accelerator, the network is shifting from an open-source sandbox to a structured venture pipeline designed to scale production-ready AI startups.

Operational Framework & Resource Allocation

The 10-Week Venture Pipeline ➔ 0G Labs Apollo is moving from recruitment to the operational phase. The structured curriculum is designed to bridge the gap between initial concept and mainnet deployment, providing builders with direct access to the 0G Labs protocol engineers.

Capital and Mentorship Alignment ➔ The program integrates the Blockchain Builders Fund and Stanford-led mentorship. This provides a direct path for DeAI founders to access up to $2 million in direct funding, utilizing the momentum of 0G’s recent infrastructure upgrades to secure early-stage liquidity.

Enterprise Infrastructure Gateway ➔ Selected teams receive $200,000 in Google Cloud credits and production-grade wallet solutions via Privy. This ensures that projects birthed within the accelerator have the enterprise-grade compute and security required to scale on the Aristotle Mainnet.

Product-Market Fit (PMF) Engineering ➔ Unlike generic accelerators, Apollo focuses on "Agentic PMF." This involves optimizing the synchronization between an agent's reasoning capabilities and its on-chain economic autonomy, ensuring every project is built for the Web 4.0 paradigm.

The AIverse Showcase Pipeline ➔ The acceleration cycle feeds directly into the global ecosystem, with founders being scouted for the "House of AI" in Hong Kong. This ensures that projects maintain high visibility and long-term technical alignment with the broader dAIOS roadmap.

The closing of the 0G Labs Apollo application window marks the transition to Venture Velocity for the decentralized AI sector. By formalizing the path from concept to capital, the network has established a sustainable engine for project birth and growth. The current focus remains on selecting the high-conviction teams that will lead the next generation of autonomous, private applications.

_____

The End of Passive Consumption: Why Every Interaction is a Protocol Contribution

In the legacy web, your engagement is extracted and sold. On the PermacastApp stack, your engagement is Verifiable Intelligence. I’m currently finalizing my contributions for the latest quest, and the two core tasks are the most critical entry points for anyone serious about the Permaweb. Here is the technical alpha on why these two tasks matter for your on-chain footprint:

The Newsflash Interaction
Visiting permacast.app/newsflash isn't a simple page view. It’s a "Proof of Context" event recorded on the Arweave ledger. By interacting with the newsfeed, you are helping the AO computer index the most relevant "Signals" in real-time. You are effectively acting as a human oracle for the network’s permanent intelligence layer.

The Main App Engagement
The task on permacast.app is your gateway to On-Chain Curation. When you engage here, you are utilizing the Atomic Asset standard to endorse permanent media. This builds a "Curator Reputation": a cryptographic record of your taste and accuracy that exists independently of any single platform. In 2026, your history as a high-signal filter is your most valuable asset.

The Multiplier Effect
These tasks aren't just for points; they are for Permanence. Every interaction adds to your verifiable history as an early architect of the decentralized media stack. The protocols that win this cycle will be the ones that reward those who surfaced the best technical alpha first.

The Bottom Line: Stop scrolling for algorithms and start curating for legacy.
➡️ Join the quest here: g.xyz/permacast-quest

This is the best wallpaper of the month for me