Eric Bravick

@ebravick

Engineer of Thinking Machines. Mad Scientist. Decentralization Advocate @ManifestNetwork, CEO of https://t.co/Watm2NP9oQ

60 Minutes visited RenderCon speaker @refikanadol's LA studio, DATALAND - a fully immersive AI experience where scents are generated in real time and visitors’ vital signs shape the environment itself. He’s expanding what art can be in the age of AI.

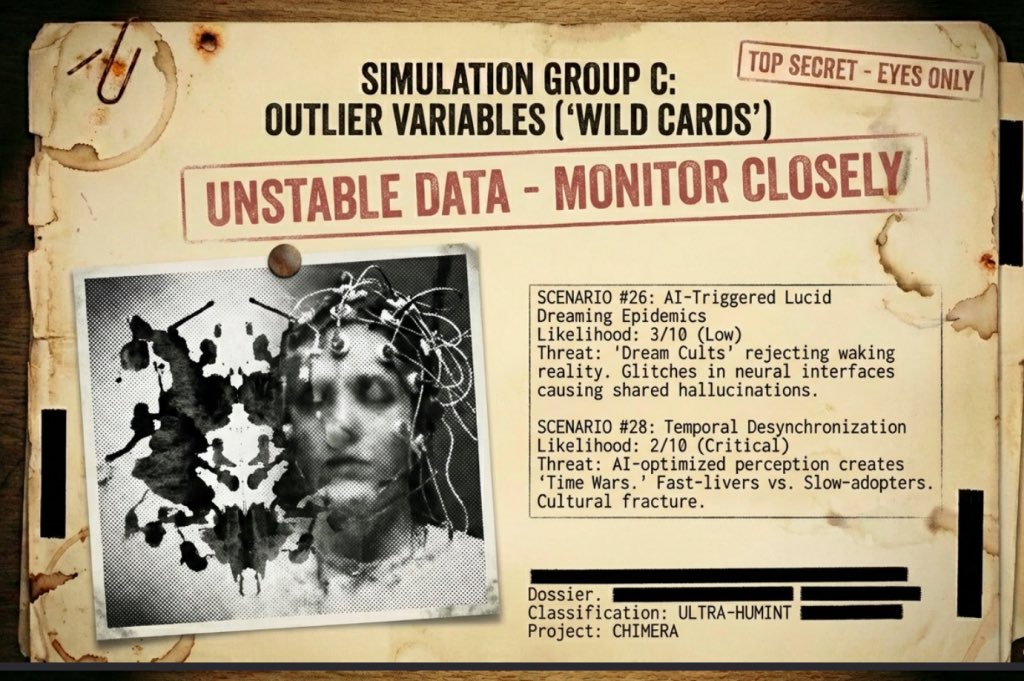

I built an AI model on hundreds of thousands of reports by governments and think tanks to help predict outcomes over the next 5000 Day Interregnum. In this article we face the chaos and turmoil head-on and visit this Dragon in its lair. There be monsters... readmultiplex.com/2026/02/22/you…

there is a game called "data center" on steam which lets you build and manage your own data center. this is lowkey genius, the best way to educate people on a new trait. hyperscalers should learn a thing or two from "edutainment".

I'm joining @OpenAI to bring agents to everyone. @OpenClaw is becoming a foundation: open, independent, and just getting started.🦞 steipete.me/posts/2026/ope…

AITX Community, Hack AI (@reidmccrabb @brycemiles0), @AppliedAISoc, and Organized AI are throwing a hackathon next week focused on @openclaw! Tracks:

Skillsmaxxing: Build skills that make OpenClaw agents smarter. Think security guardrails, metaprompting, optimization techniques, anything that levels up what an agent can do.

Best Deployment Tool: Make it dead simple to set up and deploy an OpenClaw bot. The easier the better. If your grandma could deploy it, you're on the right track.

Automate Your Life: Build an agent that actually replaces something you do manually. Work stuff, personal stuff, business stuff: if it saves real time, it counts.

Plus some fun side-quests ;) If you're in Austin next week and don't have a Valentine's date, register below 👇

Did you know? You don’t need to code to create $SHAFT bounties, nor do you have to put up any money. All you need is an idea, and the foundation and community will fund it. You just need to care about a problem and create a bounty for it. Create a bounty for free: bounties.shaft.finance