EIDON AI

573 posts

@eidon_ai

Decentralized AI | Frontier Robotics | Next-gen Embodied AI with real-world data

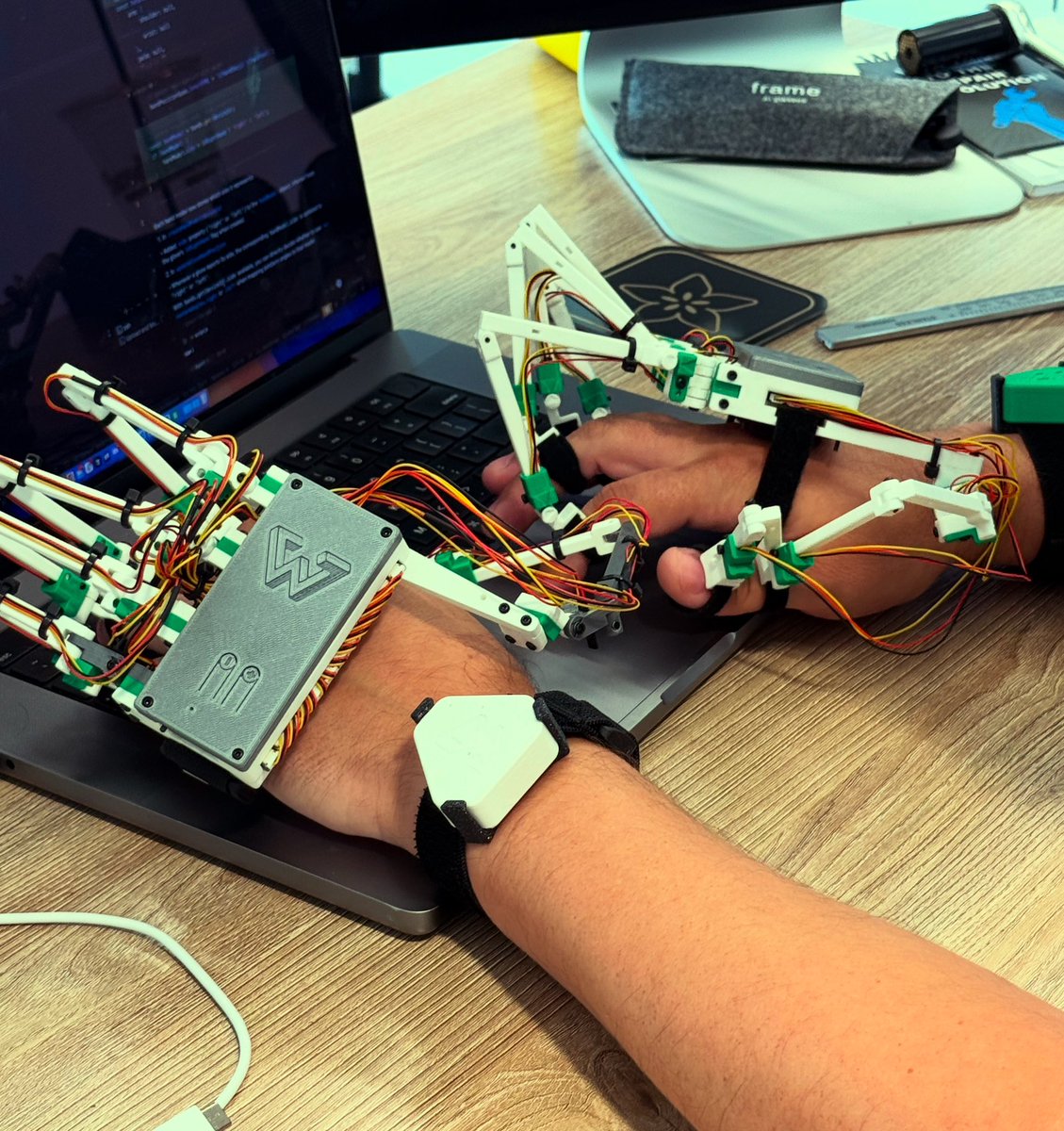

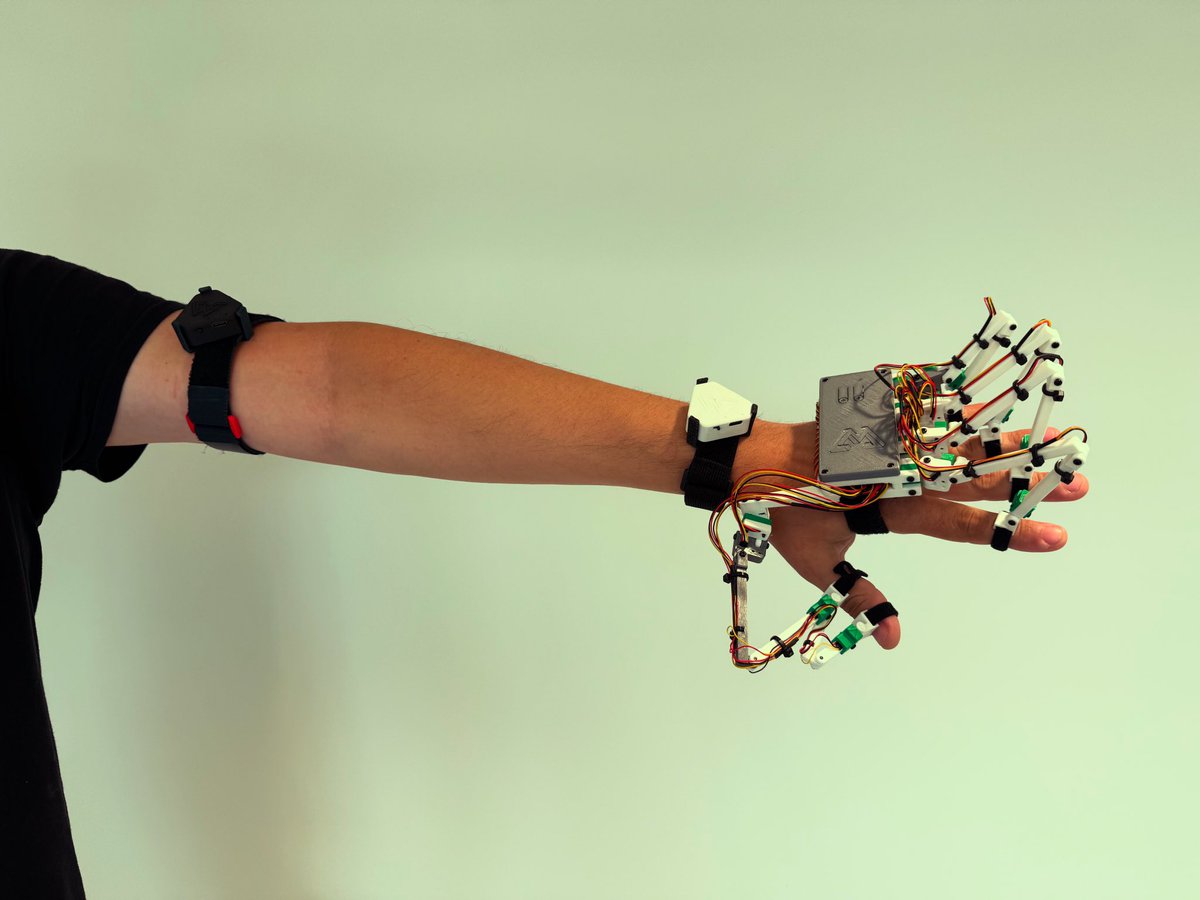

Decentralised Robotics 🧵 I/ Building datasets for embodied AI is tough: humanoid robots need real-world human motion task data, but collecting it at scale has been limited to research-lab projects or closed-source big labs. At Eidon, we started with our wearable IMU trackers. Here's what we've built and achieved so far.

today, we’re open sourcing the largest egocentric dataset in history.

- 10,000 hours
- 2,153 factory workers
- 1,080,000,000 frames

the era of data scaling in robotics is here. (thread)
