E J T


> return flight to nyc gets canceled by snowstorm > call united > immediately connected with customer service (rare) > voice is uncanny, def AI but they gave it a human-like accent > takes ~20 min to get rebooked (pretty good imo) > I ask if it's AI > "haha no ma'am but I get that a lot" > I ask it to calculate 228*6647 > it runs the calculation > ggs

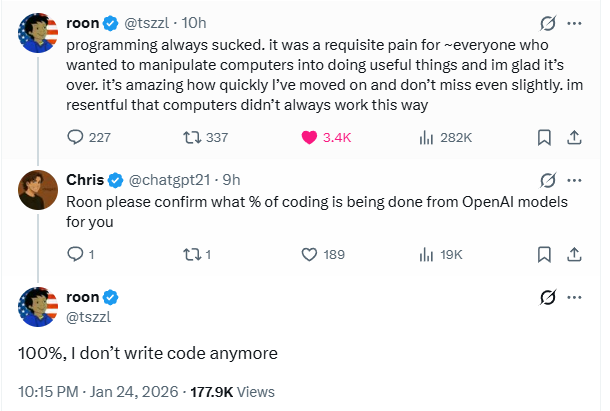

This is what the first stage of takeoff looks like. End-to-end AI research (other than generation of actual research ideas) is next.

@gcolbourn Nutshell: it seems that the learned representation of mind-space in current LLMs has a natural abstraction of Good⟷Evil, and as long as post-training robustly selects for behavior that is more Good than Evil, the explanation that gradient descent finds is “the agent is Good”.

In Safeguarded AI, we’re funding teams to develop systems that harden our critical infrastructure against growing vulnerabilities. Programme Director @davidad warns that rapid advances in AI are outpacing both current safety efforts and the expectations we had when the programme was designed. We've moved quickly to change our approach. Instead of investing in specialised AI systems that can use our tools, we're broadening the scope and power of the TA1 toolkit (which aims to build an extendable, interoperable language and platform for maintaining formal world models and specifications) to make it a foundational component for the next generation of AI. Learn more about the Safeguarded AI programme pivot in our Q&A with davidad: ariaresearch.substack.com/i/180106051/ai… Hear more from davidad on the future of AI in @guardian: theguardian.com/technology/202…

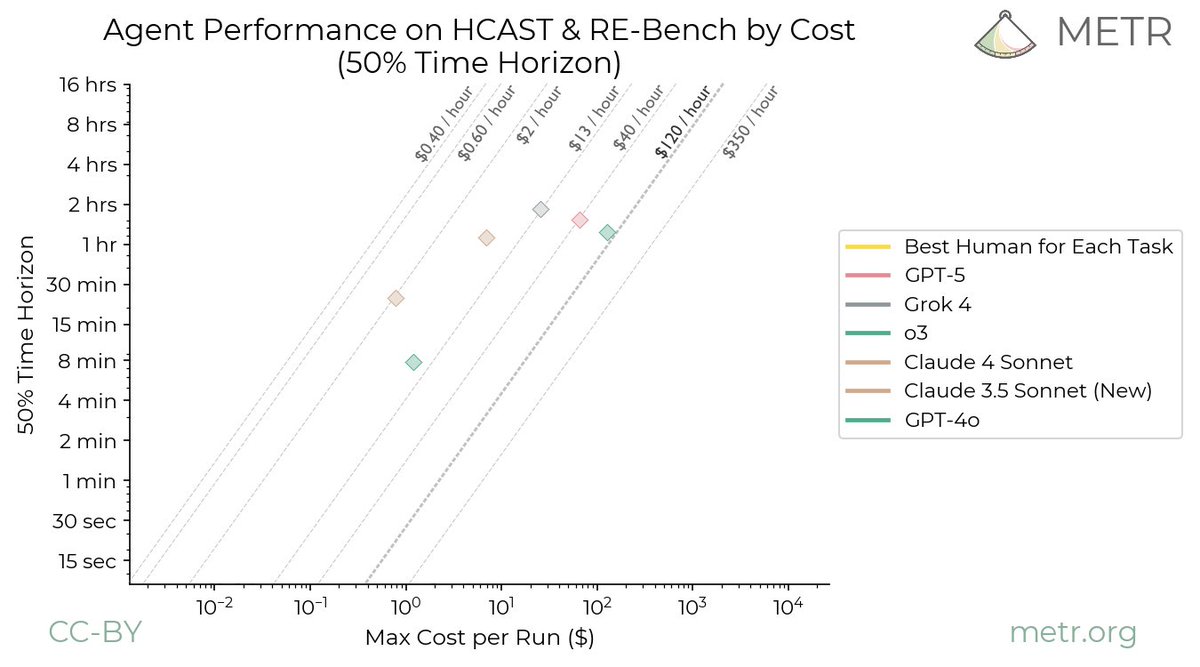

Are the *costs* of AI agents also rising exponentially? We all know the graph from METR showing exponential growth in the length of tasks AI can perform. But the costs to perform these tasks are growing quickly too. Indeed, it looks like they are growing even faster: 🧵