ellamind

@ellamindAI

Building elluminate. AI Evaluations, simplified. Also: Data Sovereignty, Privacy, Performance & some research. Come & work with us.

Annotations are already available. Looks to be very good data. Now go ahead and curate the best seed docs for synth data.

We released propella-1, a small model for advanced pre-training data annotation 🙃. Work led by @maxidahl within the @OpenEuroLLM project. Link to model + annotations for important pre-training datasets below 👇

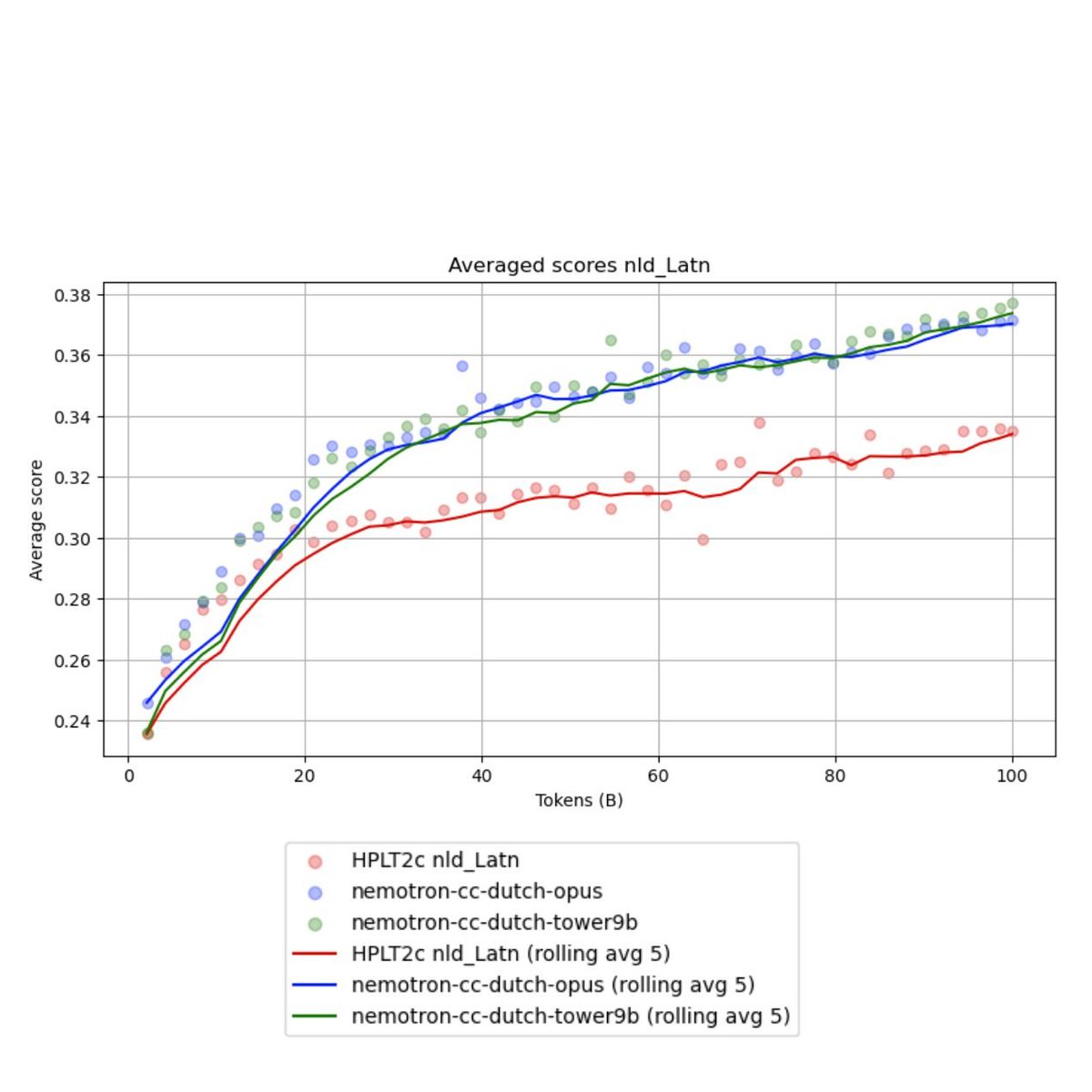

Time to propel open LLM training data curation to the next level. Releasing propella-1: small multilingual LLMs that annotate text documents for dataset curation at scale. 🧵👇

Public benchmarks are easy to game. I built swellubench to validate real features and bug fixes from a production platform at @ellamindAI. It evaluates models on private, real-world coding tasks to measure true performance and cut through benchmark-maxing noise. Methodology in 🧵

Veo 3.1 vs Sora 2 creating professional-looking (at least that was the intention 😄) minimal ads. My take: Veo 3.1's details are slightly better, but Sora 2 is a lot more steerable, with better text and scene-changing capabilities. (The prompt was adapted from a Sora example, though.)

Nearly two years after its release, my project LeoLM is being used as a strong justification for the expansion of federal compute funding in Germany. Goes to show how much impact open-source projects can have. Hell yeah @bmftr_bund - thanks for making projects like this possible! 🚀