Eric Chan

@ericryanchan

Chief Scientist of Rhoda AI. Prev. PhD Student, Stanford University

Here’s something we’ve never seen done before. Real-world tasks are long and ambiguous. Solving them requires visual memory and state tracking. Most robot policies only see the last few frames. Ours doesn't. We put our DVA, FutureVision, to the test on the perfect testbed: the shell game 🐚. The DVA nails it.

To bring generalist intelligent robots to the real world, we have to overcome the data scarcity problem. At Rhoda, we are solving it by reformulating robot policies as video generation. Today, we introduce the Direct Video-Action Model (DVA).

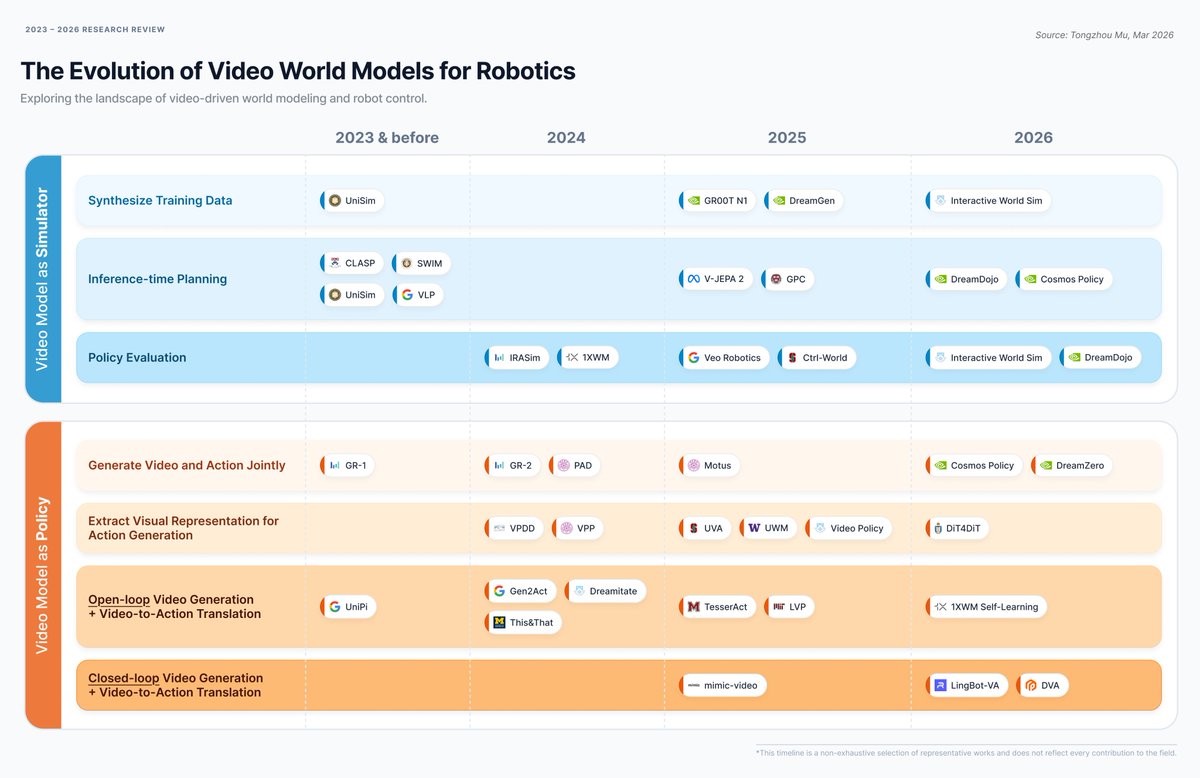

These are very impressive results! The Rhoda team has decisively gotten "video models for robotics" to work. They train a generalist real-time, causal video model that they then quickly fine-tune using task-specific data to generate video plans (1/n)

Very excited to share our exploration of a new robotics foundation model at Rhoda AI. We train a causal video model from scratch, unlocking new capabilities for robust, long-horizon closed-loop robot control. Learn more: rhoda.ai/research/direc…

Because we support long-context visual memory, our robots can learn on the fly. Show the robot a single human demonstration, and it understands both the intent and the motion. It can even extrapolate to novel objects and environments it's never seen before. 🧺✍️

Most robots have "amnesia": they only see a few frames at a time. 🧠 In contrast, our model natively supports hundreds of frames of visual context, enabling it to:
→ Keep track of the world state
→ Handle complex, multi-step tasks end-to-end

@startupjag @rhodaai Incredibly excited to introduce a new type of foundation model for robotics. At its core, robotics is a data problem, but that doesn't mean collecting data directly is the only solution.

After operating in stealth for the last 18 months @rhodaai, we’re excited today to finally show the world what we’ve been working on. We believe we’re on a path to physical AGI with the launch of our brand new foundation model, the Direct Video-Action (DVA) model.