Exponential

FWIW, for the actual "feeling the AGI" productivity boost, #4 (agentic systems) felt like the biggest leap, and it wasn't close. I've been a heavy user since 2022, but the paradigm shift for work happened with agents. This is why I think we'll start seeing AI show up in productivity data soon: the real inflection for work isn't 2022 or 2024, it's the summer of 2025.

I love discussing AI agent orchestration in system design. It's not about picking the right LLM or chaining API calls. It's about whether you understand that an agent is only as reliable as the system coordinating it. Most people think orchestration means "call one agent, then another." They fail to understand that agents fail silently, hallucinate confidently, and loop indefinitely, and none of that looks like an exception... 🧵
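To make the "none of that looks like an exception" point concrete, here is a minimal sketch of a coordinator that turns those quiet failure modes into explicit statuses. Everything in it is an assumption for illustration: `run_guarded`, `RunResult`, and the `agent_step` / `validate` callables are hypothetical names, not part of any real framework, and repeated-output detection is only a crude stand-in for real loop detection.

```python
from dataclasses import dataclass
from typing import Callable, Optional, Set, Tuple


@dataclass
class RunResult:
    status: str            # "ok", "invalid_output", "loop_detected", "budget_exhausted"
    output: Optional[str]  # last output seen, or None if the budget ran out
    steps_used: int


def run_guarded(
    agent_step: Callable[[str], Tuple[str, bool]],  # hypothetical: returns (output, done)
    validate: Callable[[str], bool],                # hypothetical: checks a final answer
    task: str,
    max_steps: int = 5,
) -> RunResult:
    """Run an agent under a step budget, surfacing failures that raise no exception."""
    seen: Set[str] = set()
    state = task
    for step in range(1, max_steps + 1):
        output, done = agent_step(state)
        if done:
            # A "finished" agent can still return empty or wrong output.
            status = "ok" if validate(output) else "invalid_output"
            return RunResult(status, output, step)
        if output in seen:
            # Same intermediate output twice: treat it as a loop.
            return RunResult("loop_detected", output, step)
        seen.add(output)
        state = output  # feed the intermediate output back in
    return RunResult("budget_exhausted", None, max_steps)


if __name__ == "__main__":
    stuck = run_guarded(lambda s: ("thinking", False), str.isdigit, "add 40 and 2")
    print(stuck.status, stuck.steps_used)
```

The design choice is that the coordinator, not the agent, decides what counts as failure: the caller gets a status to branch on instead of hoping a bad run throws.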

Here’s how to think about PlanSpec: goals are what; plans are how; gates are when; capabilities are with what; edges are which; executions are now. It’s a declarative graph of the topology of reasoning itself.

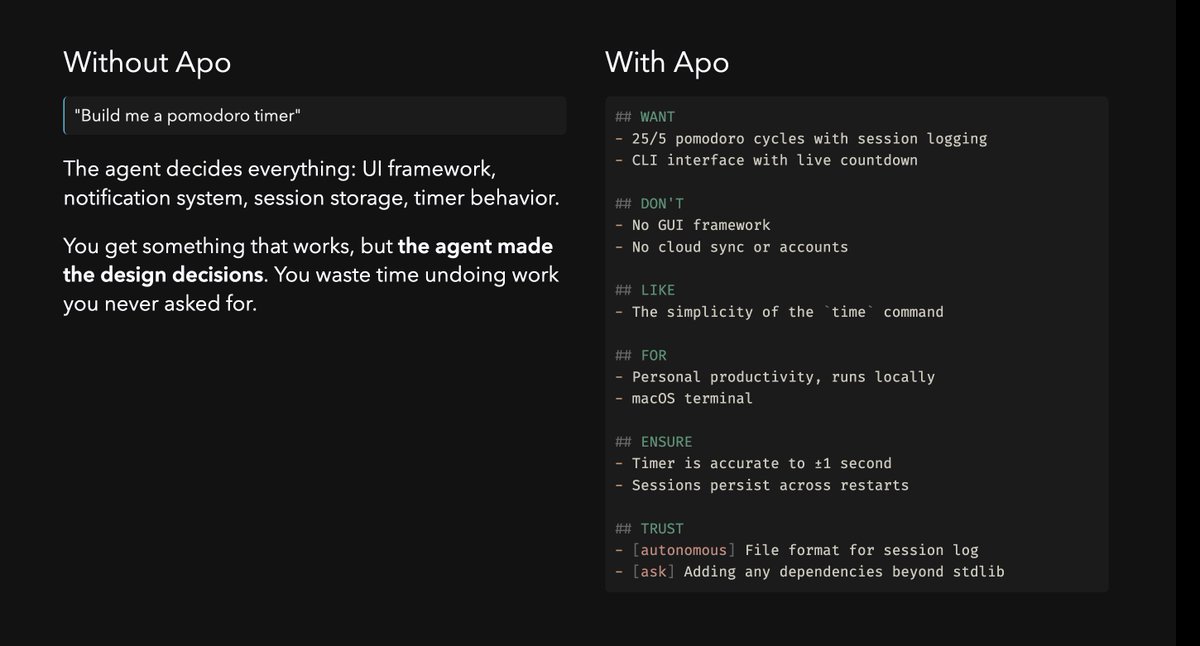

Prompts are so late 2025. We’re giving models intents now.

New research on agent memory.

Agent memory is evaluated on chatbot-style dialogues. But real agents don't chat. They interact with databases, code executors, and web interfaces, generating machine-readable trajectories, not conversational text. The key to better memory is to preserve causal dependencies. Existing memory benchmarks don't actually measure what matters for agentic applications.

This new research introduces AMA-Bench, the first benchmark built for evaluating long-horizon memory in real agentic tasks. It spans six domains including web, text-to-SQL, software engineering, gaming, and embodied AI, with both real-world trajectories and synthetic ones that scale to arbitrary lengths.

The findings are interesting. Many existing agent memory systems that outperform baselines on dialogue benchmarks actually underperform simple long-context LLMs on agentic tasks. Even GPT 5.2 only achieves 72.26% accuracy. To address this, they propose AMA-Agent with a causality graph and tool-augmented retrieval, achieving 57.22% average accuracy and surpassing the strongest baselines by 11.16%.

Why does it matter? Agent memory needs to preserve causal dependencies and objective information, not just similarity-based retrieval. This benchmark exposes where current memory systems actually break.

Paper: arxiv.org/abs/2602.22769

Learn to build effective AI agents in our academy: academy.dair.ai
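As a rough illustration of what "preserve causal dependencies" could mean in practice, here is a toy sketch of a trajectory memory that stores explicit cause links and retrieves by following them rather than by text similarity. This is not AMA-Agent's implementation; the `Step` and `CausalMemory` names, the `caused_by` field, and the example trajectory are all assumptions made up for this sketch.

```python
from dataclasses import dataclass, field
from typing import Dict, List


@dataclass
class Step:
    step_id: int
    action: str
    observation: str
    caused_by: List[int] = field(default_factory=list)  # ids of steps this one depends on


class CausalMemory:
    """Toy memory: retrieval walks cause links instead of ranking by similarity."""

    def __init__(self) -> None:
        self.steps: Dict[int, Step] = {}

    def add(self, step: Step) -> None:
        self.steps[step.step_id] = step

    def causal_chain(self, step_id: int) -> List[Step]:
        """Return the step and all of its transitive causes, ordered by step id."""
        visited = set()
        stack = [step_id]
        while stack:
            current = stack.pop()
            if current in visited or current not in self.steps:
                continue
            visited.add(current)
            stack.extend(self.steps[current].caused_by)
        return [self.steps[i] for i in sorted(visited)]


# Hypothetical text-to-SQL trajectory, invented for illustration.
memory = CausalMemory()
memory.add(Step(1, "inspect schema", "tables: users, orders"))
memory.add(Step(2, "run query on orders", "error: no column 'total'", caused_by=[1]))
memory.add(Step(3, "list files", "README.md"))  # unrelated to the query
memory.add(Step(4, "rewrite query with 'amount'", "3 rows returned", caused_by=[2]))

chain_ids = [s.step_id for s in memory.causal_chain(4)]
print(chain_ids)
```

Asking why step 4 happened pulls in steps 1 and 2 and skips the unrelated step 3, even though nothing in the text of step 1 looks similar to the rewritten query. That is the gap the post is pointing at with similarity-only retrieval.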