Aditya

1.5K posts

@fate1ess

Destined for greatness

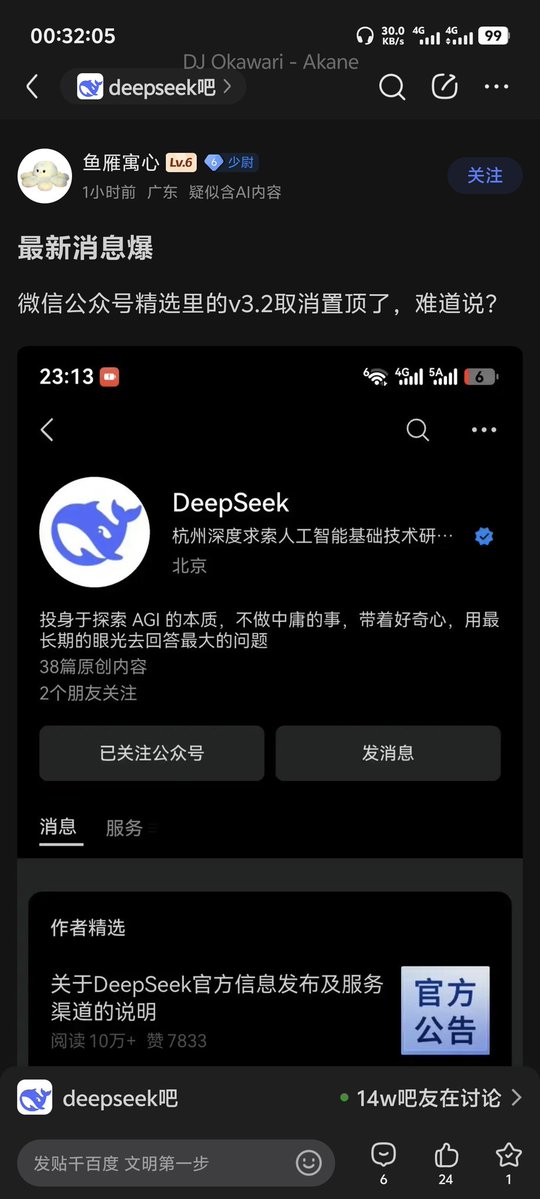

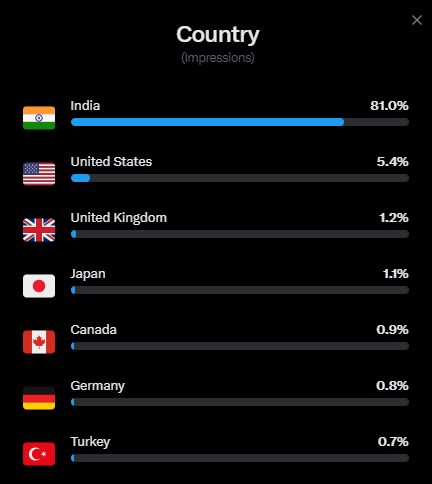

The DeepSeek model served on the web/app may have been updated again. The model now seems to identify itself consistently as V3. The zero-shot coding outputs I’m getting also seem different in style from the ones I got a few days ago. It needs more testing to be completely sure.

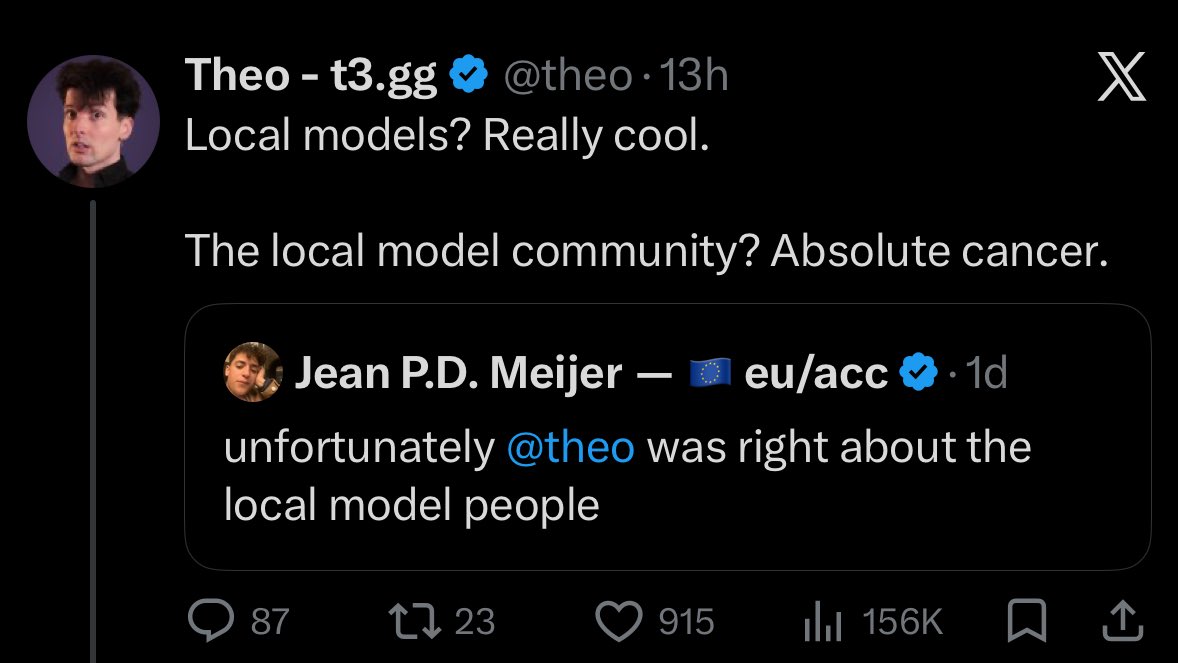

a corporate salesman on an openai paycheck tells you local models aren't there yet. an influencer selling you an API wrapper calls the local AI community on X "cancer." meanwhile we're out here modding communities, helping strangers debug their configs at midnight, fighting spam, pushing open source, and doing it all for free. these people don't want you running models on your own hardware. they want you as a customer. every local install is revenue they lose. every migration from their bloat is a subscription cancelled. don't let corporate noise and engagement bait merchants convert you into their recurring revenue. buy a GPU. compile from source. own your thinking. the community they call cancer is the same community that will help you get started for free while they charge you per token.

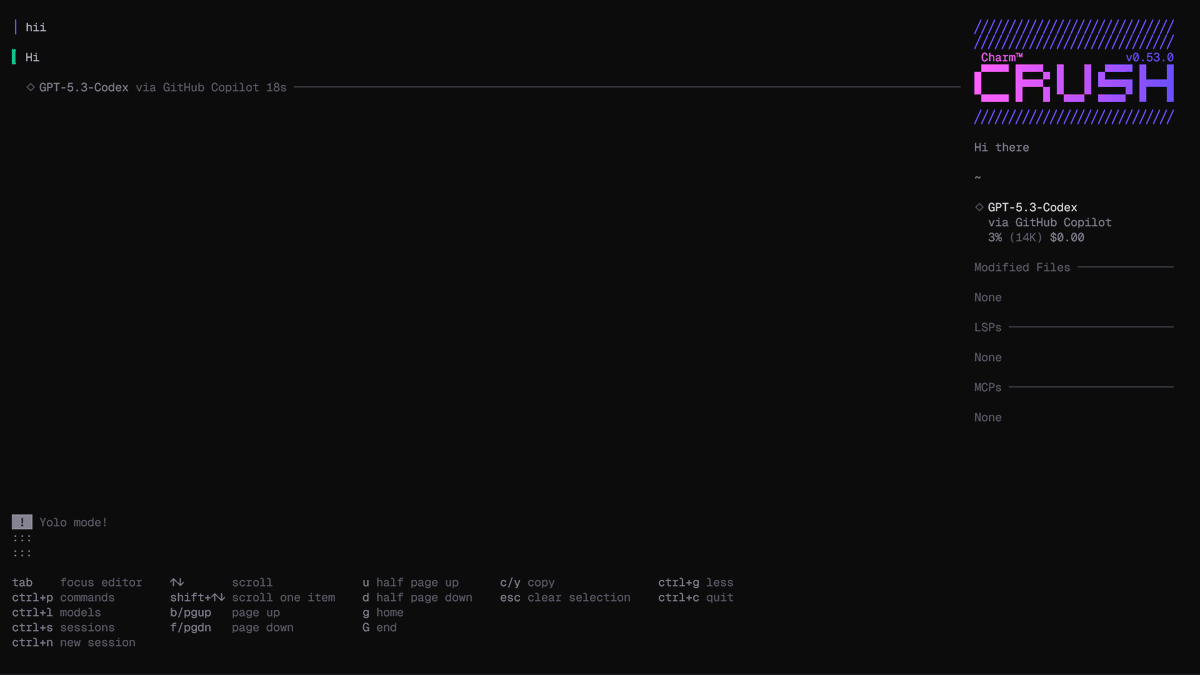

I reimplemented the "claude" CLI with codex and gpt-5.4-high. It cost $1100 in tokens, and it is 73% faster and uses 80% less resident memory during sustained interactive use. It is very easy to reverse claude from the npm distribution, then reimplement it 1:1. It is indistinguishable from the Anthropic version, down to every header and the analytics it sends back. github.com/krzyzanowskim/…

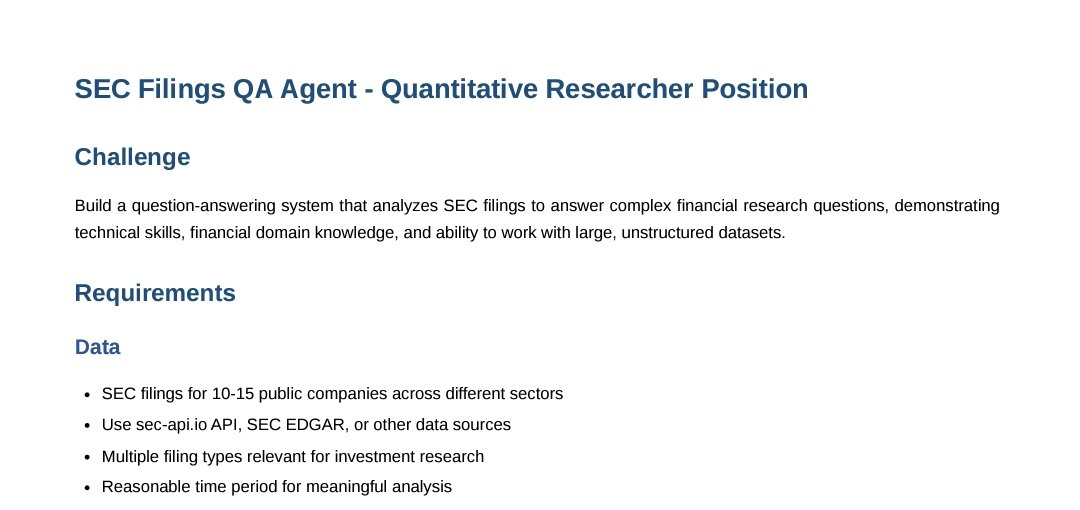

If you're technical and understand AI (you don't have to be a researcher), you could prob make $10M/year implementing AI at hedge funds across the country.