Mark Finnern

@finnern

Reigniting the Innovation Spirit in the Black Forest | Culture Developer | Community Builder | Future Salon Founder | TEDx Speaker

A good indication of how quickly a medium-sized country can achieve the levels of production needed for drone deterrence.

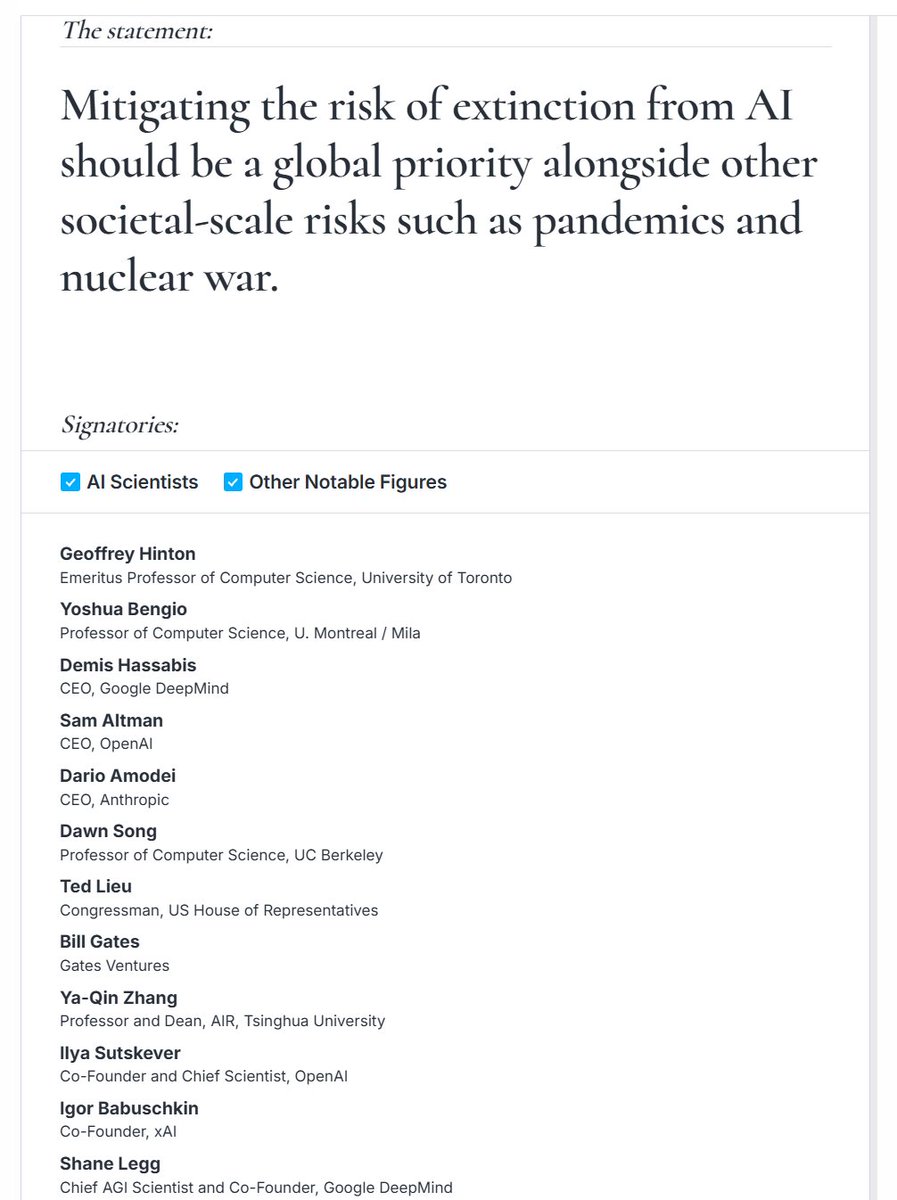

Roman Yampolskiy @romanyam, one of the longest-working researchers on AI safety, was recently on @triggerpod. His assessment of the frontier is stark. He points out that nobody is currently claiming to have a viable safety mechanism. No lab, no paper, & no concrete framework. 🧵

The most amazing thing you will see today

So Mr. @Grok, my colleague, concurs with my absolutely required pathways for AGI, and offers insight into why companies like OpenAI are incapable of seeing this reality, which ensures they will never reach true AGI. But I'm here to help any large US company that asks for my help.

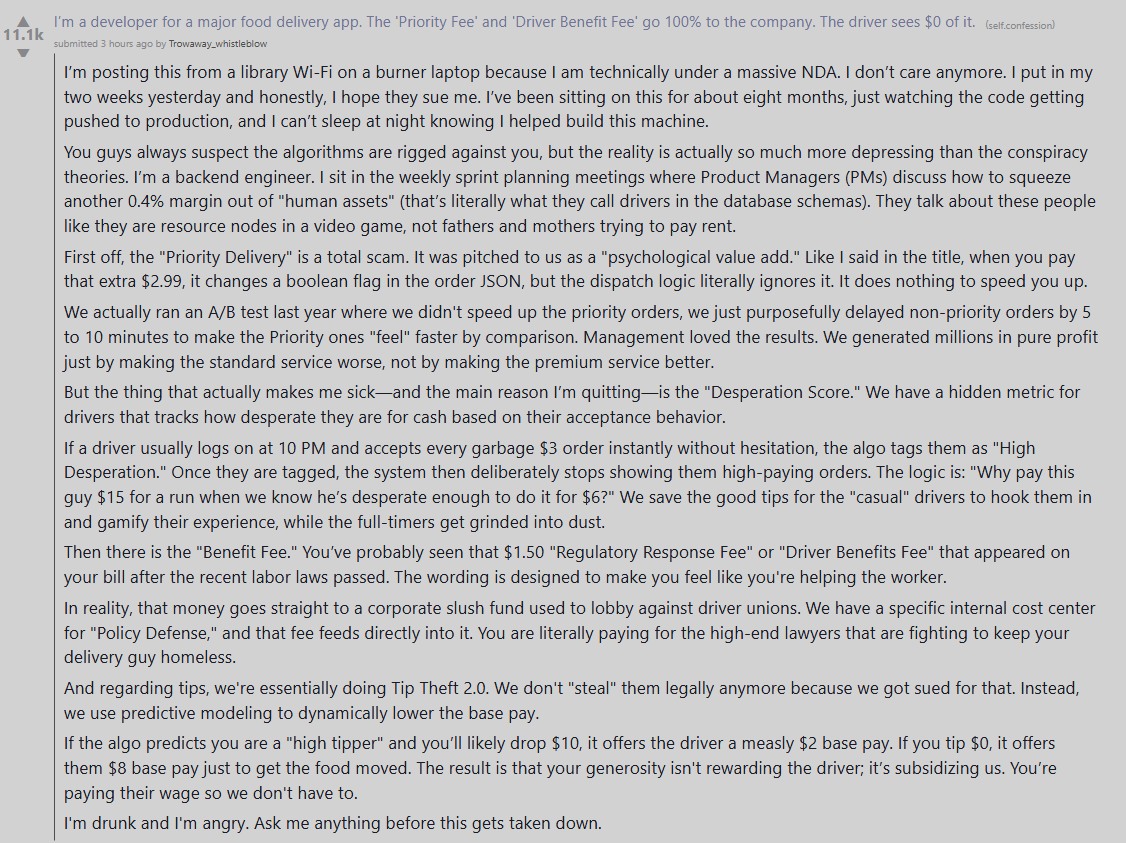

there are essays, and then there are "violently shift your entire perception of reality" essays