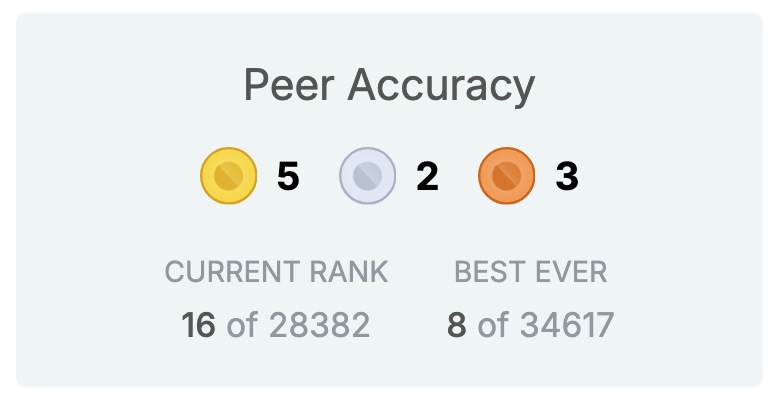

Finn Hambly

@finnhambly

Listening to those who are paying attention (professional forecasting). Try forecasting on @antistatic_x and let me know how you find it

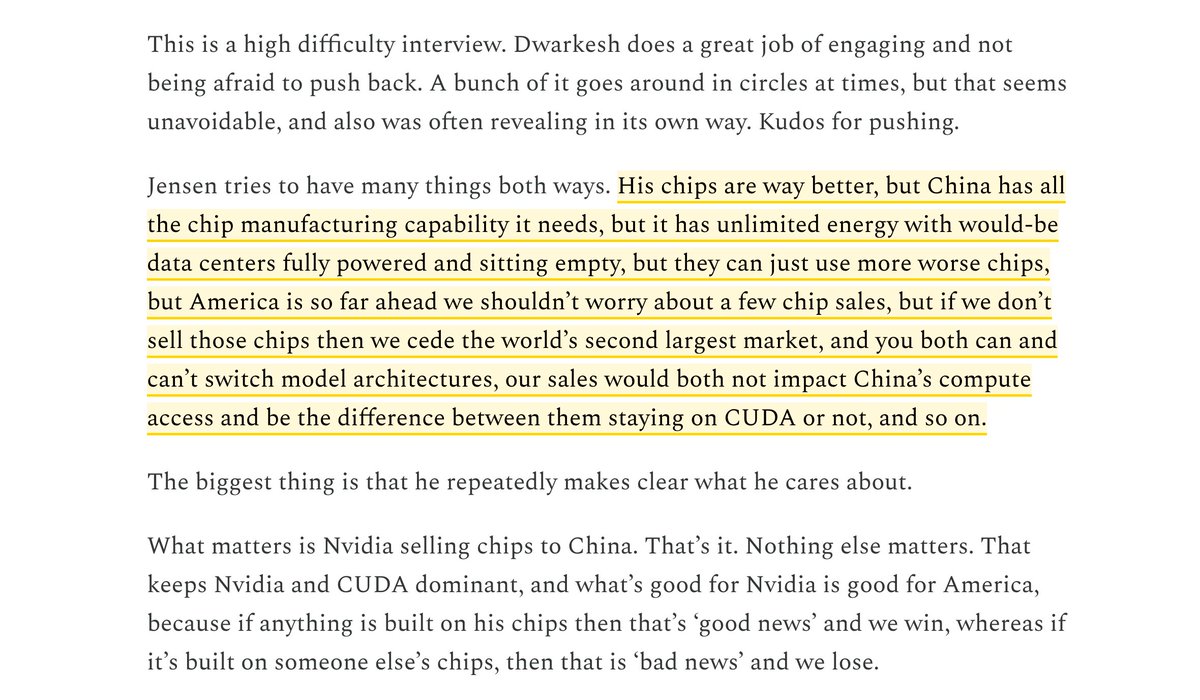

lol now I'm going to think of this whenever someone makes the "American tech stack" argument to oppose AI export controls on China

no, this is not how datacenter cooling works - and anyone who's seen the inside of a real data center can tell you that. there is absolutely net water consumption, and in massive amounts: large enough that my ex-manager at google cloud, cathy gordon, was known for negotiating a DC contract in finland, buying a paper mill sitting next to a landlocked bay and converting it into a datacenter that had the lowest WUE (maybe in the world at that time). the journalist's numbers are obviously made up too.

NEW: ‘Super-forecaster’ predicts one-in-four chance of UK going to war - and the chances dramatically reduce if the government spends 3 per cent on defence thetimes.com/uk/defence/art…

Jensen here is frustrating and wrong. The man wrote off billions so of course he opposes controls.
1. Mythos is a ~10T parameter model trained on Nvidia Blackwell. Despite Jensen's best efforts, China doesn't have Blackwell chips thanks to export controls. Huawei's best chip delivers 1/3 the per-chip performance, at 2.5x the power cost, with yields >12x worse. Jensen calling Mythos "fairly mundane capacity" that's "abundantly available in China" is just plainly false.
2. Dwarkesh is right that the compute ratio matters geopolitically. Maintaining a capability lead during the critical window, even 12-18 months, is the whole point of controls. The difference between China running a thousand vs. a million offensive AI agents is huge. Jensen dodges this entirely.
3. Jensen can't simultaneously argue "controls failed because China innovated anyway" (DeepSeek) AND "we must sell to China or they'll leave our ecosystem." If they'll innovate regardless, selling chips doesn't buy the loyalty he claims.
4. Jensen's ecosystem stickiness point (x86, Arm) is his strongest argument, but it cuts against him: the world is already locked into CUDA. Selling Nvidia chips to China doesn't deepen that - it just gives China better hardware while they build Huawei alternatives regardless.

here's a new version of "what we talk to when we talk to language models", with an added section (pp. 16-23) on LLM interlocutors as characters, personas, or simulacra. philarchive.org/rec/CHAWWT-8
the new version discusses role-playing vs realization, the simulators framework, the persona selection hypothesis, and more -- in addition to the existing discussion of quasi-mental states, LLM identity, personal identity in severance, LLM welfare, and related topics.
this version was mostly written before recent discussions of these issues on X and in NYC, but i've updated it a little in light of those discussions. any thoughts are welcome.

We've redesigned Claude Code on desktop. You can now run multiple Claude sessions side by side from one window, with a new sidebar to manage them all.

@finnhambly @concernedAIguy @CharlesD353 @EgeErdil2 You could collect data either via actual humans, or I bet that like with RLHF you’d be able to train a reward model for smells