Will Finger

907 posts

@fiwill

Product Designer, Web3 On-chain, AI engineer. Married. Father. Learning love with Jesus.

Starting Thursday, we'll be updating our revenue sharing incentives to better reward the content we want on X: We will be giving more weight to impressions from your home region, to encourage content that resonates with people in your country, in neighboring countries, and with people who speak your language. While we appreciate everyone's opinion on American politics, we hope this will disincentivize gaming the attention of US or Japanese accounts and instead drive diverse conversations on the platform. We invite creators to start building an audience locally. X will be a much richer community when there are relevant posts for people in all parts of the world.

wtf chrome has vertical tabs now. finally

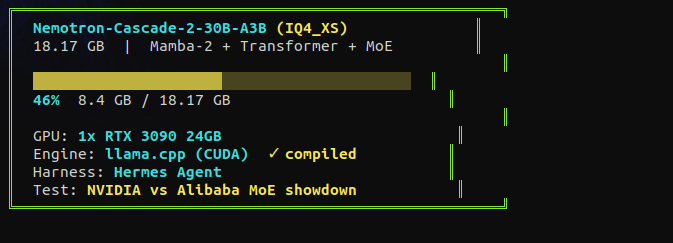

the hype around this model settled fast. good. now i can test it without the noise. NVIDIA released nemotron cascade. 30B total, 3B active. fits on a single RTX 3090. hybrid mamba MoE. gold medal on the international math olympiad with only 3 billion active parameters. they say it beats qwen on math, code, and reasoning. i tested qwen 3.5 35B-A3B on a single 3090 at 112 tok/s. now same card, same tests, different architecture. mamba vs deltanet. nvidia vs alibaba. receipts incoming tonight.

I cracked it. Qwen 3.5 35B Local! 120 t/s generation. 120K context. Vision ENABLED. All on 16GB VRAM. All GPU. Zero compromise. Here's exactly how 👇