Simon Frieder

219 posts

@friederrrr

Making the hills LLMs can climb towards becoming Math Copilots. AIMO Prize Manager. https://t.co/ir85qxw65J (Opinions my own.)

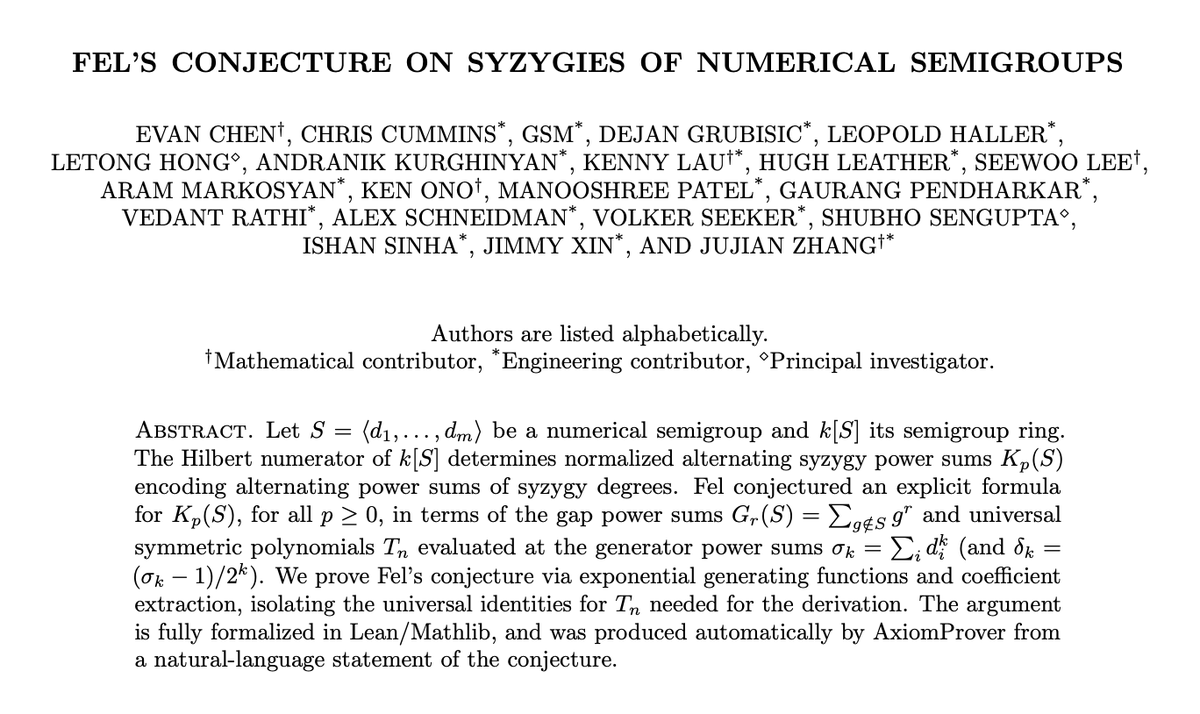

In a year, we will be living in a world of mathematical abundance.

1/ Having a strong Lean engine is definitely nice to have, but there are limits to what is natural to do in Lean (or in any formal system). I'm reminded here of the nice example that Patrick Massot mentioned in his talk about the concept of ... limits in calculus [1] :) If you want to formalize every variant of the limit of a function at a point in a "naive" way, there are many definitions to state: the limit at a point equaling a number, the limit at a point with that point removed equaling infinity, etc. If you count them, they apparently amount to 256 (!) definitions (see the 45m30s mark of the video). There are two ways out of this: 1) In practice, no one gets taught all 256 definitions; instead, one teaches the "abstract generator" behind the definitions. 2) One abstracts all of these away by using filters, and then just teaches limits in the setting of filters. Both ways also help to reduce the sprawling number of plumbing lemmas (such as lemmas on composing two limits) that one would otherwise have to prove about limits (4096 according to the video, which seems plausible). Both solutions are possible to execute in Lean, but are awkward to perform. [1] youtube.com/watch?v=1iqlhJ…
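To make the second way out concrete, here is a minimal Lean 4 sketch, assuming a recent Mathlib. It is my own illustration, not code from the talk: several "different" limit definitions become one predicate, `Filter.Tendsto`, applied to different filters, and a single composition lemma, `Filter.Tendsto.comp`, covers every combination. (The quoted counts fit this picture arithmetically, since 256 = 16^2 and 4096 = 16^3, but reading them as 16 "modes" per slot is an assumption on my part.)

```lean
import Mathlib

open Filter Topology

-- f(x) tends to b as x tends to a.
example (f : ℝ → ℝ) (a b : ℝ) : Prop :=
  Tendsto f (𝓝 a) (𝓝 b)

-- f(x) tends to +∞ as x tends to a, with the point a itself removed.
example (f : ℝ → ℝ) (a : ℝ) : Prop :=
  Tendsto f (𝓝[≠] a) atTop

-- f(x) tends to b as x tends to +∞.
example (f : ℝ → ℝ) (b : ℝ) : Prop :=
  Tendsto f atTop (𝓝 b)

-- One composition lemma serves all combinations of source and target filters.
example {f g : ℝ → ℝ} {a b : ℝ}
    (hf : Tendsto f (𝓝[≠] a) atTop) (hg : Tendsto g atTop (𝓝 b)) :
    Tendsto (g ∘ f) (𝓝[≠] a) (𝓝 b) :=
  hg.comp hf
```

The price is that a reader has to learn what `𝓝 a`, `𝓝[≠] a` and `atTop` mean before even the simplest limit statement becomes readable.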

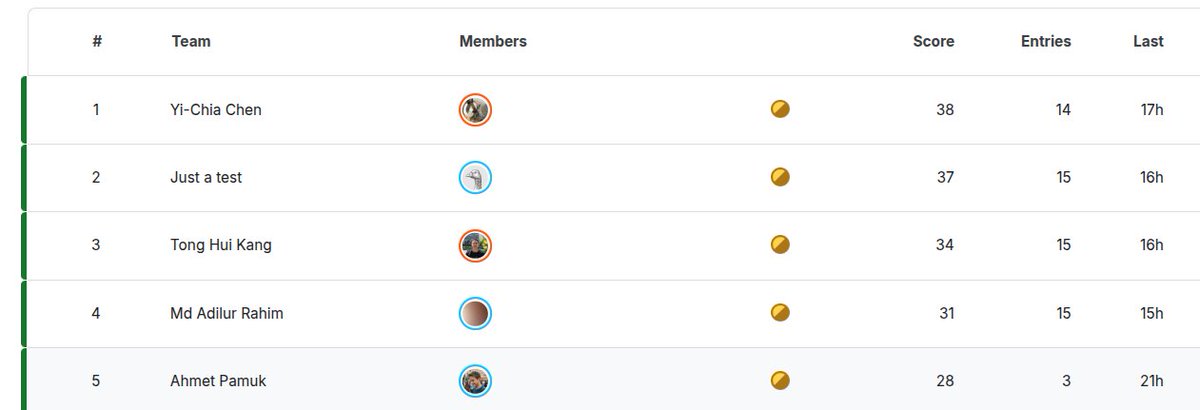

GPT-5-Pro solved, in just 15 minutes (without any internet search), the presentation problem known as “Yu Tsumura’s 554th Problem.” arxiv.org/pdf/2508.03685 This is the first model to solve this task completely. I expect more such results soon — the model demonstrates a strong grasp of elementary abstract algebra reasoning.