Pavlo

7.7K posts

@fxposter

Systems engineer @WixEng, husband and father

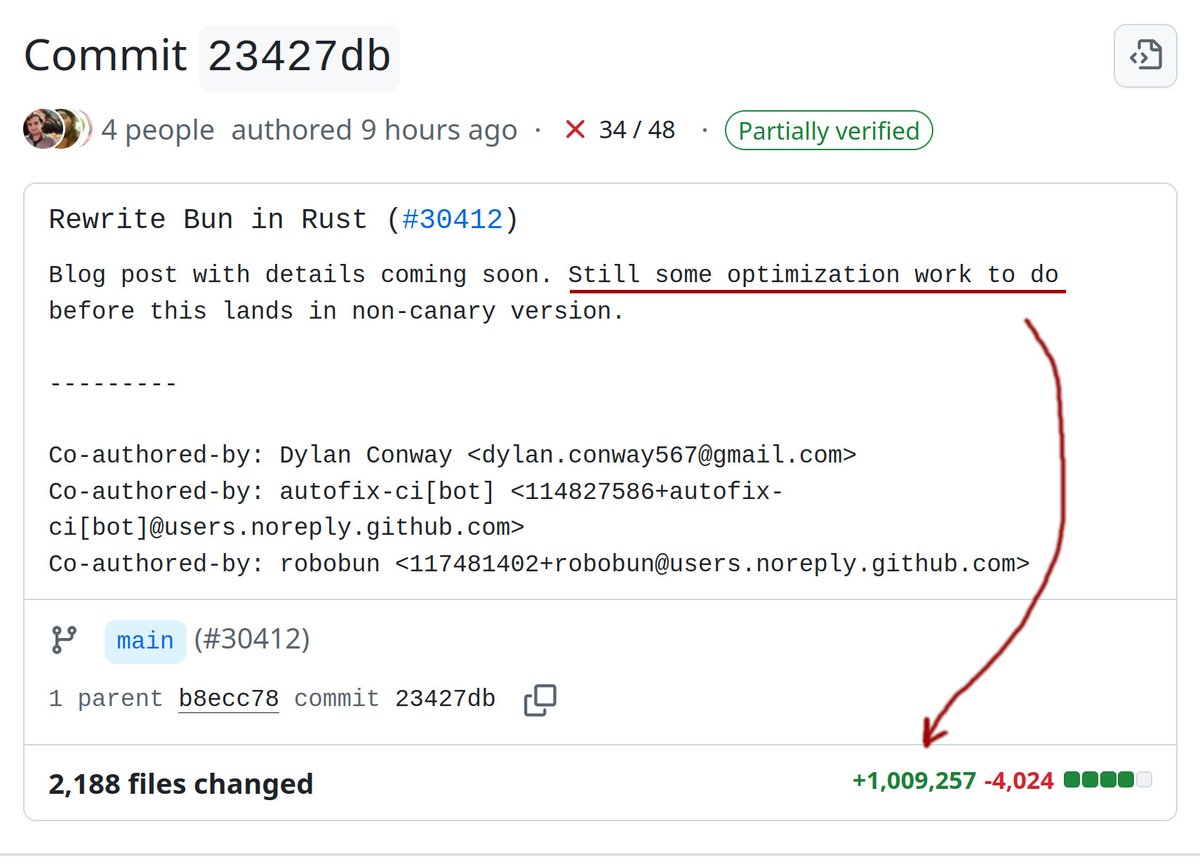

Bun v1.3.14 releases tomorrow. If we do merge the Rust rewrite, this would be the last version in Zig

Similar space, but very different approach:

- Built-in harness, so concepts like "session" and "subagent" are first-class. You could build something like a vibe-coding platform much easier on Flue vs. AI SDK.
- Built-in sandbox, so the agent is expected to be able to navigate a file-system, run bash commands, etc. Bring your own hosted sandbox, or use our in-memory virtual sandbox (just-bash).
- Framework vs. SDK. So you literally run `flue build` to get a deployable agent, or `flue run` to run the agent locally (great for CI, etc).

A few other things but that's the meat of it. Appreciate the Q!

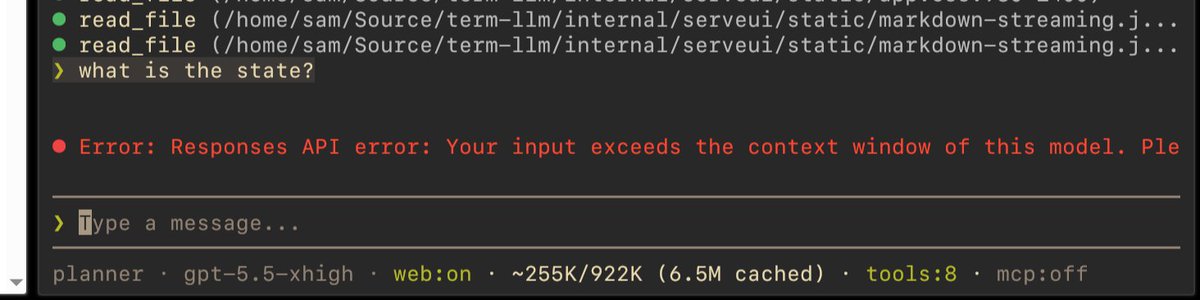

Introducing GPT-5.5

A new class of intelligence for real work and powering agents, built to understand complex goals, use tools, check its work, and carry more tasks through to completion. It marks a new way of getting computer work done. Now available in ChatGPT and Codex.