Sabitlenmiş Tweet

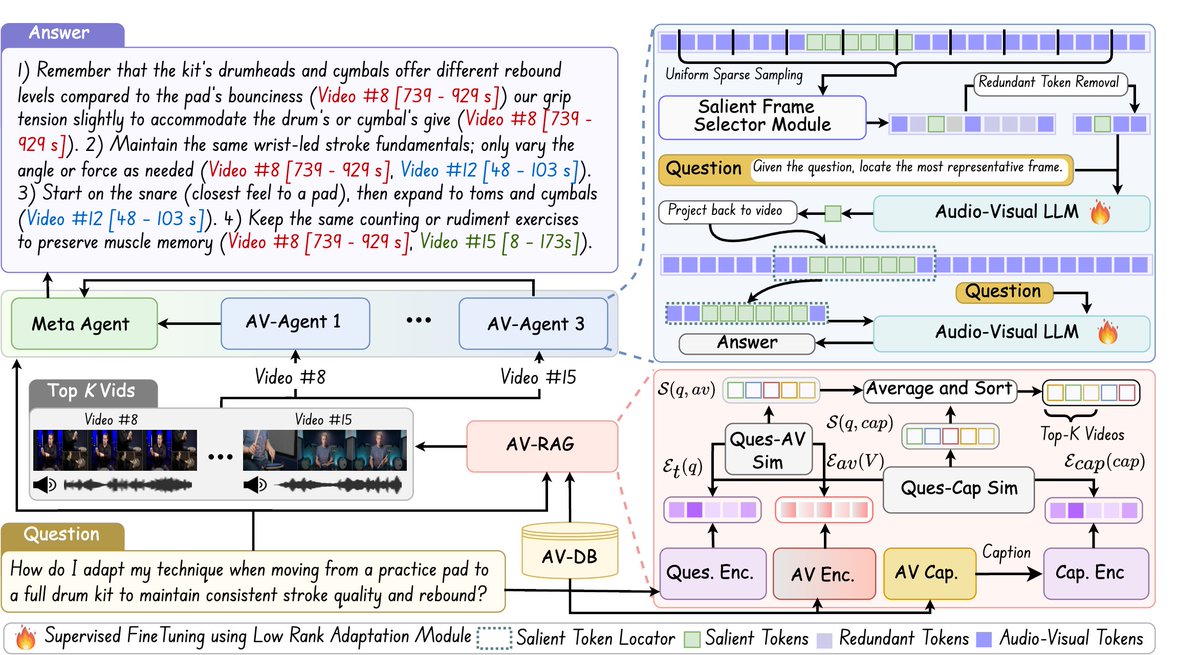

Thrilled to share that our team has multiple papers accepted at #NeurIPS2025 🎉🚀

We’re excited to contribute to advancing multi-modal learning, physical reasoning, and embodied AI.

Here’s a quick overview of the works 👇🧵

English

GAMMA UMD

1.1K posts

@gammaumd

Geometric Algorithms for Modeling, Motion, and Animation research group: UNC Chapel Hill (1992-2018); University of Maryland, College Park (2018 onwards)

🎶 Meet Audio-Flamingo 3 – a fully open LALM trained on sound, speech, and music datasets. 🎶 Handles 10-min audio, long-form text, and voice conversations. Perfect for audio QA, dialog, and reasoning. On @huggingface ➡️ huggingface.co/nvidia/audio-f… From #NVIDIAResearch.

Can decades old ideas from #psychology help fix critical issues in modern LLM alignment? 🤔 We're tapping into #BoundedRationality & 'satisficing principles' to build an alternate way to align LLMs. Our new #ICML2025 paper 👇 🧵 arxiv.org/pdf/2505.23729