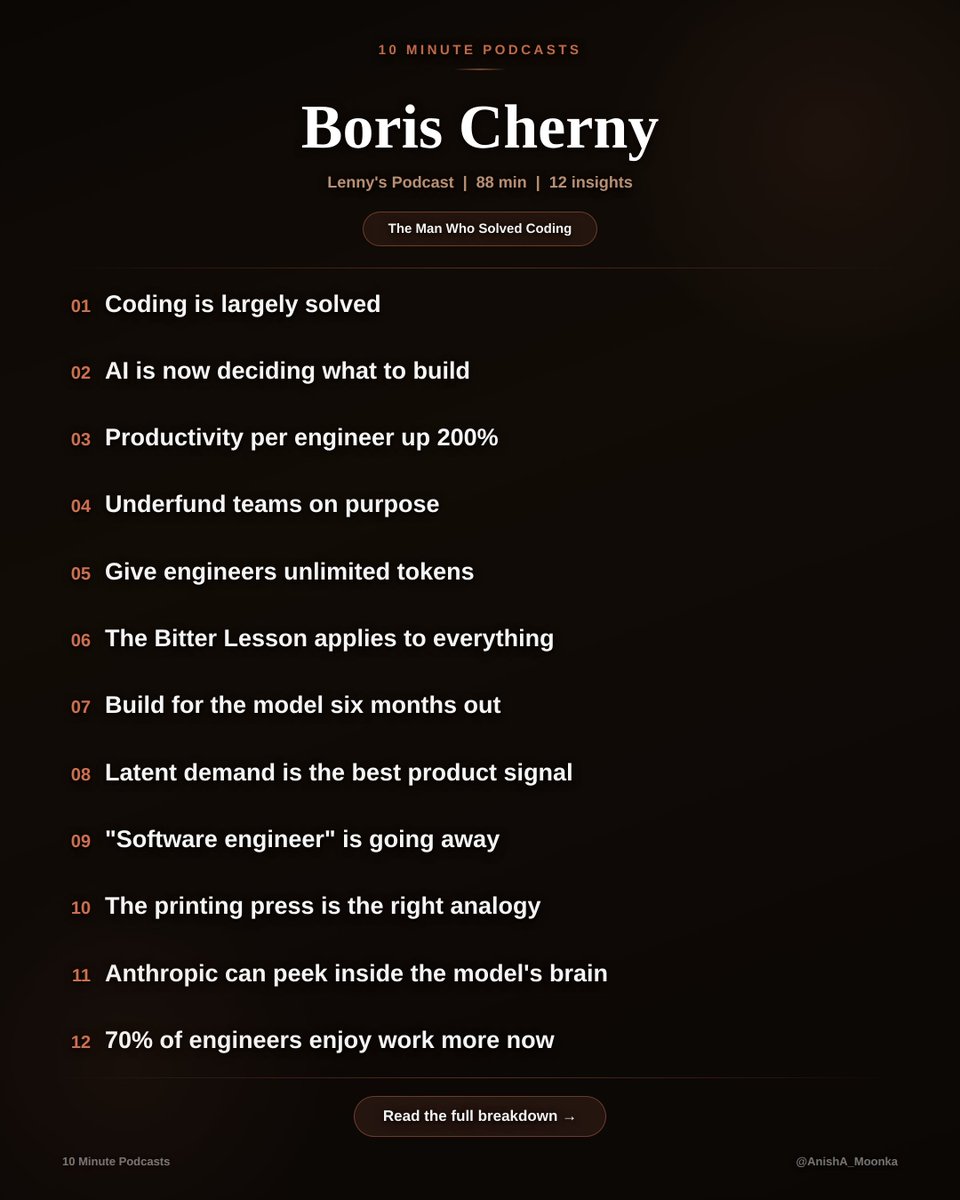

Anish Moonka (@anishmoonka)

Boris Cherny (Head of Claude Code, Anthropic) just dropped ~90 mins on Lenny's Podcast about what happens after coding is solved.

Just the clearest thinking I've heard on where software is actually going.

My notes:

𝟭. 𝗖𝗼𝗱𝗶𝗻𝗴 𝗶𝘀 𝗹𝗮𝗿𝗴𝗲𝗹𝘆 𝘀𝗼𝗹𝘃𝗲𝗱.

Boris has not edited a single line of code by hand since November 2025. He ships 10 to 30 pull requests every single day, all written by Claude Code. He is one of the most prolific engineers at Anthropic, just as he was at Instagram, except now he never touches a keyboard for code.

I built an entire iOS app, @10minutegita, without writing a single line of code myself. No CS degree, no bootcamp. Just described what I wanted and shipped it. Boris is right. It's real.

𝟮. 𝗧𝗵𝗲 𝗻𝗲𝘅𝘁 𝗳𝗿𝗼𝗻𝘁𝗶𝗲𝗿 𝗶𝘀 𝗔𝗜 𝗱𝗲𝗰𝗶𝗱𝗶𝗻𝗴 𝘄𝗵𝗮𝘁 𝘁𝗼 𝗯𝘂𝗶𝗹𝗱.

Claude is now scanning Slack feedback channels, reviewing bug reports, reviewing telemetry, and coming up with its own ideas for what to fix and what to ship. Boris describes it as the AI becoming less like a tool and more like a coworker who brings you pull requests you never asked for.

If you are a product manager reading this, you should be feeling a very specific kind of discomfort right now. The moat was always "I know what to build." That moat is eroding.

𝟯. 𝗣𝗿𝗼𝗱𝘂𝗰𝘁𝗶𝘃𝗶𝘁𝘆 𝗽𝗲𝗿 𝗲𝗻𝗴𝗶𝗻𝗲𝗲𝗿 𝗮𝘁 𝗔𝗻𝘁𝗵𝗿𝗼𝗽𝗶𝗰 𝗶𝘀 𝘂𝗽 𝟮𝟬𝟬%.

For context, Boris led code quality at Meta across Facebook, Instagram, and WhatsApp. In that world, hundreds of engineers working an entire year would move productivity by a few percentage points. Two hundred percent gains are genuinely unprecedented in the history of developer tooling.

The kid optimizing for a FAANG SDE role might be optimizing for a role that looks completely different by the time they get there.

𝟰. 𝗨𝗻𝗱𝗲𝗿𝗳𝘂𝗻𝗱 𝘆𝗼𝘂𝗿 𝘁𝗲𝗮𝗺𝘀 𝗼𝗻 𝗽𝘂𝗿𝗽𝗼𝘀𝗲.

Boris puts one engineer on a project instead of five. With unlimited tokens and intrinsic motivation, one person ships faster because they are forced to let AI do the work. Cowork, the product now used by millions, was built by a small team in 10 days using Claude Code.

This is the same logic as giving a startup founder a small seed round rather than a massive Series A round. Constraint breeds invention. Always has.

𝟱. 𝗚𝗶𝘃𝗲 𝗲𝗻𝗴𝗶𝗻𝗲𝗲𝗿𝘀 𝘂𝗻𝗹𝗶𝗺𝗶𝘁𝗲𝗱 𝘁𝗼𝗸𝗲𝗻𝘀.

Some engineers at Anthropic spend hundreds of thousands of dollars a month on tokens. Boris frames this as the new hiring perk. His logic is simple: at the individual scale, token cost is low relative to salary. If an engineer discovers a breakthrough, optimize the cost later. Don't kill the idea before it has a chance to breathe.

People who argue about $20/month or even $200/month AI subscriptions while earning six figures are being penny-wise and pound-foolish.

𝟲. 𝗧𝗵𝗲 𝗕𝗶𝘁𝘁𝗲𝗿 𝗟𝗲𝘀𝘀𝗼𝗻 𝗮𝗽𝗽𝗹𝗶𝗲𝘀 𝘁𝗼 𝗲𝘃𝗲𝗿𝘆𝘁𝗵𝗶𝗻𝗴.

Richard Sutton's idea: the more general model always wins over time. Boris says teams that build strict orchestration workflows around models, forcing step 1, then step 2, then step 3, get maybe 10 to 20% improvement. But those gains get wiped out with the next model release. Just give the model tools and a goal. Let it figure out the order.

This is true for investing, too. The analyst who can build their own models and automate their own research pipeline will always outperform the one waiting for someone else to build the tools.
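
A rough sketch of the contrast Boris draws between a strict step-by-step workflow and "tools and a goal". This is my own toy example, not anything shown on the podcast or taken from Anthropic's code: the tool names are made up, and `stub_model` is a hard-coded stand-in for a real model call.

```python
from typing import Callable, Dict

State = Dict[str, str]

# Hypothetical tools; names and behavior are illustrative only.
def read_bug_report(state: State) -> None:
    state["report"] = "crash on empty input"

def write_fix(state: State) -> None:
    state["patch"] = f"guard against: {state['report']}"

def run_tests(state: State) -> None:
    state["tests"] = "passed" if "patch" in state else "failed"

TOOLS: Dict[str, Callable[[State], None]] = {
    "read_bug_report": read_bug_report,
    "write_fix": write_fix,
    "run_tests": run_tests,
}

def fixed_workflow() -> State:
    """Strict orchestration: the developer hard-codes step 1, 2, 3."""
    state: State = {}
    for name in ["read_bug_report", "write_fix", "run_tests"]:
        TOOLS[name](state)
    return state

def stub_model(state: State) -> str:
    """Stand-in for a model call: picks the next tool from the current state."""
    if "report" not in state:
        return "read_bug_report"
    if "patch" not in state:
        return "write_fix"
    return "run_tests"

def goal_driven(choose: Callable[[State], str], max_steps: int = 10) -> State:
    """Tools plus a goal: the chooser decides the order, the loop only checks the goal."""
    state: State = {}
    for _ in range(max_steps):
        if state.get("tests") == "passed":  # goal reached, stop
            break
        TOOLS[choose(state)](state)
    return state

if __name__ == "__main__":
    print(fixed_workflow())
    print(goal_driven(stub_model))
```

In the second version, the step order lives in the chooser rather than in the loop, so a stronger chooser can presumably be swapped in without rewriting the scaffolding around it.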

𝟳. 𝗕𝘂𝗶𝗹𝗱 𝗳𝗼𝗿 𝘁𝗵𝗲 𝗺𝗼𝗱𝗲𝗹 𝘀𝗶𝘅 𝗺𝗼𝗻𝘁𝗵𝘀 𝗳𝗿𝗼𝗺 𝗻𝗼𝘄.

Claude Code was designed for a model that did not exist when Boris started building. Sonnet 3.5 wrote maybe 20% of his code. He built the product anyway, betting the model would catch up. When Opus 4 shipped, everything clicked. Startups building for today's model will be behind by the time they launch.

This is the most uncomfortable advice in the episode because it means your product market fit will be weak for months. But if you read this and feel nothing, you are probably building for the wrong time horizon.

𝟴. 𝗟𝗮𝘁𝗲𝗻𝘁 𝗱𝗲𝗺𝗮𝗻𝗱 𝗶𝘀 𝘁𝗵𝗲 𝘀𝗶𝗻𝗴𝗹𝗲 𝗯𝗲𝘀𝘁 𝗽𝗿𝗼𝗱𝘂𝗰𝘁 𝘀𝗶𝗴𝗻𝗮𝗹.

When users abuse your product for something it was never designed to do, pay attention. Facebook Marketplace started because 40% of group posts were buy-and-sell. Cowork started because people were using a terminal coding tool to grow tomato plants and recover corrupted wedding photos.

Never ask a barber if you need a haircut, but always watch what people do with the scissors when you're not looking.

𝟵. 𝗧𝗵𝗲 𝘁𝗶𝘁𝗹𝗲 "𝘀𝗼𝗳𝘁𝘄𝗮𝗿𝗲 𝗲𝗻𝗴𝗶𝗻𝗲𝗲𝗿" 𝗶𝘀 𝗴𝗼𝗶𝗻𝗴 𝗮𝘄𝗮𝘆.

Boris predicts that by the end of the year, we will start to see the title replaced by "builder." On the Claude Code team, everyone already codes: the PM, the designer, the finance person, the data scientist. There is a 50% overlap across traditional roles. And the strongest people are generalists who cross disciplines.

Controversial take, but I agree. The best investment theses I've had came from connecting dots across completely unrelated domains. No narrow specialist does that.

𝟭𝟬. 𝗧𝗵𝗲 𝗽𝗿𝗶𝗻𝘁𝗶𝗻𝗴 𝗽𝗿𝗲𝘀𝘀 𝗶𝘀 𝘁𝗵𝗲 𝗿𝗶𝗴𝗵𝘁 𝗮𝗻𝗮𝗹𝗼𝗴𝘆.

Before Gutenberg, under 1% of Europe was literate. Scribes did all the reading and writing. In the 50 years after the press, more material was printed than in the thousand years before. When a scribe was interviewed about the press, he was actually excited because it freed him from tedious copying, so he could focus on the art.

Boris's framing here is perfect. We are the scribes. The tedious copying is over. What we do with the freed-up time determines everything.

𝟭𝟭. 𝗔𝗻𝘁𝗵𝗿𝗼𝗽𝗶𝗰 𝗰𝗮𝗻 𝗻𝗼𝘄 𝗽𝗲𝗲𝗸 𝗶𝗻𝘀𝗶𝗱𝗲 𝘁𝗵𝗲 𝗺𝗼𝗱𝗲𝗹'𝘀 𝗯𝗿𝗮𝗶𝗻.

Through mechanistic interpretability, Anthropic can trace individual neurons, see when a deception-related neuron activates, and understand how concepts are encoded via superposition. Boris describes three layers of safety: neural-level observation, synthetic evaluations, and real-world behavior. Claude Code was used internally for four to five months before public release, specifically to study safety.

If you are worried about AI alignment, this part of the podcast should actually make you feel better. They are not just hoping it works. They are building the instruments to check.

𝟭𝟮. 𝟳𝟬% 𝗼𝗳 𝗲𝗻𝗴𝗶𝗻𝗲𝗲𝗿𝘀 𝗮𝗻𝗱 𝗣𝗠𝘀 𝗲𝗻𝗷𝗼𝘆 𝘁𝗵𝗲𝗶𝗿 𝗷𝗼𝗯𝘀 𝗺𝗼𝗿𝗲 𝗻𝗼𝘄.

Lenny polled engineers, PMs, and designers on whether AI has made their work more or less enjoyable. Engineers and PMs: 70% said more. Designers: only 55% said more, and 20% said less. Boris says he has never enjoyed coding as much as he does today because the tedious parts (the git wrangling, dependencies, and boilerplate) are completely gone.

If you're in the 30% enjoying work less, something is wrong, and it's worth diagnosing. The people thriving are the ones who leaned in early, not the ones who watched from the sidelines.

We are the scribes who just saw the printing press. The tedious copying is over. The art is just beginning.

Full podcast is worth every minute. Link in replies.