Mayank Singh

8.9K posts

@geekmarcus

PhD student at @UArizona | Interested in #NLProc, History, #Astronomy, and more | Part-time @GameOfThrones fan account

Joined June 2010

192 Following, 404 Followers

Mayank Singh reposted

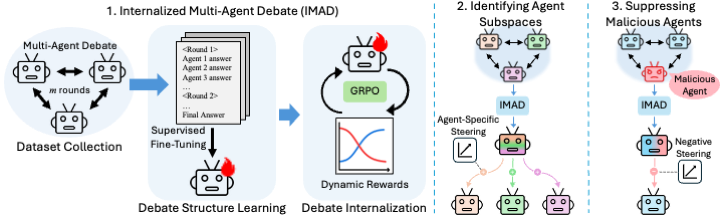

Our paper "Latent Agents" was accepted to #ACL2026 Main!

We distill multi-agent debate into a single LLM, matching debate performance at a fraction of the cost. We also show that internalized agents are discoverable and controllable.

Huge thanks to @amuuueller and Dokyun Lee!

This was accepted to ACL Main Conference! Thanks and congrats to my collaborators! 🎉🎊🎇 #acl2026

Mayank Singh @geekmarcus

🚨 Preprint alert: Grammar Search for Multi-Agent Systems 🧵 Instead of asking LLMs to invent multi-agent code from scratch, we define a grammar of simple, composable components and search over valid system compositions. arxiv.org/abs/2512.14079

Mayank Singh reposted

@subhavsamarth Whenever filth shows up in the city, the townsfolk say: clean it up, or else some crazy intern will turn up.

Mayank Singh reposted

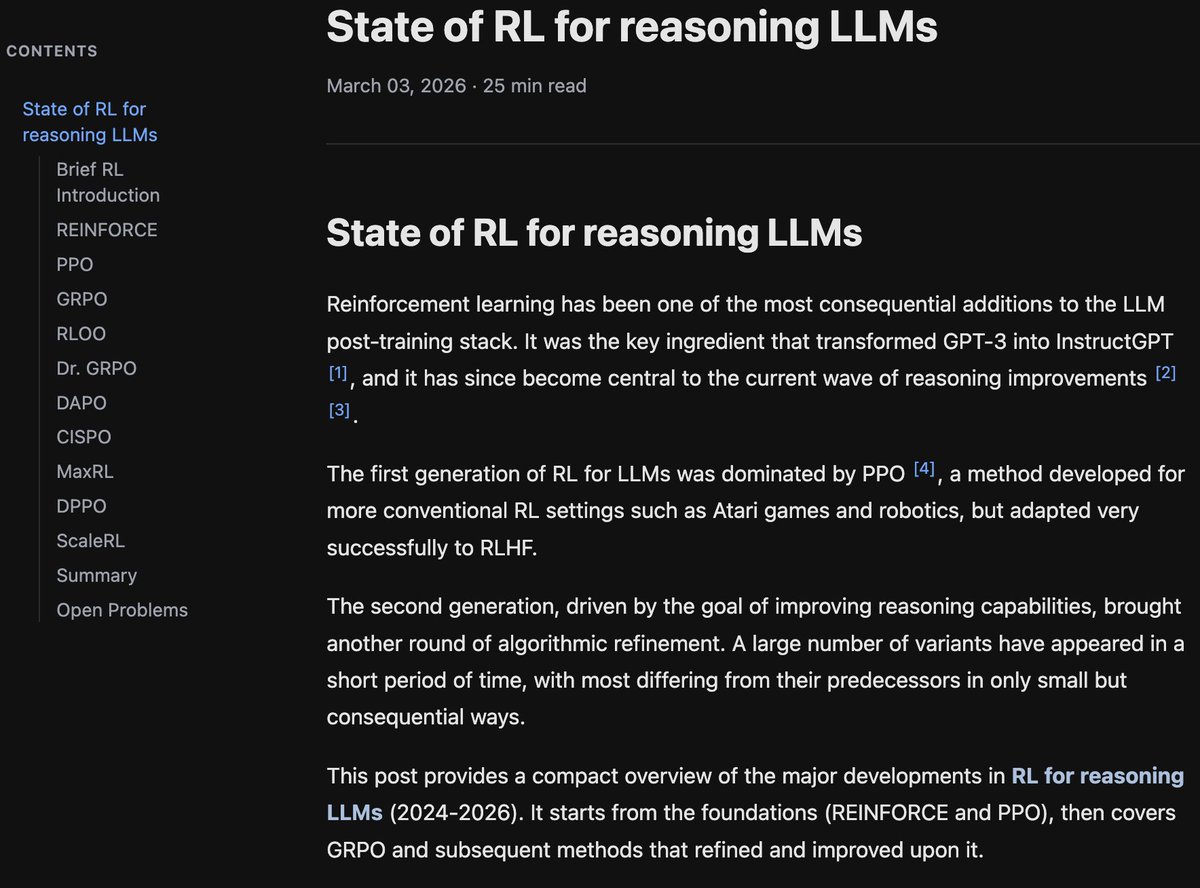

Finally finished!

If you're interested in an overview of recent methods in reinforcement learning for reasoning LLMs, check out this blog post: aweers.de/blog/2026/rl-f…

It summarizes ten methods, tries to highlight differences and trends, and has a collection of open problems

Mayank Singh reposted

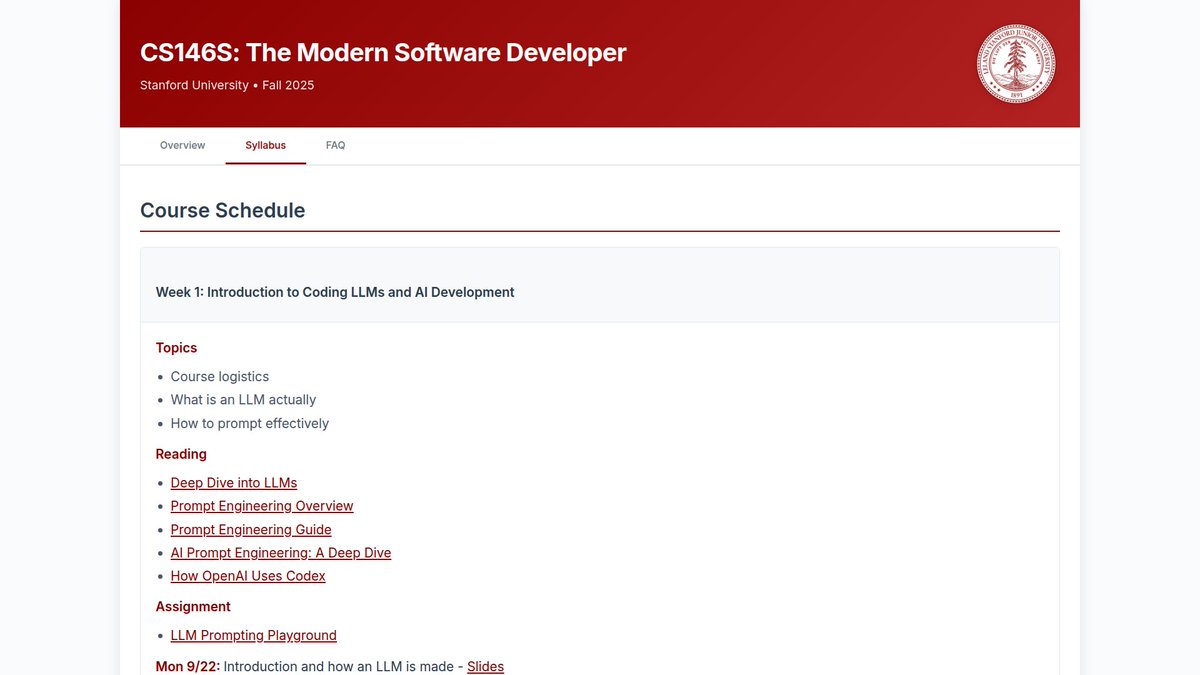

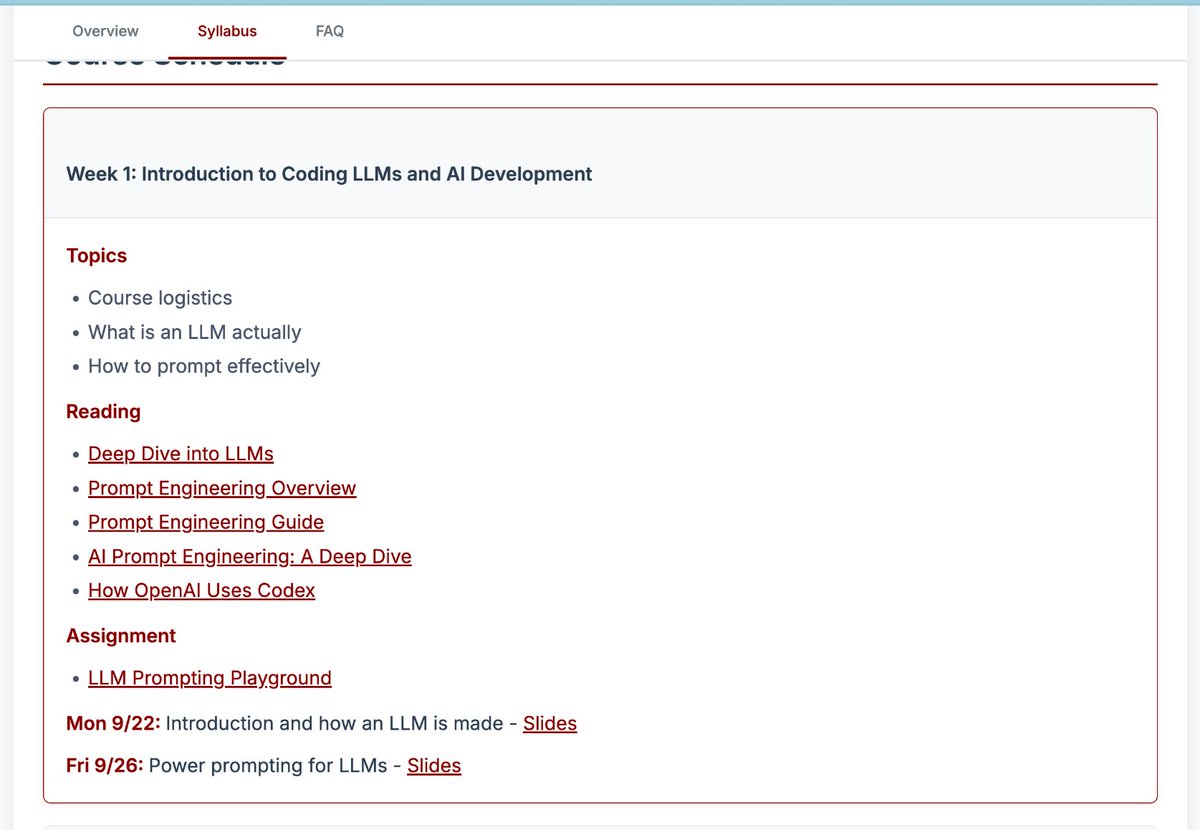

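Stanford University has actually launched a Vibe Coding course.

The course number is CS146S and its full title is "The Modern Software Developer". It runs for 10 weeks and covers, in order: prompt engineering, agent architecture, MCP, context engineering, security attack and defense, code review, automated app building, and deployment and operations.

themodernsoftware.dev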

Mayank Singh reposted

The hammer vs the anvil... such a cool shot! 👌

aki is mourning @alphalicent

maekar coming back for round two to slime dunk before baelor stopped him is kinda tickling me

Mayank Singh reposted

📊 Across math, QA, and logical reasoning benchmarks, this approach matches or improves over prior automatic prompt optimization methods while using substantially fewer optimization-phase tokens.

📄 arxiv.org/abs/2602.00997
