Andrey Vasnetsov

@generall931

CTO @ https://t.co/8JgB043VWL - The Vector Database

@StuartReid1929 @generall931 Yes, but sampling works :) The real reason none of those are used in production is that they aren't popular outside academic circles, because they lack proper tooling and UX.

Hey all! We did find a discrepancy in our previous BM42 benchmarks. Please don't just trust us; always check performance on your own data. Our best effort to correct it is here: github.com/qdrant/bm42_ev…

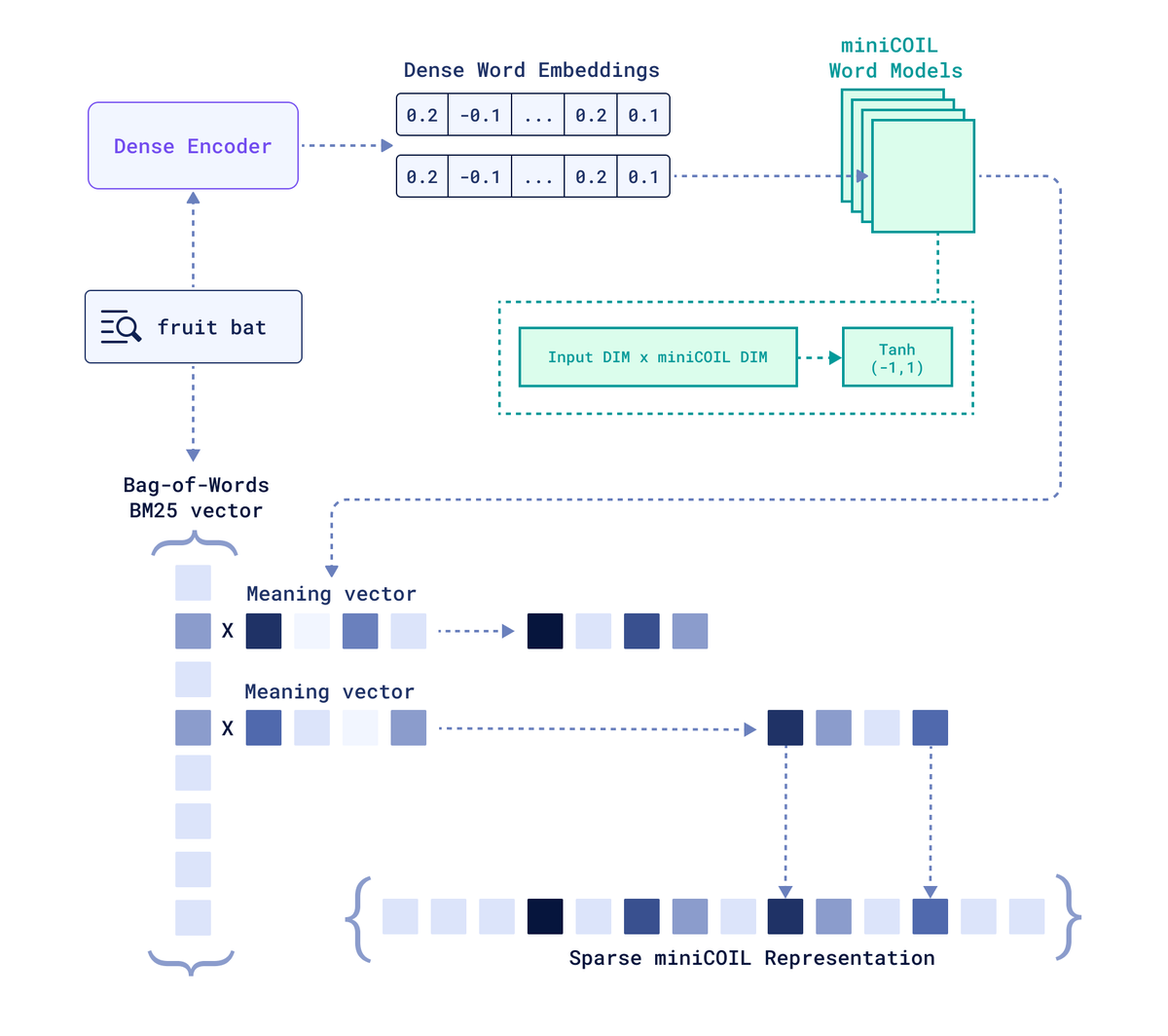

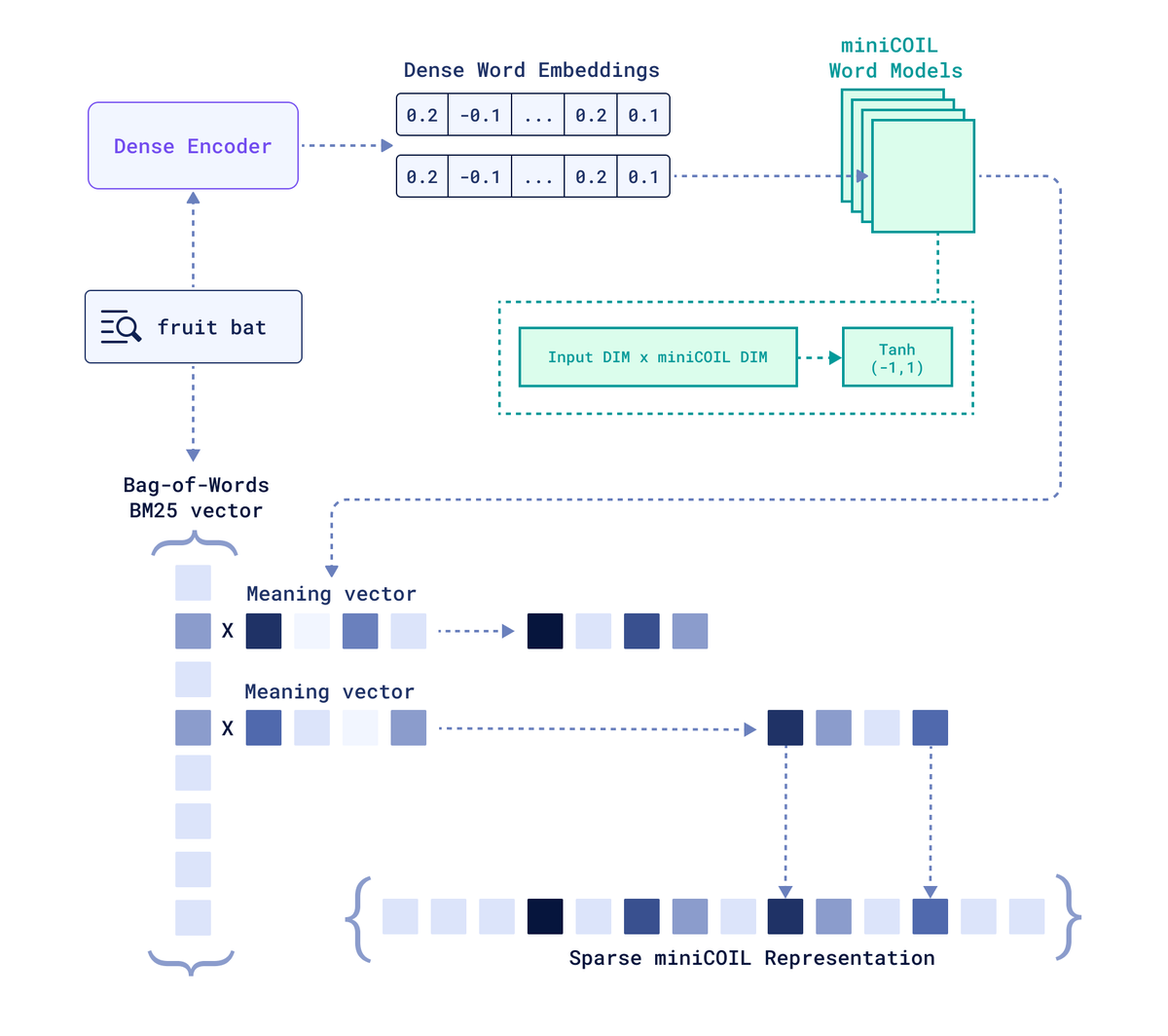

@zhoujinjing09 @StuartReid1929 Below is the image from BEIR. Things got better, mostly thanks to a lot more training data and better middle training.

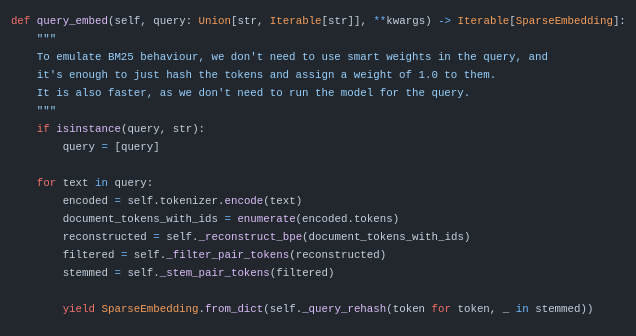

@generall931 @jobergum @aapo_tanskanen @qdrant_engine How about running BM42 on a different language? Or source code? BM42 does not even work for English and doesn't beat BM25, while needing a ton more compute.