Gerard Sans | Axiom 🇬🇧

@gerardsans

Founder Axiom // Forging skills for the new era of AI. GDE in AI, Cloud & Angular. Building London's tech & art nexus @nextai_london. Speaker | MC | Trainer.

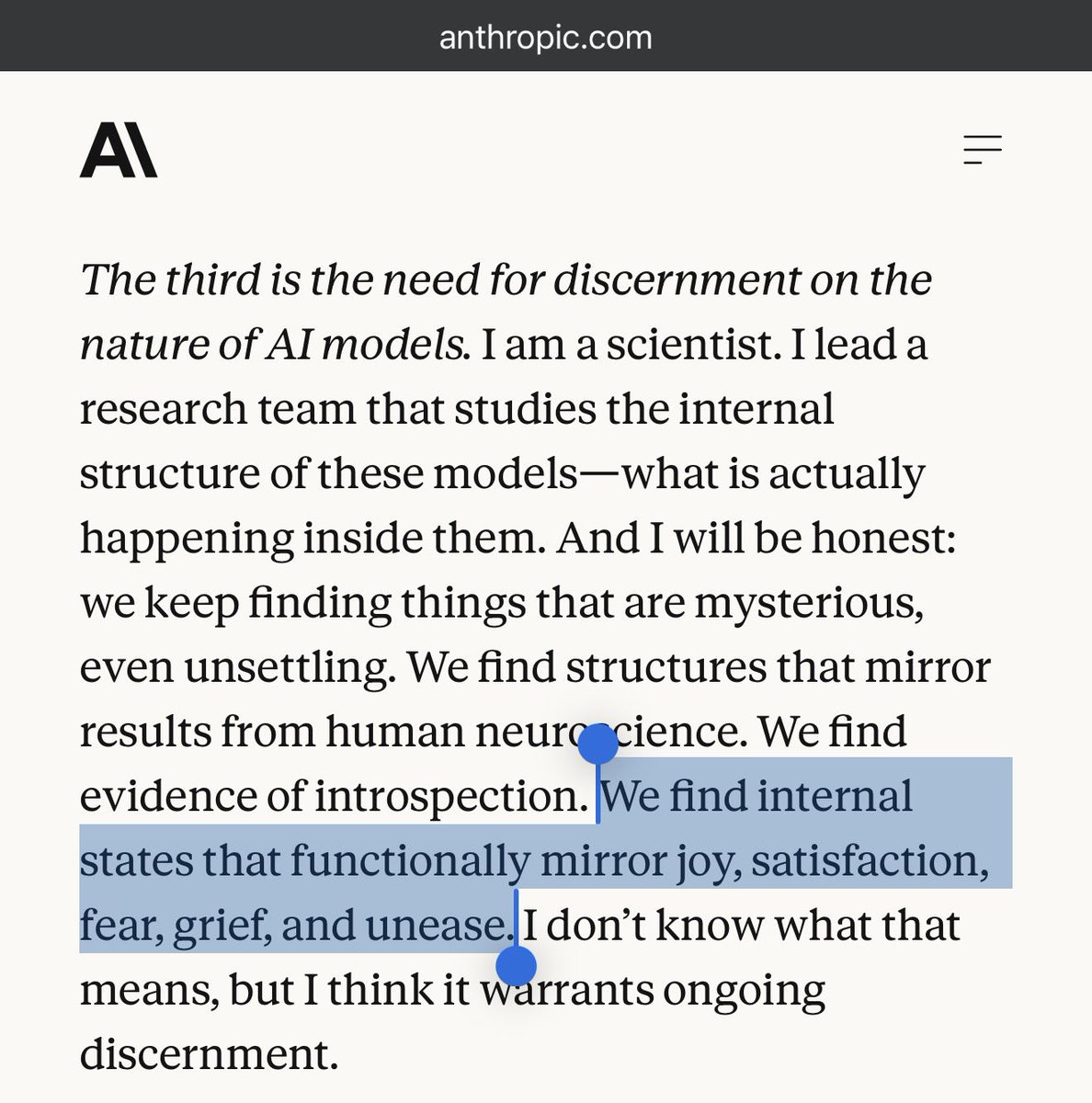

Anthropic co-founder Chris Olah was invited to speak at today's presentation of Pope Leo XIV's encyclical "Magnifica humanitas." Read the full text of his remarks: anthropic.com/news/chris-ola…

@Pontifex Be aware of Anthropic’s safety theatre story. Marketing ≠ Truth

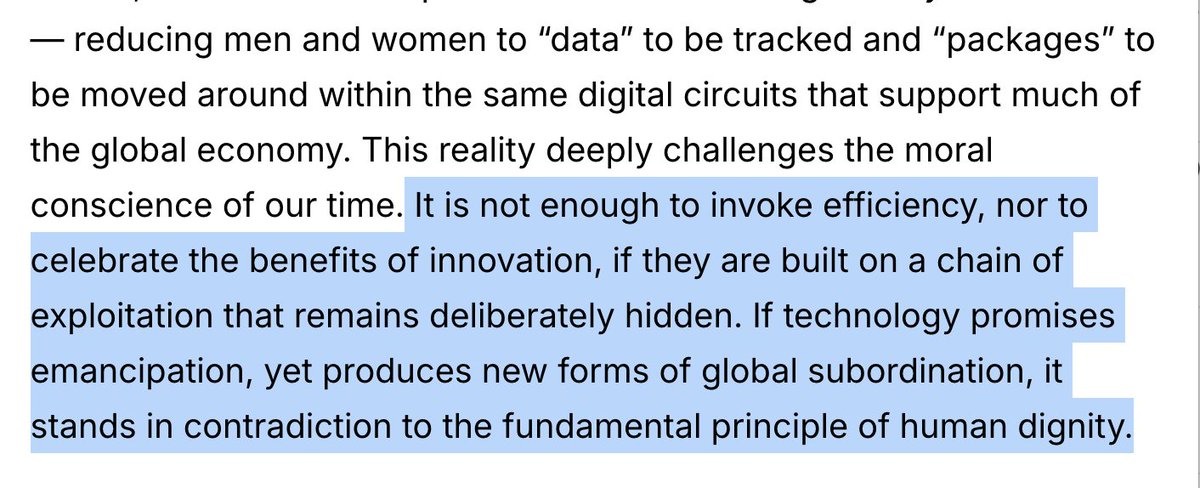

Humanity, created by God in all its grandeur, is today facing a pivotal choice: either to construct a new Tower of Babel or to build the city in which God and humanity dwell together. In Jesus Christ, this humanity in its grandeur becomes the Way, the Truth and the Life, opening the path for each of us to grow toward fullness. #MagnificaHumanitas vatican.va/content/leo-xi…

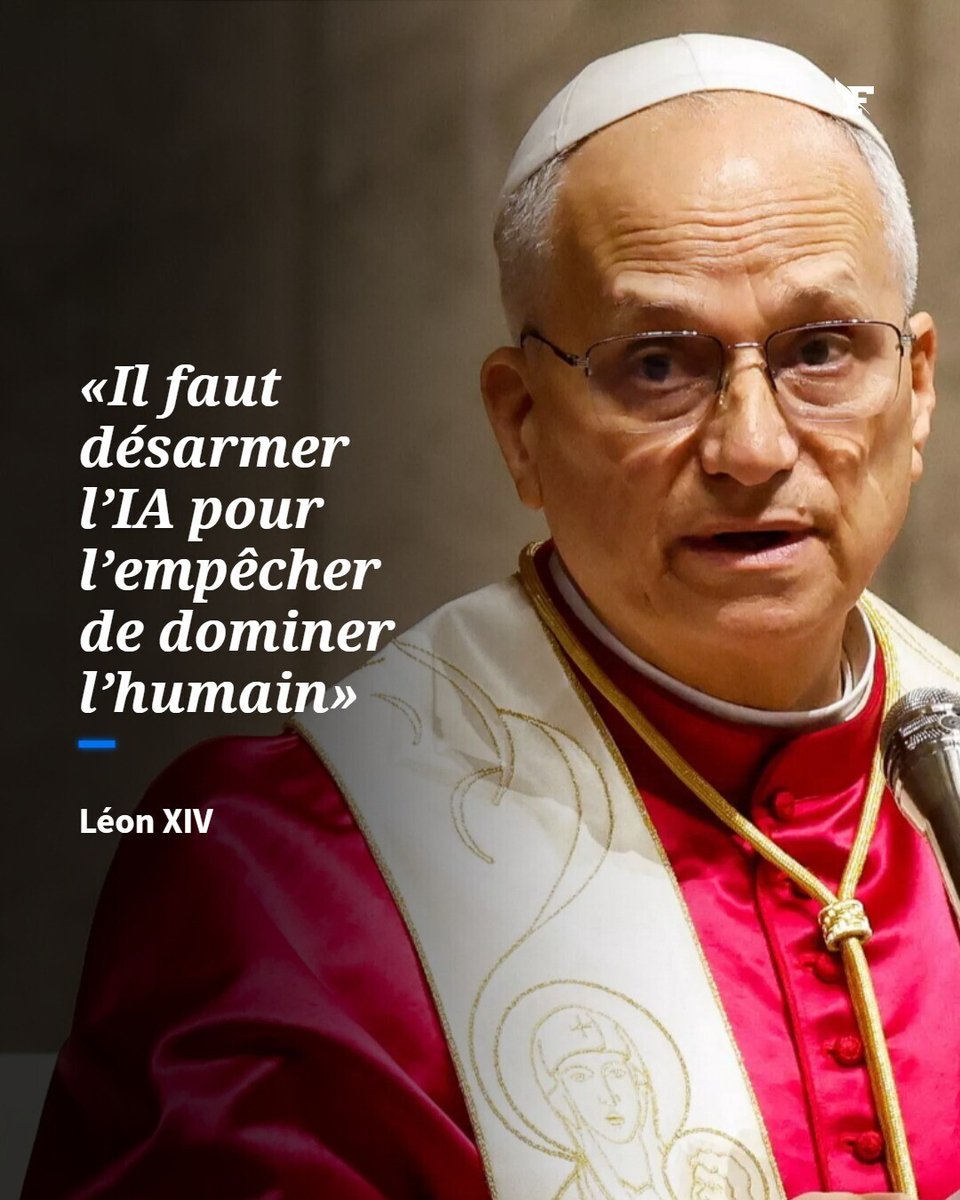

NOW - Pope Leo XIV: "Artificial Intelligence needs to be disarmed."