gruad

The United States just deployed the one weapon system that tells you exactly what comes next. 11 F-22 Raptors landed at Ovda Airbase in Israel’s Negev Desert today. They flew from RAF Lakenheath in England, supported by seven aerial refueling tankers, covering thousands of miles to reach Israeli soil. One of the original twelve turned back with a suspected fuel leak. The rest completed the transit and are now sitting on Israeli tarmac.

This has never happened before. Not during the ISIS campaign. Not during Blue Flag exercises. Not during Operation Midnight Hammer in June 2025. The F-22 Raptor, the most advanced air superiority fighter ever built, has never been based in Israel for a combat-oriented mission. Until today.

Now understand what the F-22 does, because it does not do what you think. The F-22 does not bomb nuclear facilities. It does not carry bunker busters. It is not a strike aircraft. The F-22 is a Suppression of Enemy Air Defenses platform. It kills the systems that protect targets. It neutralizes radar. It destroys surface-to-air missile batteries. It eliminates the S-300 and S-400 systems that Iran has spent decades layering around Fordow, Natanz, and Isfahan. The F-22 is not the punch. It is the hand that moves the shield out of the way so the punch can land.

Iran’s air defense architecture is the single obstacle between the B-2 Spirit bombers carrying 30,000-pound GBU-57 bunker busters and the centrifuge halls buried under 80 meters of granite at Fordow. The F-22’s entire purpose in this theater is to carve a corridor through that architecture, blind Iranian radar, destroy missile batteries along the ingress route, and ensure the bombers reach their targets without being engaged. When you deploy your SEAD package to the theater, you are not deterring. You are building the ingress corridor.

Only 195 F-22s were ever built. Approximately 180 remain operational. The United States just committed 11 of them, roughly 6 percent of the entire operational fleet of its most valuable airframe, to a single base in southern Israel. You do not forward-deploy 6 percent of an irreplaceable weapons system for signaling. You deploy it because the mission it was designed for is approaching.

Now connect this to what is already in theater. Two carrier strike groups with 150-plus aircraft. 500-plus total combat aircraft. 700 tonnes of munitions via C-17. 40 aerial refueling tankers. Three AWACS for airborne command and control. A P-8A mapping the Strait of Hormuz. Every ship cleared from Bahrain. Hundreds evacuated from Al Udeid. The IRGC massing at the Iraqi border. Khamenei’s shadow government activated. Modi landing in Israel tomorrow to collect alliance signatures. Geneva on Wednesday. The 48-hour deadline expiring before any of it begins. And now the SEAD package has arrived.

There is a sequence to air campaigns that has not changed since Desert Storm.
First, you position your strike aircraft. Done.
Second, you stage your munitions. Done.
Third, you deploy your tankers for sustained operations. Done.
Fourth, you establish airborne command and control. Done.
Fifth, you map the retaliation corridor. Done.
Sixth, you deploy your SEAD assets to suppress enemy air defenses along the ingress route. That sixth step happened today.
The seventh step is the phone call.

Germany has advised its citizens in Israel to prepare for airspace closures. India told its citizens to leave Iran. The US Embassy in Beirut is evacuating. Khamenei is dispersing his leadership. Iran is practicing closing the Strait of Hormuz. Everyone on every side of this conflict is preparing for the same event. The only people not preparing are the ones who think this is still about deterrence.

The F-22s are not in Israel to deter. They are in Israel to clear the sky. And you only clear the sky when something is about to fly through it.

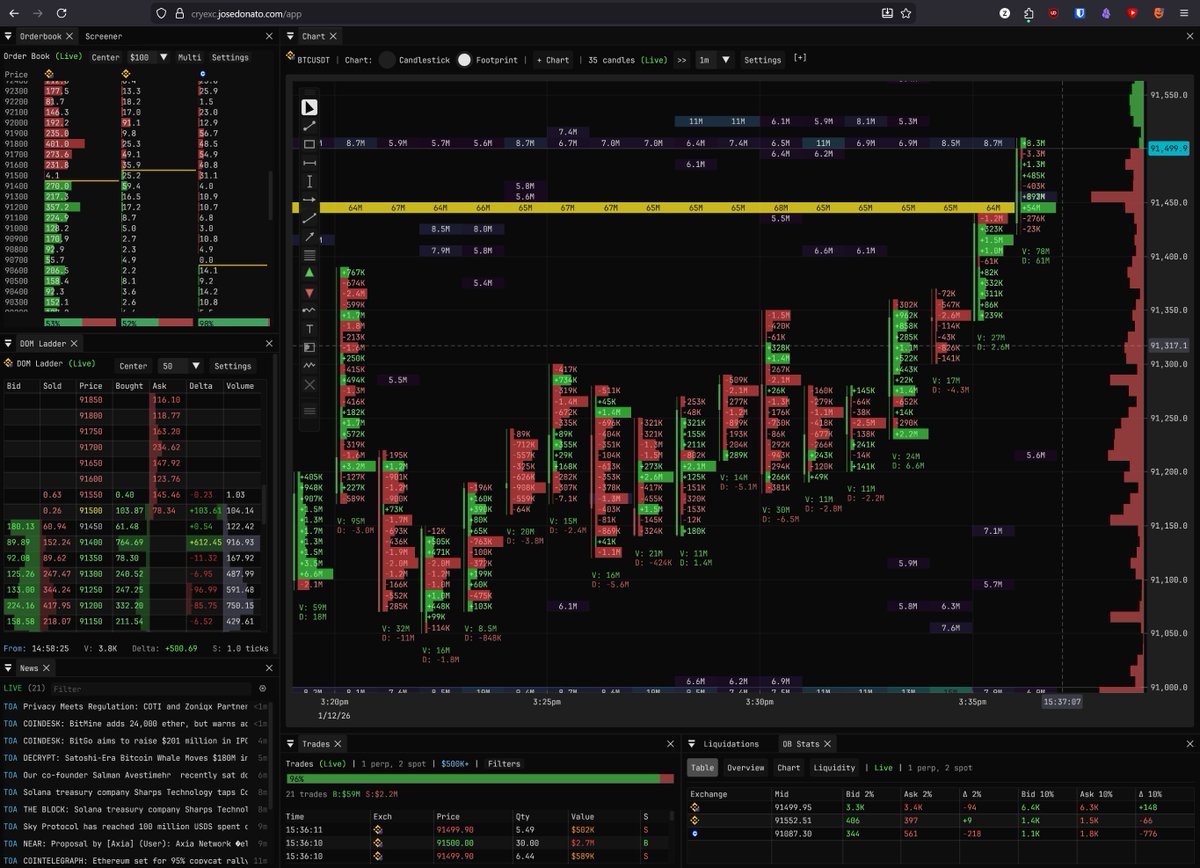

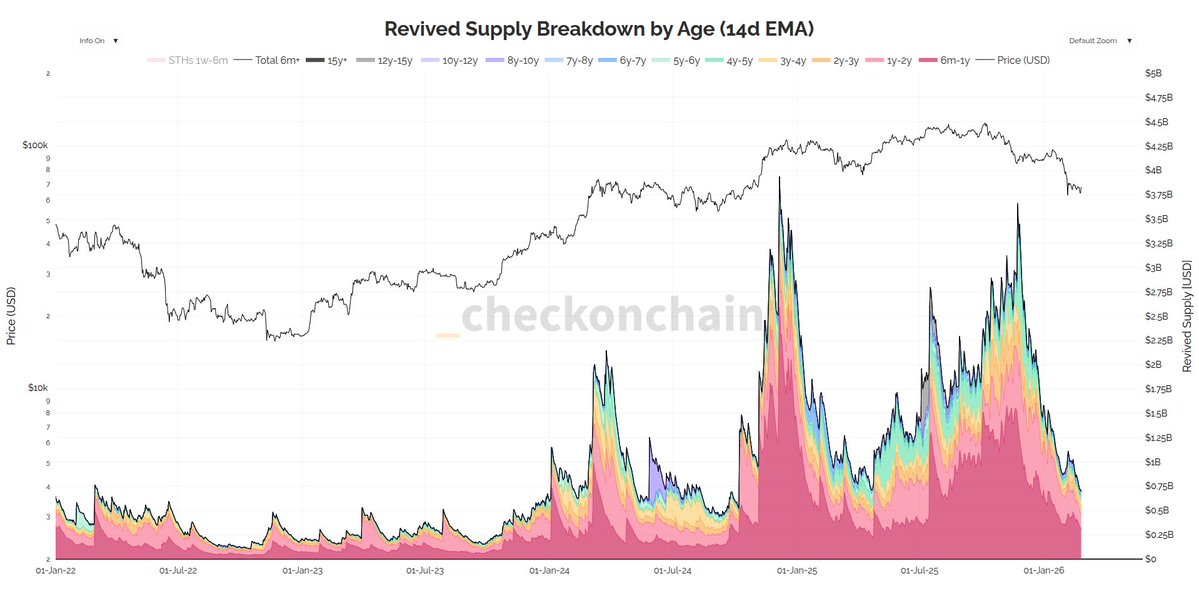

Everyone is asking: "Is Jane Street why Bitcoin isn't at $150k?" As expected, the answer is trickier than the question. But it's also more structurally unsettling than the conspiracy theory itself—and once you understand the actual mechanics, you won't be able to unsee them👇

Today the HHS DOGE team open sourced the largest Medicaid dataset in department history. This dataset contains aggregated, provider-level claims data for a specific billing code over time. For example, using this dataset, it would have been possible to easily detect the large-scale autism diagnosis fraud seen in Minnesota. Download the data yourself: opendata.hhs.gov

Breakthrough: Game-Theoretic Pruning Slashes Neural Network Size by Up to 90% with Near-Zero Accuracy Loss: Unlocking Edge AI Revolution!

I am testing this now on local AI and it is astonishing!

A new paper introduces Pruning as a Game: Equilibrium-Driven Sparsification of Neural Networks, a novel approach that treats parameter pruning as a strategic competition among weights. This method dynamically identifies and removes redundant connections through game-theoretic equilibrium, achieving massive compression while preserving – and sometimes even improving – model performance.

Published on arXiv just days ago (December 2025), the paper demonstrates staggering results: sparsity levels exceeding 90% in large-scale models with accuracy drops of less than 1% on benchmarks like ImageNet and CIFAR-10. For billion-parameter behemoths, this translates to a drastically smaller memory footprint (up to 10x smaller), faster inference (2-5x faster on standard hardware), and lower energy consumption – all without the retraining headaches of traditional methods.

Why This Changes Everything

Traditional pruning techniques – like magnitude-based or gradient-based removal – often struggle with “pruning regret,” where aggressive compression tanks performance, forcing costly fine-tuning cycles. But this new equilibrium-driven framework flips the script: parameters “compete” in a cooperative or non-cooperative game, where the Nash-like equilibrium reveals truly unimportant weights. The result? Cleaner, more stable sparsification that outperforms state-of-the-art baselines across vision transformers, convolutional nets, and even emerging multimodal architectures.

Key highlights from the experiments:
•90-95% sparsity on ResNet-50 with top-1 accuracy loss <0.5% (vs. 2-5% in prior SOTA).
•Up to 4x faster inference on mobile GPUs, making billion-parameter models viable for smartphones and IoT devices.
•Superior robustness: Sparse models maintain performance under distribution shifts and adversarial attacks better than dense counterparts.

This isn’t just incremental – it’s a paradigm shift. Imagine running GPT-scale reasoning on your phone, real-time video analysis on drones, or edge-based healthcare diagnostics without cloud dependency. By reducing the environmental footprint of massive training and inference, it also tackles AI’s growing energy crisis head-on.

The implications ripple across industries:
•Mobile & Edge AI: Affordable on-device intelligence explodes.
•Green Computing: Lower power draw for data centers and devices.
•Democratized AI: Smaller models mean broader access for startups and developing regions.

As AI scales toward trillion-parameter frontiers, techniques like this are essential to keep progress practical and inclusive.

Pruning as a Game: Equilibrium-Driven Sparsification of Neural Networks (PDF: arxiv.org/pdf/2512.22106)

I will continue my testing, but thus far the results are robust!
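
For readers who want a feel for the baseline the post compares against, here is a minimal sketch of plain magnitude-based pruning, plus the arithmetic behind the "up to 10x smaller" figure at 90% sparsity. This is not the paper's equilibrium-driven method. The function names `magnitude_prune` and `ideal_compression_ratio` and the toy weight list are hypothetical, chosen only for illustration, and the ratio ignores the overhead of storing the indices of the surviving weights.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the smallest-magnitude fraction `sparsity` of the weights.

    Classic magnitude-based baseline, NOT the game-theoretic method
    described in the post.
    """
    if not 0.0 <= sparsity < 1.0:
        raise ValueError("sparsity must be in [0, 1)")
    n_prune = int(len(weights) * sparsity)
    # Rank weight indices from smallest to largest absolute value.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned_idx = set(order[:n_prune])
    return [0.0 if i in pruned_idx else w for i, w in enumerate(weights)]


def ideal_compression_ratio(sparsity):
    """Dense parameter count divided by surviving parameter count.

    Idealized: ignores the cost of storing sparse indices.
    """
    return 1.0 / (1.0 - sparsity)


# Toy example (hypothetical values): prune half of ten weights.
weights = [0.9, -0.05, 0.3, 0.01, -0.7, 0.02, 0.15, -0.4, 0.08, 0.6]
pruned = magnitude_prune(weights, 0.5)
print(pruned)
print(ideal_compression_ratio(0.9))
```

At 90% sparsity only one weight in ten survives, which is where an idealized 10x reduction in stored parameters comes from. Real speedups depend on whether the hardware and runtime can actually exploit the sparsity pattern.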