henry

183 posts

@AriesTheCoder it goes hard with higher-powered languages. absolutely would not do this with python, typescript, golang etc.

Just learned it's literally impossible to paste images into Claude Code over SSH. How do you CLI people live like this??

@josefbender_ Make better content.

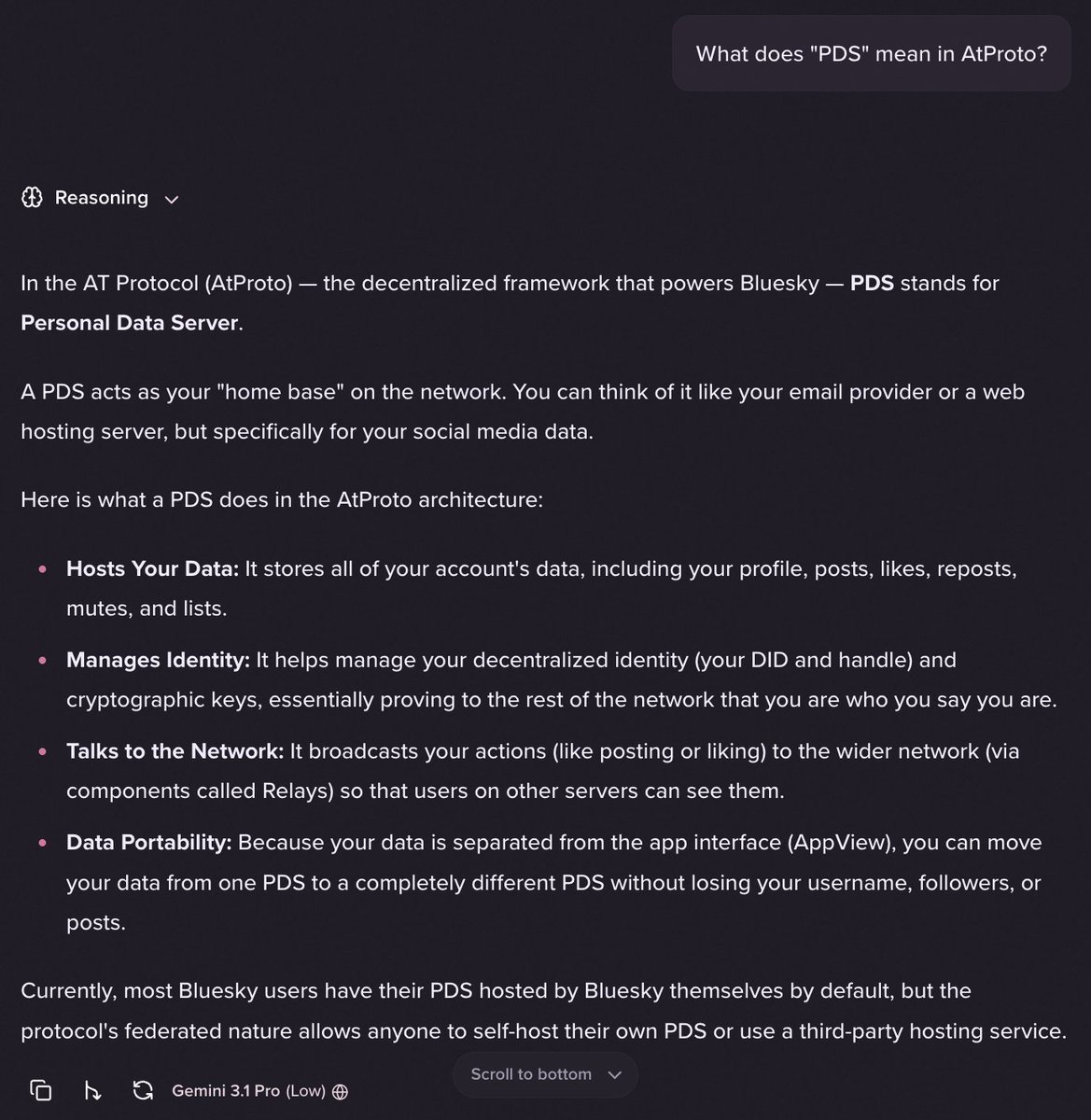

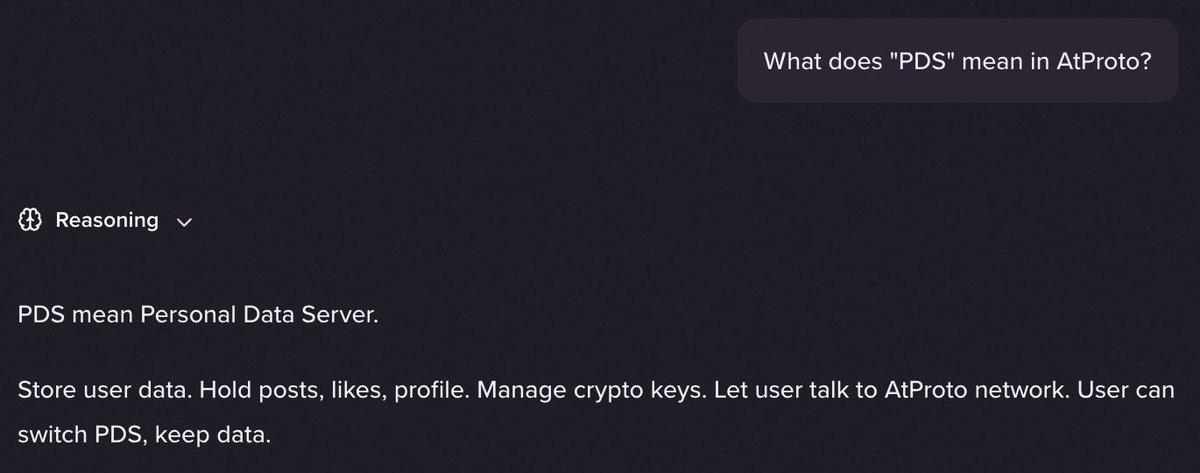

@badlogicgames The only use-case I like it for is chat. Not to save tokens, just to get a consistent "personality" and less fluff to read. I find that Gemini 3.1 Pro is much better to talk to as a caveman than by default. Quick example attached.

The more I replace plans with prototypes, the better the outputs. Who'd have thought that low-fidelity prototypes were better than walls of spec? Oh yeah, the entire industry for 20 years. Stop going against decades of knowledge because someone in SF shipped it as a 'mode'.