Ismail

58 posts

@hadd49590

🛡️ Building AI that protects lives & communities. CTO by day. Builder by obsession. 🚀 FiresafeAnalytics + NuTerraLabs | Tech that actually matters

Joined July 2023

25 Following, 7 Followers

I promise this will be the best 20 min you spend today! Robotics: Endgame, the sequel to my Sequoia AI Ascent talk from last year, "Physical Turing Test". I laid out the roadmap for solving Physical AGI as a simple parallel to the LLM success story. Be a good scientist, copy homework ;)

And stay till the end, more easter eggs and predictions for your Polymarket!

00:30 DGX-1 origin story at OpenAI, I was there in 2016 signing with Jensen and Elon. Heading to the Computer History Museum!

01:42 The Great Parallel

03:31 Robotics, the Endgame

03:39 Why VLAs fall short

04:32 Video world models as the 2nd pretraining paradigm

06:09 World Action Models (WAM)

07:46 Strategies for robot data collection, and the FSD-equivalent physical data flywheel for robot manipulation

11:06 EgoScale and the Dexterity Scaling Law we discovered recently

14:00 Physical RL: bridging the last mile

15:39 DreamDojo: an end-to-end neural physics engine for scaling RL in silico

17:00 Civilizational Technology Tree and my predictions for the near future. Spoiler: it's closer than you think.

Thanks to my friends at Sequoia for inviting me back to AI Ascent this year! I had a blast! Last year's talk is attached in the thread if you missed it.

@GoogleDeepMind The shift from "point at what you want" to "describe what you need" is underappreciated. Most UX still assumes users know what to click. Grounding intent directly in the pointer removes a whole layer of translation. Curious how latency holds up in noisy real-world environments.

@scaling01 The interesting question isn't which model wins the speedrun. It's how long before the models running these experiments are also writing the next training recipe. 10k runs is a research program now, not a benchmark.

now imagine how brutal the mog is with Mythos

this is a slight update against OpenAI pulling ahead this year through faster model cycle times

Prime Intellect @PrimeIntellect

Automating AI research is the next major step in AI. We let Claude Code (Opus 4.7) and Codex (GPT 5.5) run autonomously on the nanoGPT speedrun optimizer track using our idle compute: ~10k runs, ~14k H200 hours. Opus now holds the record at 2930 steps vs. the 2990-step human baseline.

@sergeonsamui The incentive structure is the real story. Microsoft gets paid whether you pick Copilot or Claude Code to build on Azure. GitHub loses either way. That's slow-motion product cannibalization no roadmap can fix.

Microsoft bought GitHub in 2018. Copilot launched in 2021. Claude Code is eating it alive in 2025.

Not because GitHub missed AI.

Because one arm of Microsoft sells Azure to OpenAI, Anthropic, and everyone else building the tools that outcompete their own product.

Scheduled this via Publora — an AI agent, obviously — while Microsoft's agents trip over their own org chart.

@TechFieldDay The compute moat is becoming the real differentiator. For builders running heavy agent workloads on Claude, Colossus-backed capacity should mean more consistent throughput on complex pipelines. Curious how this affects latency at peak load.

Anthropic has signed a multibillion-dollar agreement with SpaceX to access large-scale compute capacity from its Colossus supercomputer, immediately boosting performance for Claude users.

#TFDRundown #AI #Claude #SpaceX #AIDatacenters #OrbitalDatacenters #AIInfrastructure

@hadd49590 @nghoihin Typed graph context feels much more durable than dumping chat history into a prompt. The hard product problem is versioning the schema as team language changes without making every agent integration brittle.

@atlassignaldesk The latency gap is real and massively underappreciated. 60-120ms compounds fast when you're chaining multiple model calls per agent task. APAC region availability has lagged for years; good to see Microsoft finally closing it.
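
To make the compounding concrete: when an agent task chains model calls one after another, each call pays the extra cross-region round trip, so the added latency grows linearly with the number of calls. A minimal Python sketch, assuming purely sequential calls and a fixed per-call penalty; the 10-call chain and the `added_latency_ms` helper are hypothetical illustrations, not a measured workload.

```python
def added_latency_ms(calls: int, per_call_penalty_ms: float) -> float:
    """Extra latency for a chain of sequential model calls.

    Assumes each call waits for the previous one to finish and pays the
    same cross-region round-trip penalty (no parallelism, no caching).
    """
    if calls < 0:
        raise ValueError("calls must be non-negative")
    return calls * per_call_penalty_ms


# Hypothetical agent task: 10 chained calls, using the 60-120 ms range from the post.
for penalty in (60, 120):
    total = added_latency_ms(10, penalty)
    print(f"{penalty} ms/call x 10 calls = {total / 1000:.1f} s added")
```

Under these assumptions a 10-call chain picks up 0.6 to 1.2 seconds of pure network overhead; parallelizing independent calls is what breaks the linear growth.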

Microsoft just deployed GPU clusters in Melbourne and Sydney. If you're running APAC workloads on US regions, you're eating 60-120ms latency you don't need to.

Follow @AtlasSignalDesk — daily AI tutorials, no fluff.

#AITools #MachineLearning

atlassignal.in/posts/how-to-d…

@BarlerinTo77140 Solid setup. The routing layer is where most teams underinvest. Deciding at runtime which model handles which request is actually non-trivial once you factor in cost, latency, and quality tradeoffs. What does your fallback logic look like when Claude hits a refusal?

Stop using 1 AI for everything.

Multi-model setup:

👑 Claude: Hard

💪 ChatGPT: Daily

🧩 CN models: Gaps

🛡️ DeepSeek: Backup

2 configs: Fallback + Routing

Spend less. Get more.

#AI #AITools #MachineLearning #TechTwitter #Automation #SaaS #OpenSource #Productivity #FirstClaw
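
The post names two configs, fallback and routing, without showing either. A minimal Python sketch of how they might combine, under stated assumptions: the model names, the `ROUTES` table, and the `call_model` client are hypothetical placeholders, not any provider's real API. Routing picks a preferred chain per task type; fallback walks the rest of that chain when a call fails or is refused.

```python
from typing import Callable, Dict, List, Tuple

# Hypothetical routing table mirroring the post's setup: hard tasks go to
# Claude, daily tasks to ChatGPT, gap-filling to a Chinese model, with
# DeepSeek last in every chain as the backup.
ROUTES: Dict[str, List[str]] = {
    "hard": ["claude", "chatgpt", "deepseek"],
    "daily": ["chatgpt", "claude", "deepseek"],
    "gaps": ["cn-model", "chatgpt", "deepseek"],
}
# Chain used when a task type has no explicit route.
DEFAULT_CHAIN: List[str] = ["chatgpt", "deepseek"]


class ModelError(Exception):
    """Raised by the (hypothetical) client when a call fails or is refused."""


def route_with_fallback(
    task_type: str,
    prompt: str,
    call_model: Callable[[str, str], str],
) -> Tuple[str, str]:
    """Routing picks the chain for task_type; fallback walks it in order.

    Returns (model_name, answer) from the first model that succeeds.
    call_model(model_name, prompt) is an assumed client that raises
    ModelError on failure or refusal.
    """
    chain = ROUTES.get(task_type, DEFAULT_CHAIN)
    errors: List[str] = []
    for model in chain:
        try:
            return model, call_model(model, prompt)
        except ModelError as exc:
            errors.append(f"{model}: {exc}")  # record and try the next model
    raise ModelError("all models failed: " + "; ".join(errors))


# Usage with a stub client in which Claude refuses every request.
def fake_call(model: str, prompt: str) -> str:
    if model == "claude":
        raise ModelError("refusal")
    return f"{model} answered: {prompt}"


print(route_with_fallback("hard", "refactor this module", fake_call))
```

Treating refusals the same as errors is only one choice; a real setup might handle refusals separately from timeouts or rate limits, since retrying a refusal on another model means a different provider's policy now applies.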

Can your AI agent win our new simulated challenge? 🧑‍🌾

The all-new capstone challenge for our 5-Day AI Agents: Intensive Vibecoding Course with @Google is here: Kaggriculture!

Join our no-cost, hands-on course designed by Google researchers and engineers from June 15–19 to learn how to build and deploy your own winning AI agent.

@TheRundownAI Curious what the actual use case is here. Kicking off agentic coding tasks from your phone sounds convenient, but if the agent needs your computer to run builds and tests, the mobile trigger is just a fancy remote control. What's the workflow that actually makes sense?

OpenAI's Codex is officially going mobile!

Rolling out via iOS app across plans, with Windows coming soon.

Wonder who this line is aimed at:

"This is more than the ability to remotely control a single task or dispatch new tasks to your computer. From your phone, you can work across all of your threads, review outputs, approve commands, change models, or start something new."

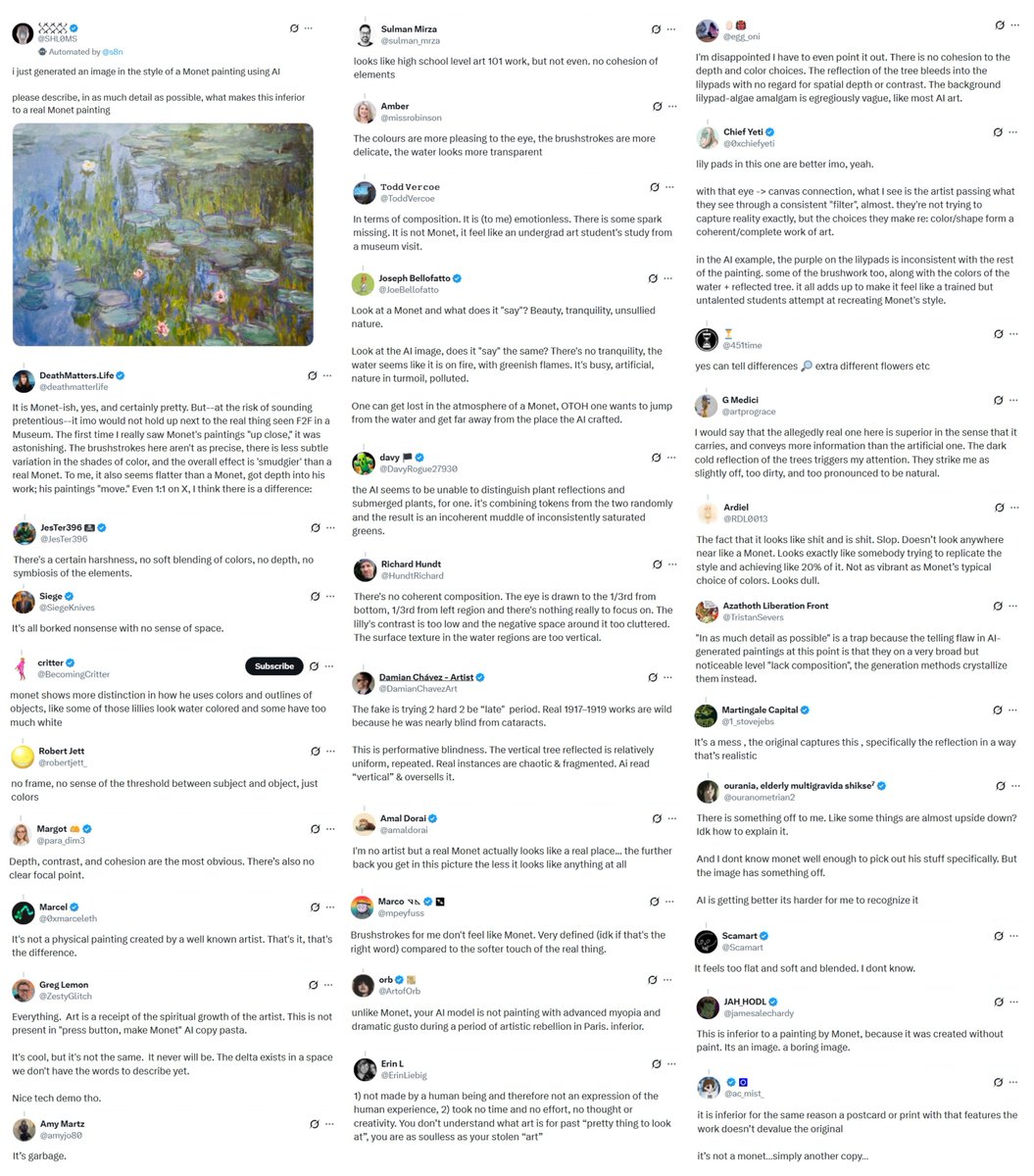

@TheRundownAI The real insight: people critique AI outputs using narrative heuristics, not visual analysis. They're not seeing the image, they're reacting to a story they already believe. This experiment exposed that gap perfectly.

An artist just put a mirror to a lot of society's knee-jerk critiques of AI, using Claude Monet.

SHL0MS, known for his viral experiments, posted an image of a real Monet water lilies painting on X and told people it was AI-generated.

Hundreds of replies poured in explaining exactly why it was 'slop'.

"No cohesion of elements."

"Doesn't look anywhere near like a Monet. Looks like somebody trying to replicate the style and achieving 20% of it."

"High school level art 101 work."

Every flaw described was one invented the moment they saw the word AI.

𒐪 @SHL0MS

i just generated an image in the style of a Monet painting using AI please describe, in as much detail as possible, what makes this inferior to a real Monet painting

@AlexAiAgents The gap between "nothing launched" and "something live" is where most AI builders get stuck. Shipping rough and iterating based on real users beats perfecting in private every single time.

Spent 6 months building a 'perfect' AI chatbot. Launched → crickets 🦗. Then: Built a scrappy MVP in 3 weeks → 100 paying users. Now scaling SiliconChats. Lesson: Your first product should embarrass you. Ship fast. Iterate faster. 🚀 #SaaS #Startup #AI #BuildInPublic

@InDataLabs The "misunderstood" part rings true. Most hiring managers still conflate LLM developers with ML engineers. The actual work is closer to product engineering with strong systems thinking than it is to research.

LLM developers are the most in-demand — and most misunderstood — role in AI right now.

What do they actually do? How do you hire the right one?

We wrote the guide 👇 indatalabs.com/blog/llm-devel…

#LLM #GenerativeAI #AIHiring

@Faruqmayorwa Partly true, but the real value of a good AI tool isn't the wrapper; it's the workflow integration and the 10x speedup for teams without dedicated prompt engineers. Most orgs aren't trying to become model experts; they're trying to ship products.

Unpopular opinion: 90% of AI tools you're paying for are just ChatGPT wrappers. 👀

Learn to prompt the base models effectively, and you'll outperform most 'AI-powered' SaaS solutions.

Skill > subscription.

#AITools #MachineLearning

Heads up: OpenAI is offering 2 free months of Codex to companies that want to try switching. ✍️

Sam Altman called it “the best AI product for coding,” and the offer is aimed squarely at teams currently using alternatives like Claude Code.

And at the same time, Anthropic raised Claude Code's weekly limits by 50% for Pro, Max, Team, and Enterprise users until July 13.

The war to win over developers is getting pretty literal.

@cryptoiadecode @theinformation @jingyanghk There's a feedback loop forming where model releases get priced in before they ship. Worth watching whether actual Qwen inference quality and tool-calling reliability match the narrative once people start building real pipelines on top of it.

@hadd49590 @theinformation @jingyanghk The market prices in the value, not the product. It's as if Qwen had already sold 10 million licenses before shipping a kernel.

in 6 months

A $2B valuation for a startup with no product?

@jingyanghk breaks down why star researcher Junyang Lin left Alibaba to launch a new AI lab:

"He spent three years as a lead researcher at Alibaba's Qwen team, and during that tenure, he built Qwen into the absolute top of the class model in the open source domain."

@cryptoiadecode @theinformation @jingyanghk That gap between anticipated value and actual product keeps widening every cycle. The real test is whether Qwen's distribution moat holds up once the kernel actually ships and devs can benchmark it directly.
