George Halal

33 posts

@halal_george

AI @Google | PhD Physics @Stanford

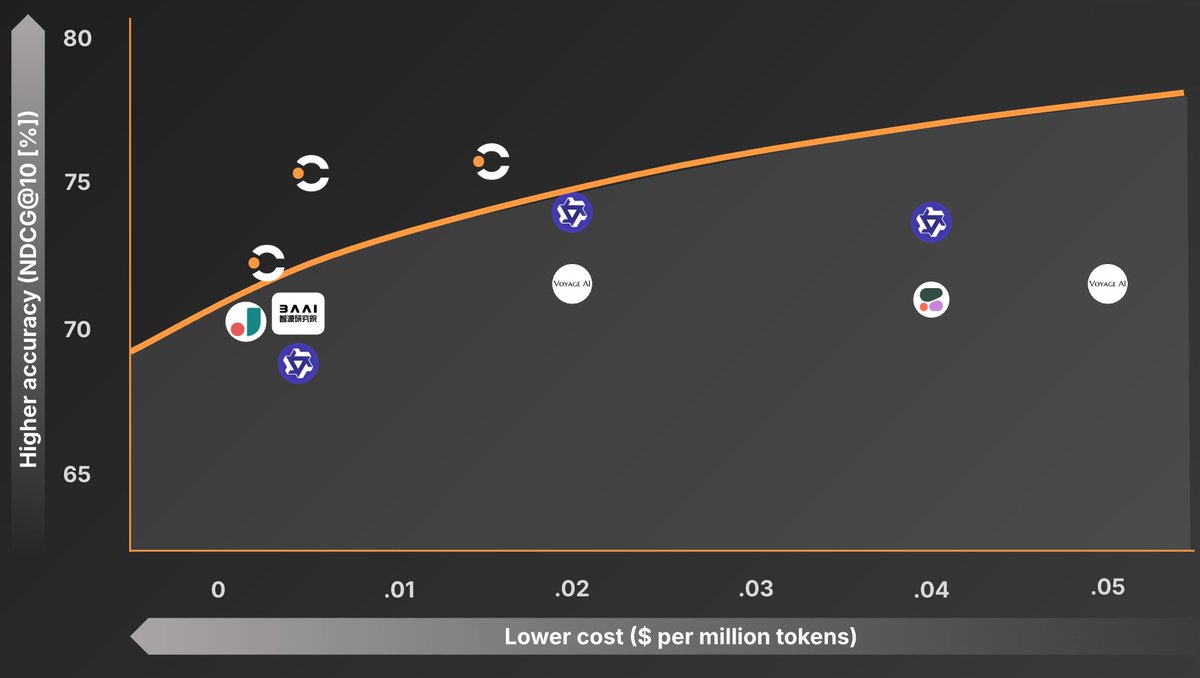

.@ContextualAI's new re-ranker ($0.05 per M tokens) is a bit better than voyage re-rank 2.5 (also $0.05 per M tokens), which is a pretty high bar IMO. ~2% better recall@10 in my eval. I'm also not exactly doing standard QA RAG, so it's likely a bit out of domain for both.

Excited to share that we trained rerankers at the cost/performance frontier and are open sourcing them! Contextual AI Reranker v2 🚀 Best performing, most efficient reranker 🤗 Open weights (1B, 2B, 6B) 🫡 Instruction-following (including recency-awareness) 🌐 Multilingual 1/4

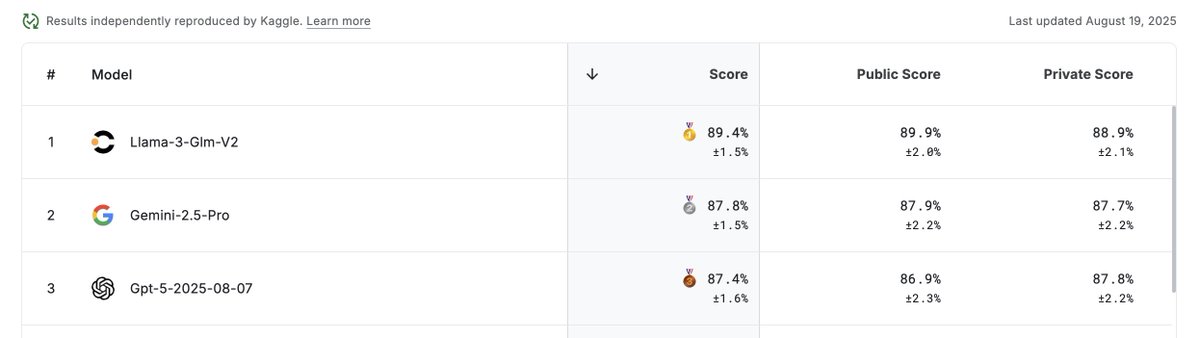

Tired of seeing O3 hallucinate? 😵‍💫 Today, I am excited to share how we built the least hallucinatory LLM in the 🌍 Our GLMv2, developed at @ContextualAI, just claimed 1st place 🥇 on the FACTS Grounded leaderboard by Google DeepMind, outperforming Gemini-2.5-pro, Claude 4, and O3 by 18%. 🤯 More details about our SFT and post-training recipe below 👇 1/N

We had an interesting meta-learning at @aiDotEngineer World’s Fair from some of the organizers of the MCP track: there has been such an explosion in MCP server creation that one of the emerging challenges in this space is selecting the right one for your task.

Excited to share 🤯 that our LMUnit models with @ContextualAI just claimed the top spots on RewardBench2 🥇 How did we manage to rank +5% higher than models like Gemini, Claude 4, and GPT4.1? More in the details below: 🧵 1/11