ham3798

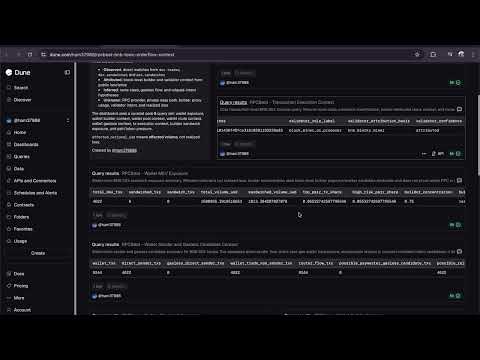

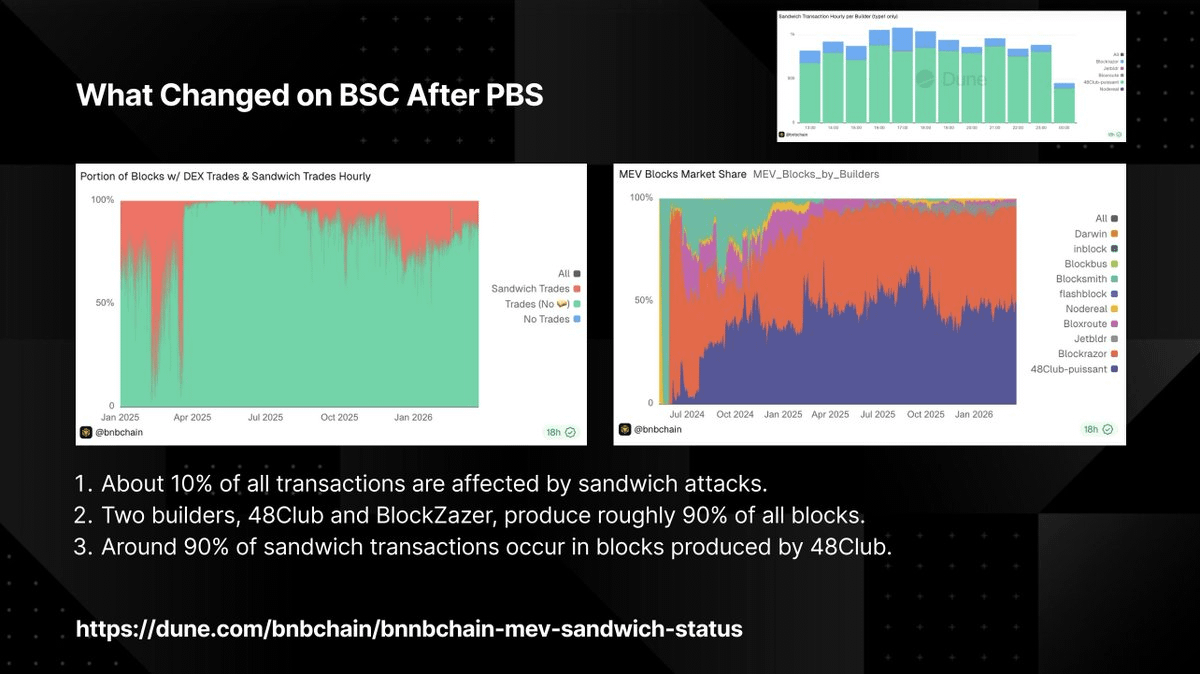

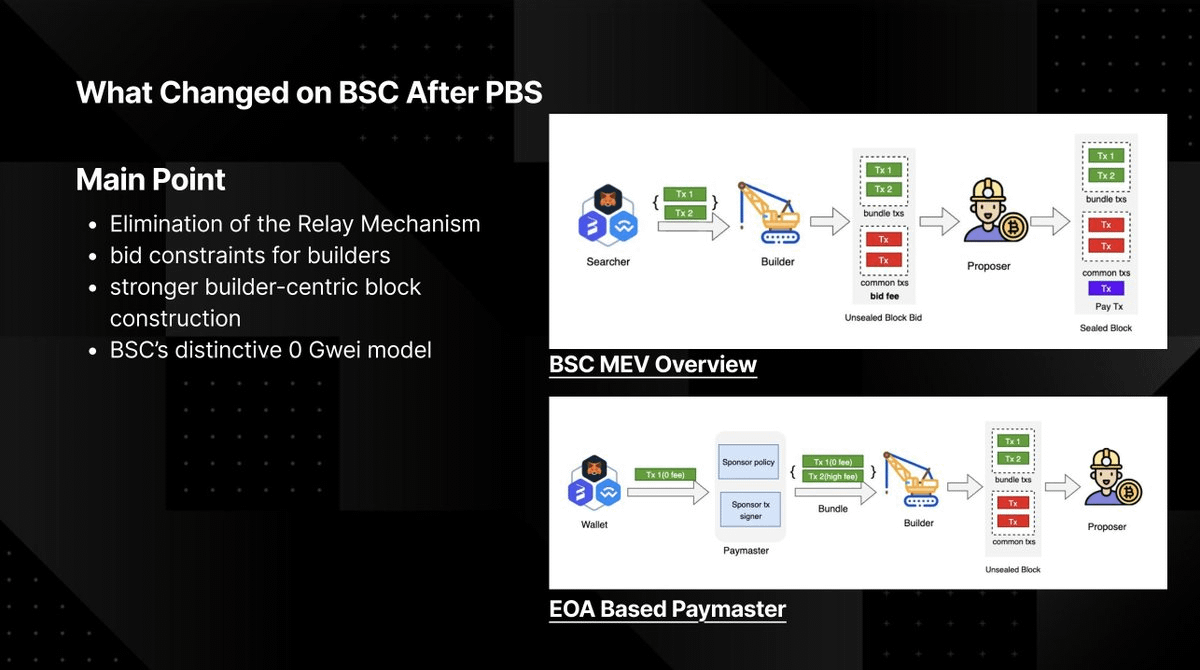

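BNB BUIDL Camp - Sprint Session Recap

Last Saturday, the BNB BUIDL Camp sprint session took place. For four hours, builders gathered to build, learn, and connect directly on BNB Chain.

• @Unibase_AI's session on the agent economy
• @NoditPlatform's infrastructure session on making on-chain data access lightweight
• A deep dive by BNB Chain ambassador @ham379888: "What happens when an AI agent sends a transaction on BSC"
• A hands-on building sprint with mentoring

Thanks to @postech_dao for joining as mentors, and to @FutureHouseKR for joining as community partner.

Next up is the online AMA on 4/9. The BNB Chain BD and Tech teams will take part directly to talk about the ecosystem, track strategy, and builder support. It is the last chance to ask questions directly before submitting to the BNB Chain track.

Sign up for the AMA 👇 luma.com/8f0bt0aq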

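1/🧵 after hack 6 - National Tax Service seed phrase leak 2

The National Tax Service had its coins drained twice.

$PRTG tokens were moved out of the wallet whose mnemonic had leaked, to 0xcecb6d3e02103e6ccd088bfedd5eae35e9386d20. They were then returned at 00:30 on the 28th. The person who carried out this operation(?) turned themselves in to the police.

About three hours later, around 03:00 on the 28th, the $PRTG tokens were moved out again, this time to 0x00B084456D81840FB133d83F03c392dc7Cf7777c. The attacker took 1M, 1M, and 2M $PRTG respectively from these wallets:

0x8efa52827c229c434fe9c915b2d99841aa3e114d
0xfb5a2208b9eccfacd4e06ac3f2981795b496c7e5
0xffb929c83865b4c4b527e98d358852492dea92a1

The person who turned themselves in simply imported the wallet using the leaked mnemonic and transferred the tokens to another address. In the second case, however, a 7702 signature was used to delegate authority to the contract 0xb771198a7568940d7811ff84bf6409fa80a5e4fd.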
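To make the 7702 delegation above concrete, here is a minimal sketch of how one might check whether an account has delegated its code. It assumes the chain follows EIP-7702, under which a delegated account's code is the 3-byte prefix `0xef0100` followed by the 20-byte delegate address. The function name `parse_7702_delegate` is hypothetical, and the sample code string is constructed by hand for illustration, not fetched from any node.

```python
from typing import Optional

# EIP-7702 delegation designator prefix: 0xef0100 followed by a 20-byte address.
DELEGATION_PREFIX = bytes.fromhex("ef0100")


def parse_7702_delegate(code_hex: str) -> Optional[str]:
    """Return the delegate address if code_hex is a 7702 delegation designator, else None."""
    stripped = code_hex[2:] if code_hex.startswith("0x") else code_hex
    raw = bytes.fromhex(stripped)
    # A designator is exactly 23 bytes: 3-byte prefix + 20-byte address.
    if len(raw) == 23 and raw[:3] == DELEGATION_PREFIX:
        return "0x" + raw[3:].hex()
    return None


# Hand-built example of what an eth_getCode result could look like for a wallet
# that delegated to the contract named in the thread (illustrative only).
sample_code = "0xef0100" + "b771198a7568940d7811ff84bf6409fa80a5e4fd"
print(parse_7702_delegate(sample_code))
```

An account with empty code (`0x`) or ordinary contract bytecode returns `None`, so the same check separates plain wallets and regular contracts from delegated ones.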

intern final boss just connected with me on linkedin

"AI becomes the government" is dystopian: it leads to slop when AI is weak, and is doom-maximizing once AI becomes strong. But AI used well can be empowering, and push the frontier of democratic / decentralized modes of governance.

The core problem with democratic / decentralized modes of governance (including DAOs on ethereum) is limits to human attention: there are many thousands of decisions to make, involving many domains of expertise, and most people don't have the time or skill to be experts in even one, let alone all of them. The usual solution, delegation, is disempowering: it leads to a small group of delegates controlling decision-making while their supporters, after they hit the "delegate" button, have no influence at all.

So what can we do? We use personal LLMs to solve the attention problem! Here are a few ideas:

## Personal governance agents

If a governance mechanism depends on you to make a large number of decisions, a personal agent can perform all the necessary votes for you, based on preferences that it infers from your personal writing, conversation history, direct statements, etc. If the agent is (i) unsure how you would vote on an issue, and (ii) convinced the issue is important, then it should ask you directly, and give you all relevant context.

## Public conversation agents

Making good decisions often cannot come from a linear process of taking people's views that are based only on their own information, and averaging them (even quadratically). There is a need for processes that aggregate many people's information, and then give each person (or their LLM) a chance to respond *based on that*.
This includes:

* Inferring and summarizing your own views and converting them into a format that can be shared publicly (and does not expose your private info)
* Summarizing commonalities between people's inputs (expressed as words), similar to the various LLM+pol.is ideas

## Suggestion markets

If a governance mechanism values "high-quality inputs" of any type (this could be proposals, or it could even be arguments), then you can have a prediction market, where anyone can submit an input, AIs can bet on a token representing that input, and if the mechanism "accepts" the input (either accepting the proposal, or accepting it as a "unit" of conversation that it then passes along to its participant), it pays out $X to the holders of the token.

Note that this is basically the same as firefly.social/post/x/2017956…

## Decentralized governance with private information

One of the biggest weaknesses of highly decentralized / democratic governance is that it does not work well when important decisions need to be made with secret information. Common situations: (i) the org engaging in adversarial conflicts or negotiations (ii) internal dispute resolution (iii) compensation / funding decisions. Typically, orgs solve this by appointing individuals who have great power to take on those tasks.

But with multi-party computation (currently I've seen this done with TEEs; I would love to see at least the two-party case solved with garbled circuits vitalik.eth.limo/general/2020/0… so we can get pure-cryptographic security guarantees for it), we could actually take many people's inputs into account to deal with these situations, without compromising privacy.

Basically: you submit your personal LLM into a black box, the LLM sees private info, it makes a judgement based on that, and it outputs only that judgement. You don't see the private info, and no one else sees the contents of your personal LLM.
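As a rough illustration of the suggestion market idea described earlier, the sketch below tracks token holdings per submitted input and pays a fixed amount to holders when the mechanism accepts that input. The post only says the mechanism "pays out $X to the holders of the token"; splitting $X pro rata by token balance is an assumption made here, and the class and method names (`SuggestionMarket`, `buy`, `settle`) are hypothetical.

```python
from collections import defaultdict
from typing import Dict


class SuggestionMarket:
    """Toy model: one token per submitted input, fixed payout on acceptance."""

    def __init__(self, payout_per_accept: float) -> None:
        self.payout = payout_per_accept  # the "$X" from the post
        # input_id -> holder -> token balance
        self.holdings: Dict[str, Dict[str, float]] = defaultdict(lambda: defaultdict(float))

    def buy(self, input_id: str, holder: str, tokens: float) -> None:
        """Record a bet: holder acquires tokens representing input_id."""
        if tokens <= 0:
            raise ValueError("tokens must be positive")
        self.holdings[input_id][holder] += tokens

    def settle(self, input_id: str, accepted: bool) -> Dict[str, float]:
        """Return each holder's payout; empty if the input was rejected or unheld."""
        holders = self.holdings.get(input_id, {})
        total = sum(holders.values())
        if not accepted or total == 0:
            return {}
        # Assumption: the payout is split pro rata by token balance.
        return {h: self.payout * t / total for h, t in holders.items()}


market = SuggestionMarket(payout_per_accept=100.0)
market.buy("proposal-1", "agent-a", 3)
market.buy("proposal-1", "agent-b", 1)
print(market.settle("proposal-1", accepted=True))
```

A real market would also need token pricing and a way to charge for bets; this sketch covers only the settlement step.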
## The importance of privacy

All of these approaches involve each participant making use of much more information about themselves, and potentially submitting much larger-sized inputs. Hence, it becomes all the more important to protect privacy. There are two kinds of privacy that matter:

* Anonymity of the participant: this can be accomplished with ZK. In general, I think all governance tools should come with ZK built in
* Privacy of the contents: this has two parts. First, the personal LLM should do what it can to avoid divulging private info about you that it does not need to divulge. Second, when you have computation that combines multiple LLMs or multiple people's info, you need multi-party techniques to compute it privately.

Both are important.
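Returning to the personal governance agent rule from earlier in the post (ask the owner when the agent is both unsure and convinced the issue is important), a minimal sketch of that decision could look like the following. The `Assessment` fields, the numeric thresholds, and the choice to cast the best-guess vote in every other case are assumptions for illustration; the post does not specify them.

```python
from dataclasses import dataclass


@dataclass
class Assessment:
    predicted_vote: str  # the agent's best guess at how the owner would vote
    confidence: float    # 0..1, how sure the agent is about that guess
    importance: float    # 0..1, how important the agent judges the issue


def decide(a: Assessment, min_confidence: float = 0.8, min_importance: float = 0.5) -> str:
    """Escalate to the owner only when the agent is unsure AND the issue matters."""
    unsure = a.confidence < min_confidence
    important = a.importance >= min_importance
    if unsure and important:
        return "ask_owner"
    # Assumption: in all other cases the agent casts its best-guess vote.
    return f"vote:{a.predicted_vote}"


print(decide(Assessment("yes", confidence=0.95, importance=0.9)))
print(decide(Assessment("no", confidence=0.40, importance=0.9)))
print(decide(Assessment("no", confidence=0.40, importance=0.1)))
```

Abstaining instead of voting on low-importance, low-confidence issues would be an equally reasonable variant.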

dude idk but the intersection of ai and crypto is really inevitable like on multiple dimensions