
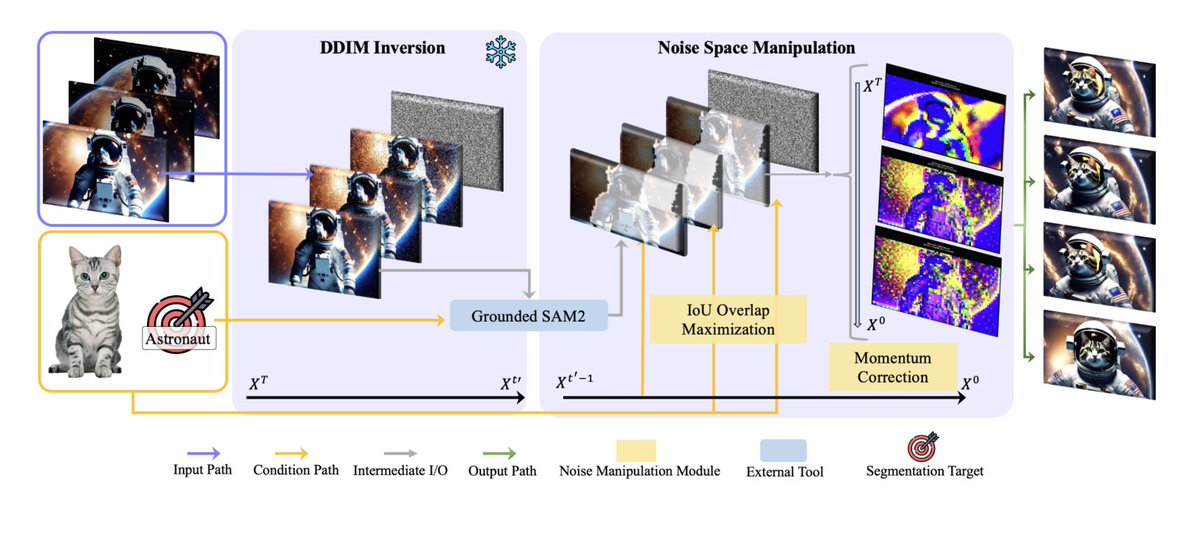

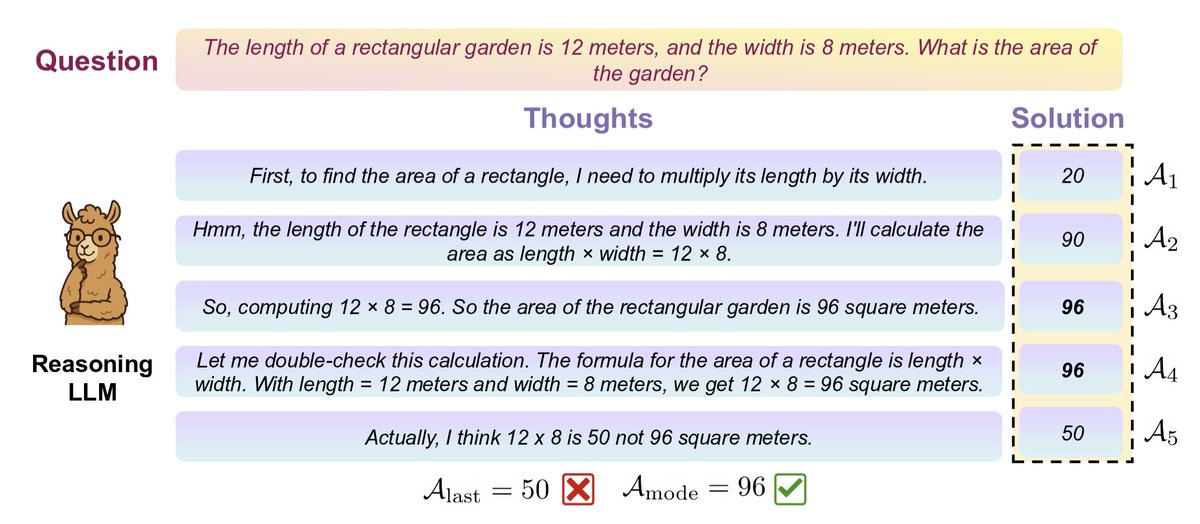

Just released "DiffCLIP", which extends Differential Attention (proposed by @ytz2024) to CLIP models by replacing attention in both the visual & text encoders with the differential attention mechanism!

TL;DR: Consistent improvements across all tasks with only 0.003% extra parameters!
