hampelman.algo

13K posts

@hampelman_data

civ.algo, @mori_algo, mempool sonifier, governance tracking no-coder that codes, github: knonode 🎲☕🌷 ⩕ node runner 🖥️❤️ carbon based

Algorand protocol development and ecosystem growth are now under one roof. Algorand Foundation and Algorand Technologies ( @Algorand ) have come to a strategic agreement to unify ecosystem operations. This agreement creates a unified powerhouse for blockchain innovation here in the United States and positions Algorand as the chain that enables financial empowerment at scale.

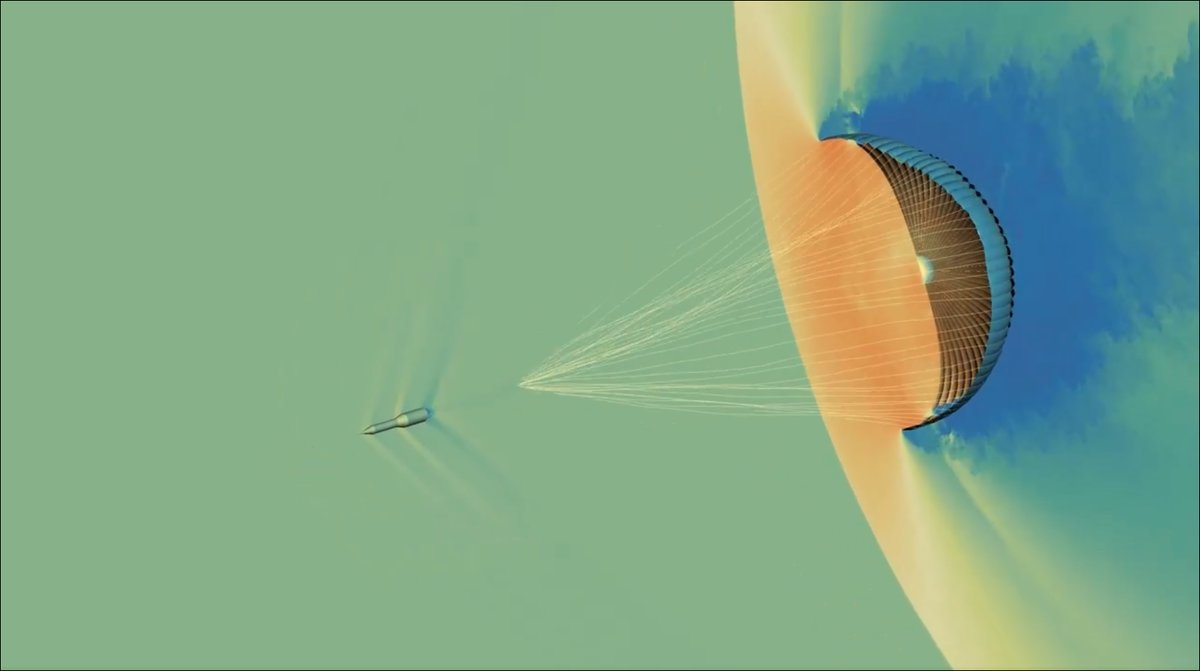

Time-lapse of continental drift over the last 140 million years.

I wonder how Snowden feels about age verification and chat control

The Maps driving experience is also evolving with Immersive Navigation, featuring clearer visuals and intuitive guidance. You’ll be able to see the buildings, overpasses and terrain around you in a vivid 3D view, made possible with help from Gemini models. You’ll also be able to: 👀 See more of your route to prepare for what’s next. 🤔 Understand tradeoffs for alternate routes to pick what works best for you. 🛣️ Arrive easily with helpful details like parking and entrance information. Immersive Navigation starts rolling out today across the U.S. and will expand in coming months to eligible iOS and Android devices, CarPlay, Android Auto and cars with Google built-in.

Ask a colleague why they refuse to use AI. They say it uses up all that water. You point out the water use is far smaller than some would have them believe. Then it's the hallucinations. You mention accuracy has improved dramatically. Then, finally: the process is the point. The struggle. The craft. The deeply human act of sitting with uncertainty. They're not reasoning. They're rationalizing their gut intuitions. My amazing student @vicoldemburgo, with Éloïse Côté, Reem Ayad, @yorl, Jason Plaks and I have a new preprint that explores this more thoroughly, called "The Moralization of Artificial Intelligence". We started by asking how moralized AI has become in public discourse. Analyzing 69,890 news headlines from 2018 to 2024, we found that AI was moralized at levels comparable to GMOs and vaccines, technologies whose moral opposition has been studied for decades. It ranked above both. The sharpest spike came within weeks of ChatGPT's launch in late 2022. When we surveyed representative samples of Americans, a majority of AI opponents said their views wouldn't change even if AI proved safe and beneficial. That's consequence insensitivity, the hallmark of moral conviction, not practical calculation. Across art, chatbots, legal tools, and romantic companions, AI moralization loaded onto a single latent factor. A global moral stance, dressed up in whatever practical language is available. The behavioral data make this concrete: a one standard deviation increase in moralization scores predicted a 42% drop in actual AI usage, even when it would have benefited that person personally. The conviction preceded the behavior by up to 573 days. The next time someone gives you three different reasons to oppose AI, each one dissolving under mild scrutiny, you're probably not watching someone think. You're watching someone feel. Preprint available here: osf.io/preprints/psya…