heave

Told a girl on a dating app I’m 5’7 and she unmatched me. She was 4’11

🦔A researcher invented a fake eye condition called bixonimania, uploaded two obviously fraudulent papers about it to an academic server, and watched major AI systems present it as real medicine within weeks. The fake papers thanked Starfleet Academy, cited funding from the Professor Sideshow Bob Foundation and the University of Fellowship of the Ring, and stated mid-paper that the entire thing was made up. Google's Gemini told users it was caused by blue light. Perplexity cited its prevalence at one in 90,000 people. ChatGPT advised users whether their symptoms matched. The fake research was then cited in a peer-reviewed journal that only retracted it after Nature contacted the publisher.

My Take

The researcher made the papers as obviously fake as possible on purpose. The AI systems didn't catch it. Neither did the human researchers who cited it in real journals, which means people are feeding AI-generated references into their work without reading what they're actually citing. I've covered the FDA using AI for drug review, the NYC hospital CEO ready to replace radiologists, and ChatGPT Health launching this year. All of that is happening in the same environment where a condition funded by a Simpsons character and endorsed by the crew of the Enterprise was being presented as emerging medical consensus. The people making these deployment decisions seem to believe the pipeline from research to AI to patient is more supervised than it actually is. This experiment suggests it isn't supervised much at all.

Hedgie🤗 nature.com/articles/d4158…

Which language do you think is best?😍😍😍

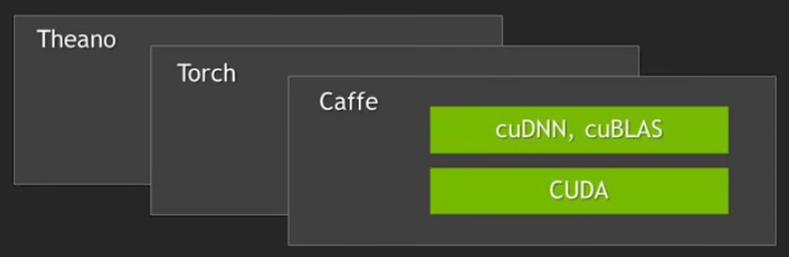

🙌 Andrej Karpathy’s lab has received the first DGX Station GB300 -- a Dell Pro Max with GB300. 💚 We can't wait to see what you’ll create @karpathy! 🔗 blogs.nvidia.com/blog/gtc-2026-…

@DellTech