HKUST NLP

31 posts

HKUST NLP

@hkustNLP

HKUST Natural Language Processing Research #NLProc

🚀 We release MMLongBench: Benchmark for evaluating long-context VLMs. 📊 13,331 examples across 5 tasks: – Visual RAG – Many-shot ICL – Needle-in-a-haystack – VL Summarization – Long-document VQA 📏 Lengths: 8 / 16 / 32 / 64 / 128K 🔍 Benchmarking both thoroughly & effectively!

Updates to ChatGPT: You can now choose between “Auto”, “Fast”, and “Thinking” for GPT-5. Most users will want Auto, but the additional control will be useful for some people. Rate limits are now 3,000 messages/week with GPT-5 Thinking, and then extra capacity on GPT-5 Thinking mini after that limit. Context limit for GPT-5 Thinking is 196k tokens. We may have to update rate limits over time depending on usage. 4o is back in the model picker for all paid users by default. If we ever do deprecate it, we will give plenty of notice. Paid users also now have a “Show additional models” toggle in ChatGPT web settings which will add models like o3, 4.1, and GPT-5 Thinking mini. 4.5 is only available to Pro users—it costs a lot of GPUs. We are working on an update to GPT-5’s personality which should feel warmer than the current personality but not as annoying (to most users) as GPT-4o. However, one learning for us from the past few days is we really just need to get to a world with more per-user customization of model personality.

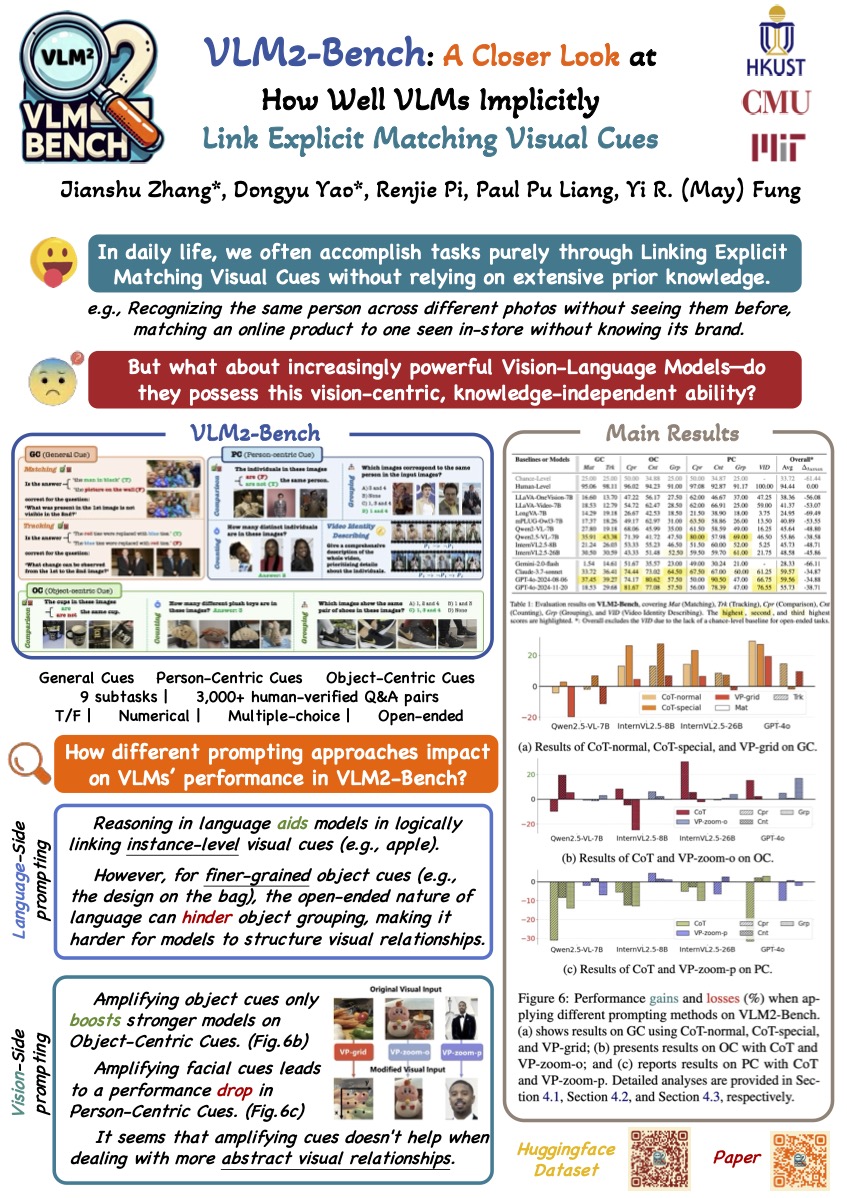

[1/n] "𝘔𝘢𝘵𝘤𝘩𝘪𝘯𝘨 𝘤𝘶𝘦𝘴 𝘧𝘰𝘳 𝘪𝘥𝘦𝘯𝘵𝘪𝘤𝘢𝘭 𝘰𝘣𝘫𝘦𝘤𝘵𝘴, 𝘥𝘪𝘴𝘵𝘪𝘯𝘤𝘵 𝘢𝘵𝘵𝘳𝘪𝘣𝘶𝘵𝘦𝘴 𝘧𝘰𝘳 𝘶𝘯𝘪𝘲𝘶𝘦 𝘰𝘯𝘦𝘴." Such 𝙘𝙧𝙤𝙨𝙨-𝙘𝙤𝙣𝙩𝙚𝙭𝙩 𝙫𝙞𝙨𝙪𝙖𝙡 𝙧𝙚𝙖𝙨𝙤𝙣𝙞𝙣𝙜 is extremely simple and straightforward for the human cognitive process, but 𝗾𝘂𝗶𝘁𝗲 𝗰𝗵𝗮𝗹𝗹𝗲𝗻𝗴𝗶𝗻𝗴 𝗳𝗼𝗿 𝗰𝘂𝗿𝗿𝗲𝗻𝘁 𝗹𝗮𝗿𝗴𝗲 𝘃𝗶𝘀𝗶𝗼𝗻 𝗹𝗮𝗻𝗴𝘂𝗮𝗴𝗲 𝗺𝗼𝗱𝗲𝗹𝘀 (𝗟𝗩𝗟𝗠𝘀), especially across multiple images and videos ‼️ 𝙒𝙝𝙮, and 𝙝𝙤𝙬 𝙘𝙖𝙣 𝙬𝙚 𝙞𝙢𝙥𝙧𝙤𝙫𝙚 upon this major shortcoming in future research? 🔍 🔥🚀 Check out our latest release, “𝗩𝗟𝗠𝟮 -𝗕𝗲𝗻𝗰𝗵: 𝗔 𝗖𝗹𝗼𝘀𝗲𝗿 𝗟𝗼𝗼𝗸 𝗮𝘁 𝗛𝗼𝘄 𝗪𝗲𝗹𝗹 𝗩𝗟𝗠𝘀 𝗜𝗺𝗽𝗹𝗶𝗰𝗶𝘁𝗹𝘆 𝗟𝗶𝗻𝗸 𝗘𝘅𝗽𝗹𝗶𝗰𝗶𝘁 𝗠𝗮𝘁𝗰𝗵𝗶𝗻𝗴 𝗩𝗶𝘀𝘂𝗮𝗹 𝗖𝘂𝗲𝘀”! 🚀🔥 🔗 Project page: vlm2-bench.github.io 📑 Paper: arxiv.org/abs/2502.12084 💻 Github: github.com/vlm2-bench/VLM… 🤗 Huggingface: huggingface.co/datasets/Sterz… --- Work led by our amazing team of students Jianshu, Dongyu, and Renjie at The Hong Kong University of Science and Technology. Find us at the poster booth ✨Wed 7/30 11am Hall 4/5 ✨

🚀 Data to pre-train LLMs on are reaching critical bottleneck. 𝘿𝙤𝙚𝙨 𝙢𝙤𝙙𝙚𝙡-𝙜𝙚𝙣𝙚𝙧𝙖𝙩𝙚𝙙 𝙨𝙮𝙣𝙩𝙝𝙚𝙩𝙞𝙘 𝙙𝙖𝙩𝙖 𝙬𝙤𝙧𝙠 𝙨𝙞𝙢𝙞𝙡𝙖𝙧𝙡𝙮 𝙬𝙚𝙡𝙡 𝙛𝙤𝙧 𝙨𝙘𝙖𝙡𝙞𝙣𝙜 𝙥𝙧𝙚-𝙩𝙧𝙖𝙞𝙣𝙞𝙣𝙜 𝙛𝙪𝙧𝙩𝙝𝙚𝙧? Let's dive into the "𝗦𝗰𝗮𝗹𝗶𝗻𝗴 𝗟𝗮𝘄𝘀 𝗼𝗳 𝗦𝘆𝗻𝘁𝗵𝗲𝘁𝗶𝗰 𝗗𝗮𝘁𝗮 𝗳𝗼𝗿 𝗟𝗮𝗻𝗴𝘂𝗮𝗴𝗲 𝗠𝗼𝗱𝗲𝗹𝘀" together! Several key findings: ⭐️ 𝗚𝗿𝗮𝗽𝗵-𝗯𝗮𝘀𝗲𝗱 𝗲𝘅𝘁𝗿𝗮𝗰𝘁𝗶𝗼𝗻 & 𝗿𝗲𝗰𝗼𝗺𝗯𝗶𝗻𝗮𝘁𝗶𝗼𝗻 of concepts across docs transforms pre-training corpora into 𝘥𝘪𝘷𝘦𝘳𝘴𝘦, 𝘩𝘪𝘨𝘩-𝘲𝘶𝘢𝘭𝘪𝘵𝘺 𝘴𝘺𝘯𝘵𝘩𝘦𝘵𝘪𝘤 𝘥𝘢𝘵𝘢𝘴𝘦𝘵𝘴; ⭐️𝗣𝗿𝗲𝘁𝗿𝗮𝗶𝗻𝗶𝗻𝗴 𝘄𝗶𝘁𝗵 𝘀𝘆𝗻𝘁𝗵𝗲𝘀𝗶𝘇𝗲𝗱 𝗱𝗮𝘁𝗮 reliably follows 𝘳𝘦𝘤𝘵𝘪𝘧𝘪𝘦𝘥 𝘴𝘤𝘢𝘭𝘪𝘯𝘨 𝘭𝘢𝘸 across various model sizes; ⭐️ 𝗟𝗮𝗿𝗴𝗲𝗿 𝗺𝗼𝗱𝗲𝗹𝘀 approach optimal performance with 𝙛𝙚𝙬𝙚𝙧 𝙩𝙧𝙖𝙞𝙣𝙞𝙣𝙜 𝙩𝙤𝙠𝙚𝙣𝙨; ⭐️ 𝘿𝙞𝙢𝙞𝙣𝙞𝙨𝙝𝙞𝙣𝙜 𝙧𝙚𝙩𝙪𝙧𝙣𝙨 start to kick in near 300B tokens. 👇Check preprint for further details~ 🔗 arxiv.org/html/2503.1955…

🔍 Are Verifiers Trustworthy in RLVR? Our paper, Pitfalls of Rule- and Model-based Verifiers, exposes the critical flaws in reinforcement learning verification for mathematical reasoning. 🔑 Key findings: 1️⃣ Rule-based verifiers miss correct answers, especially when presented in different formats. 2️⃣ Model-based verifiers are susceptible to reward hacking, undermining RL outcomes. 3️⃣ A probe study reveals that most model-based verifiers, particularly generative ones (like those using chain-of-thought reasoning), are highly vulnerable to adversarial attacks. 📄Paper: arxiv.org/abs/2505.22203 💻Code & Model & Dataset: github.com/hkust-nlp/RL-V… Special thanks to my amazing collaborators @AndrewZeng17, Xingshan Zeng, and Qi Zhu, and to our advisor @junxian_he! 🥰

Time flies. My visiting at UIUC is coming to an end. I’m sincerely grateful to Prof. Heng Ji @hengjinlp for the invaluable opportunity to join such a warm, collaborative, and world-leading research group. I’ve learned so much from Prof. Heng and lots of amazing students here @qiancheng1231 @xiusi_chen @emrecanacikgoz @BowenJin13 @zhenhailongW @Yuji_Zhang_NLP @Glaciohound. This experience will always be a defining chapter of my Ph.D. life, filled with friendships, collaborations, and unforgettable moments. Looking back, we truly did something together for the world — from SMART to ToolRL, OTC, RM-R1, ModelingAgent, and DecisionFlow, with even more impactful work on the way. I’ll always be cheering for BlenderLab and looking forward to what’s next!