Xingyue Huang

68 posts

@hxyscott

3rd-year DPhil in Computer Science, University of Oxford. I like graph neural networks, knowledge graphs, and graph representation learning in general.

Sir, we built this: an RL environment for learning reasoning at scale. GitHub: github.com/camel-ai/loong HF dataset: huggingface.co/datasets/camel… We extracted seed datasets from sources such as textbooks and code libraries like SymPy, NetworkX, Gurobi (mathematical programming), RDKit (chemistry), Prolog (logic), and so on, gathering 8,729 questions spanning 12 diverse domains. More data can then be synthesized by generating new questions from few-shot examples and by writing code with the given libraries. This programmatic approach gives the synthetic data strong verification signals. The data is challenging even for SoTA LLMs, which makes it a good environment for learning long CoT reasoning: we can generate countless data points and let the agent practice on them.
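A minimal sketch of why code-generated questions carry strong verification signals, using only the Python standard library rather than SymPy or the other libraries named above. The linear-equation template and the functions `make_question`, `verify`, and `synthesize` are hypothetical illustrations, not Loong's actual API. The point is that when code computes the reference answer, checking an agent's answer reduces to an exact comparison that can serve as a reward.

```python
import random
from fractions import Fraction

# Hypothetical stand-in for a library-backed seed: the answer is computed by
# code, not written by hand, so it can be checked exactly.


def make_question(rng: random.Random) -> tuple[str, Fraction]:
    """Generate one linear-equation question together with its exact answer."""
    a, b, c = rng.randint(1, 9), rng.randint(1, 9), rng.randint(1, 9)
    question = f"Solve for x: {a}x + {b} = {c}"
    answer = Fraction(c - b, a)  # x = (c - b) / a, computed exactly
    return question, answer


def verify(candidate: str, answer: Fraction) -> bool:
    """Binary verification signal: does the agent's final answer match exactly?"""
    try:
        return Fraction(candidate.strip()) == answer
    except (ValueError, ZeroDivisionError):  # unparsable or "n/0" answers fail
        return False


def synthesize(n: int, seed: int = 0) -> list[tuple[str, Fraction]]:
    """Produce n (question, answer) pairs reproducibly from a seed."""
    rng = random.Random(seed)
    return [make_question(rng) for _ in range(n)]


if __name__ == "__main__":
    for question, answer in synthesize(3):
        print(question, "| reference:", answer, "| verified:", verify(str(answer), answer))
```

In an RL loop, `verify` would supply a binary reward. In a real pipeline, library-backed seeds (for example, a SymPy solver or a NetworkX graph query) would presumably replace this toy template.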

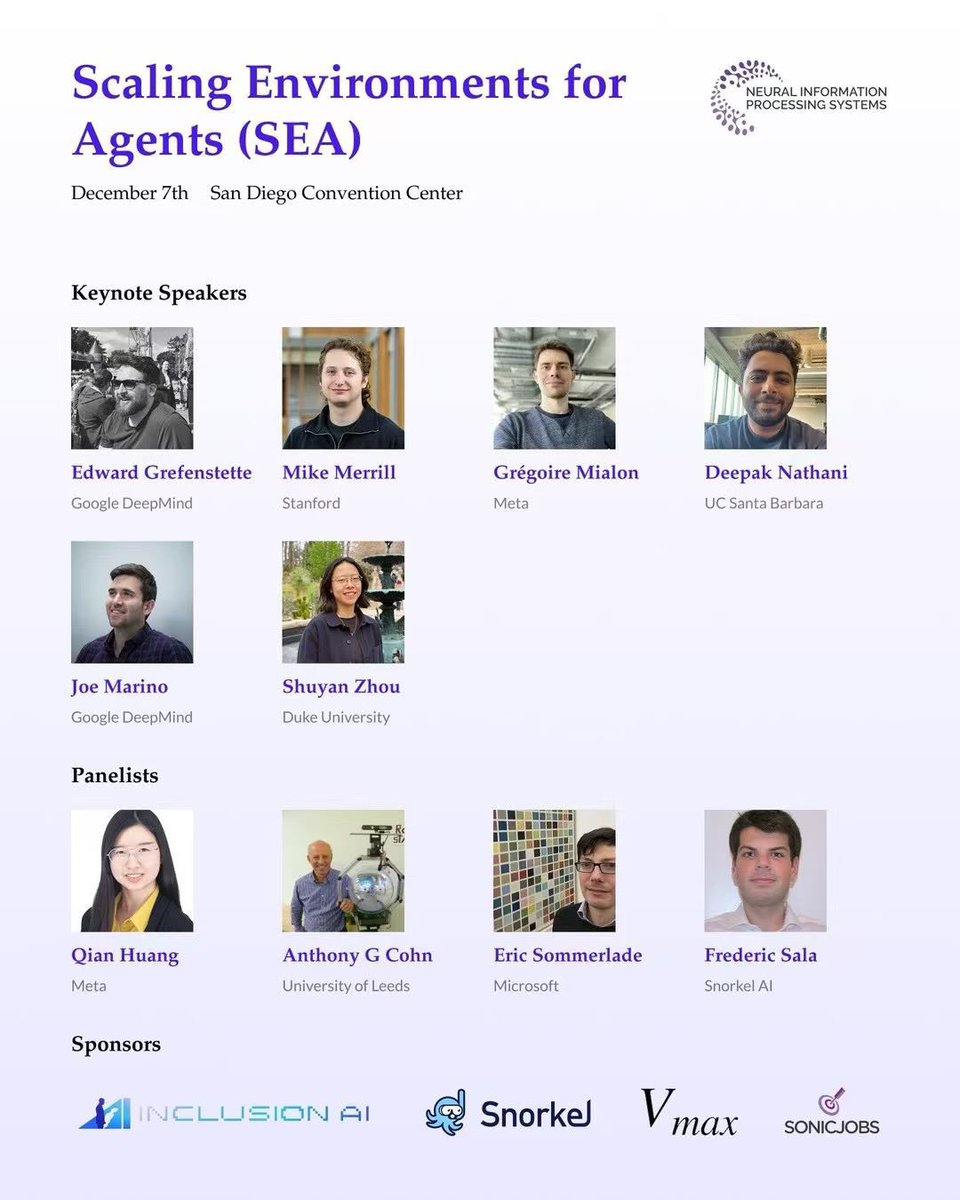

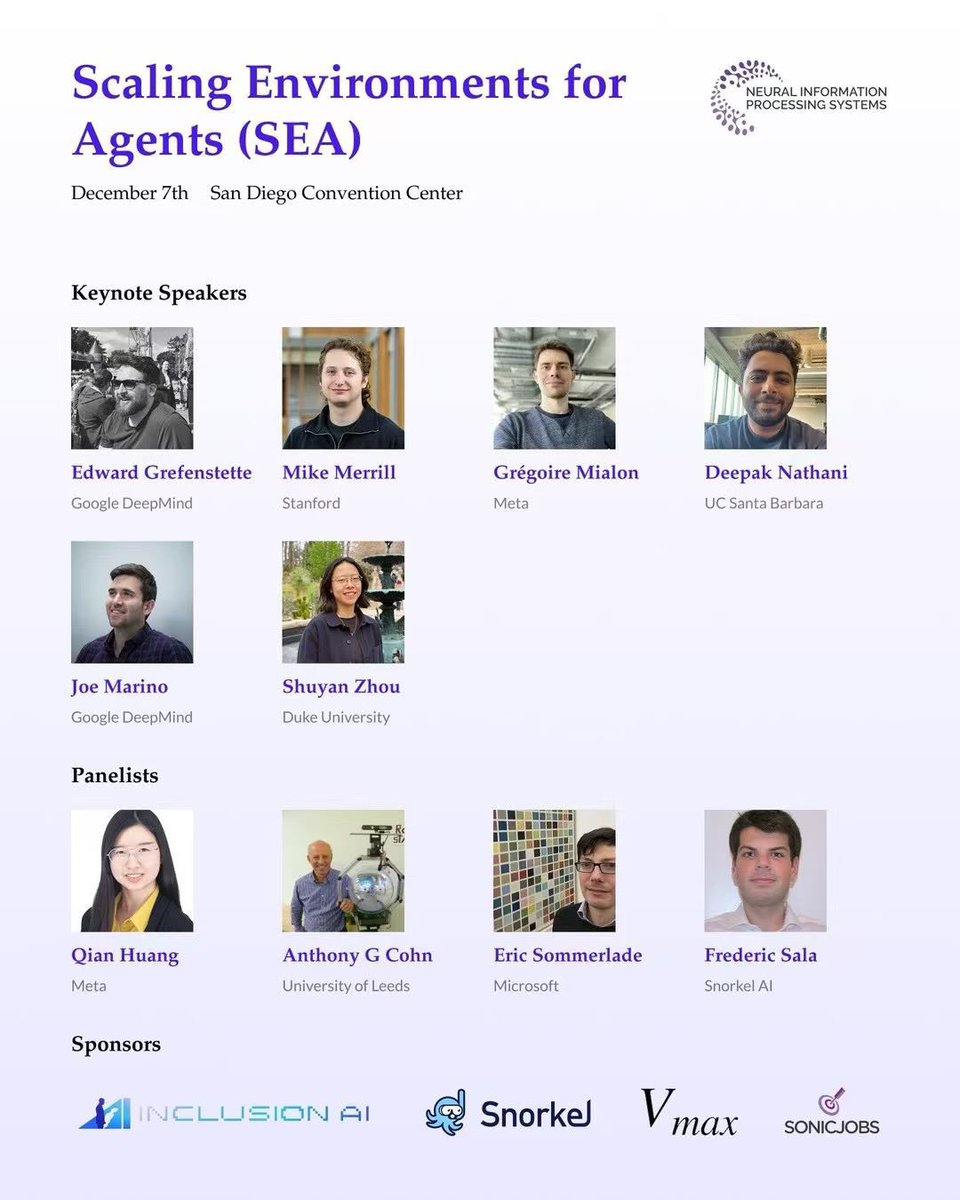

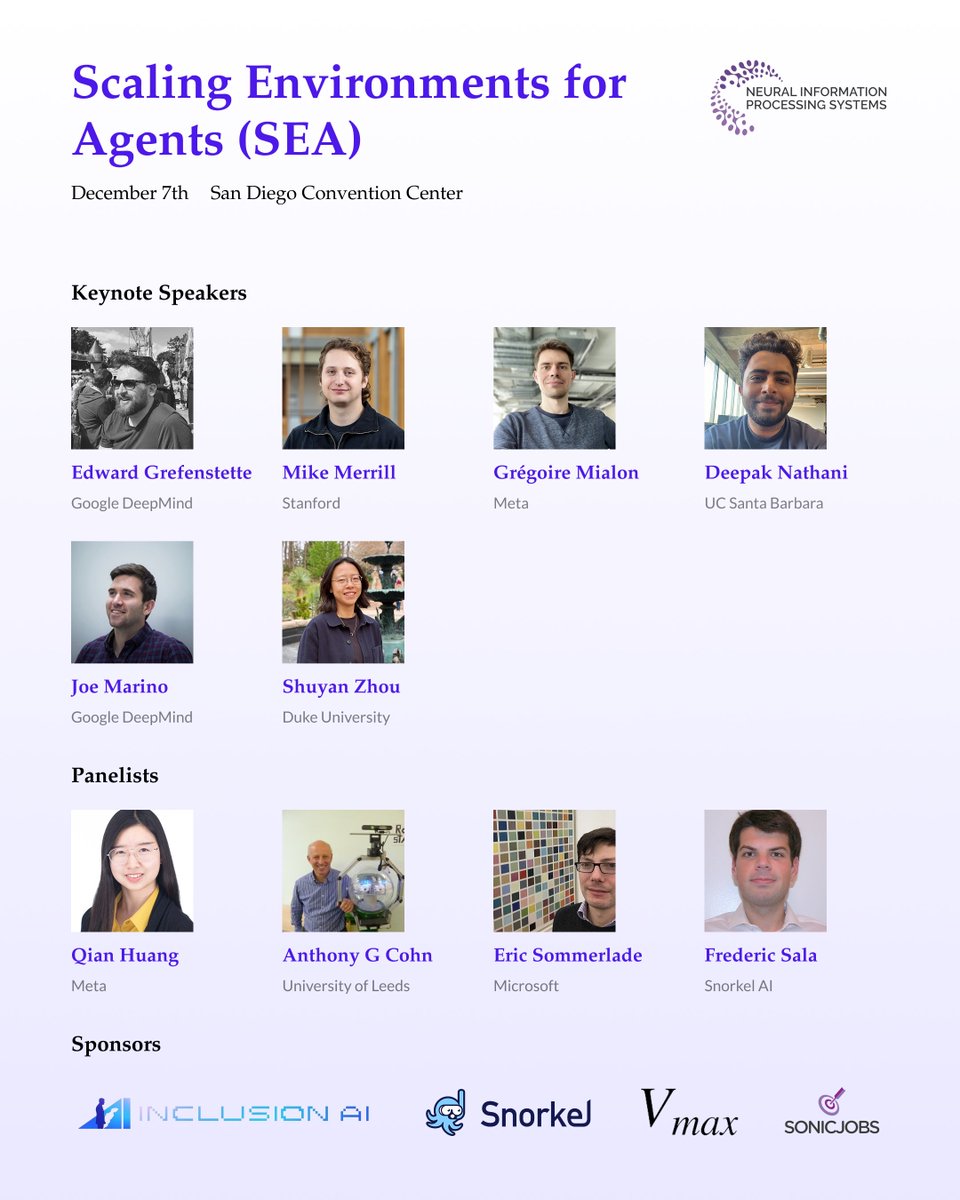

We need more **open, realistic agent environments** for training and evaluating agents! 💡 🚀 But what are the **important environments to build**? 📈 What are the **infrastructure bottlenecks** for these environments in training and evaluation, and how can we **scale up** the number of available environments? 🌟 Most importantly, how should we use these environments: **RL or beyond**? If you’re interested in discussing these questions, come join us at our workshop on “_Scaling Environments for Agents_” @NeurIPSConf tomorrow (7th Dec)! We have an amazing lineup of invited speakers and panelists, including **Edward Grefenstette** from **Google DeepMind** and **Shuyan Zhou** from **Duke**. Also check out our latest **survey paper** on the topic, led by Yuchen Huang: arxiv.org/abs/2511.09586 🎯

New preprint: Flock, a foundation model for link prediction on knowledge graphs that generalizes zero-shot to novel entities and relations. Instead of message passing, Flock operates on anonymized random walks, processed by sequence neural nets. Paper: arxiv.org/abs/2510.01510
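To make "anonymized random walks" concrete, here is a minimal sketch, assuming a knowledge graph stored as (head, relation, tail) triples. It samples a walk that alternates entities and relations, then replaces every entity and every relation with the index of its first occurrence in that walk. The function names and the inverse-edge convention (`r^-1`) are hypothetical, and Flock's actual sampling and anonymization scheme may differ; the paper is the authority on the details.

```python
import random
from collections import defaultdict

# Hypothetical representation: a KG as a list of (head, relation, tail) triples.
Triple = tuple[str, str, str]
Adjacency = dict[str, list[tuple[str, str]]]


def build_adjacency(triples: list[Triple]) -> Adjacency:
    """Map each entity to its outgoing (relation, neighbor) pairs."""
    adj: Adjacency = defaultdict(list)
    for h, r, t in triples:
        adj[h].append((r, t))
        # Assumed convention: add inverse edges so walks can traverse both directions.
        adj[t].append((r + "^-1", h))
    return adj


def random_walk(adj: Adjacency, start: str, length: int, rng: random.Random) -> list[str]:
    """Sample [e0, r1, e1, r2, e2, ...], alternating entities and relations."""
    walk = [start]
    node = start
    for _ in range(length):
        neighbors = adj.get(node, [])
        if not neighbors:  # dead end: stop early
            break
        rel, node = rng.choice(neighbors)
        walk += [rel, node]
    return walk


def anonymize(walk: list[str]) -> list[int]:
    """Replace each token with the index of its first occurrence in the walk.

    Entities (even positions) and relations (odd positions) use separate counters,
    so the output keeps the walk's pattern but no entity or relation identities.
    """
    entity_ids: dict[str, int] = {}
    relation_ids: dict[str, int] = {}
    out = []
    for i, token in enumerate(walk):
        table = entity_ids if i % 2 == 0 else relation_ids
        if token not in table:
            table[token] = len(table)
        out.append(table[token])
    return out


if __name__ == "__main__":
    kg = [("alice", "knows", "bob"), ("bob", "works_at", "oxford")]
    adj = build_adjacency(kg)
    walk = random_walk(adj, "alice", 3, random.Random(0))
    print(walk, "->", anonymize(walk))
```

Because the anonymized sequence contains only first-occurrence indices, two walks with the same pattern over entirely different entities and relations map to the same sequence. That is a plausible intuition for why a sequence model over such walks need not depend on a fixed entity or relation vocabulary.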

🚨 [Call for Papers] SEA Workshop @ NeurIPS 2025 🚨
📅 December 6, 2025 | 📍 San Diego, USA
🌐: sea-workshop.github.io

Environments are the "data" for training agents, yet they are largely missing from the open-source ecosystem. We are hosting the Scaling Environments for Agents (SEA) Workshop at NeurIPS 2025. We invite submissions on topics including, but not limited to:

- Environment Infrastructure Design
- Benchmarks and Evaluation
- LLMs in Interactive Environments
- Tool-Use and Software Environments
- Multi-Agent Systems and Simulation Environments
- Embodiment and Grounding
- Sim2Real and Deployment

An amazing lineup of speakers will share their latest insights on agent environments, including the authors of GAIA (@mialon_gregoire), WebArena (@shuyanzhxyc), Terminal-Bench (@Mike_A_Merrill), MLGym (@robertarail), MLAgentBench (@qhwang3), Genie (@_rockt), Cogbench (@janexwang), and Writing as a Testbed (@egrefen), along with panelists Anthony G Cohn, Eric Sommerlade, @Diyi_Yang, and @animesh_garg. Kudos to our organizing team (@lawhy_X, @May_F1_, @eagle_hz, @FangruLin99, @hxyscott, @AlisiaLupidi, @thu_yushengsu, Ziyú Ye, @Wade_Yin9712, @ZiyiYang35007, Jialin Yu, Sunando Sengupta, @agarwl_, @BernardSGhanem, @AnimaAnandkumar, @philiptorr) for putting this workshop together.

Fun times at ICML. Graph learning dinner, position poster gang, theory, and graph learning hike. :)

Knowledge Graph Foundation Models (KGFMs) are at the frontier of graph learning, but we didn’t have a principled understanding of what we can (or can’t) do with them. Now we do! 💡🚀 🧵 with Pablo Barcelo, @ismaililkanc, @mmbronstein, @michael_galkin, @JuanLReutter, @OrthMiguel