Pinned Tweet

Udita Pal 🧂

16K posts

@i_Udita

@saltpe_ founder · yc w22 · forbes 30u30 · fluent in data, f1, fashion & fun facts · dog mom · blr 📍 · temp content stuff: @thineAI @trykitchenai

Bangalore · Joined August 2013

323 Following · 15.9K Followers

@ismaelsevi87 @WilliamsF1 This is huge! Congratulations! A lot of folks dream of this 🎀

Udita Pal 🧂 retweeted

The most honest thing about this piece is what it doesn't say: that no app, no system, no second brain fixes the underlying tension between a biological mind and an information environment that was never built for it. We've been treating the symptom for years. This is about the cause.

Siddhartha Saxena@siddsax

there is a world outside f1 and i need to focus on that

Hamilton West End@HamiltonWestEnd

#HamiltonLDN X RAYE. 🔥

they liked this comment 💀 hope they watch it

Pulkit Kochar@kocharpulkit

yo @TheAcademy you should check out another film by this director, it’s about The Oscars

Udita Pal 🧂 retweeted

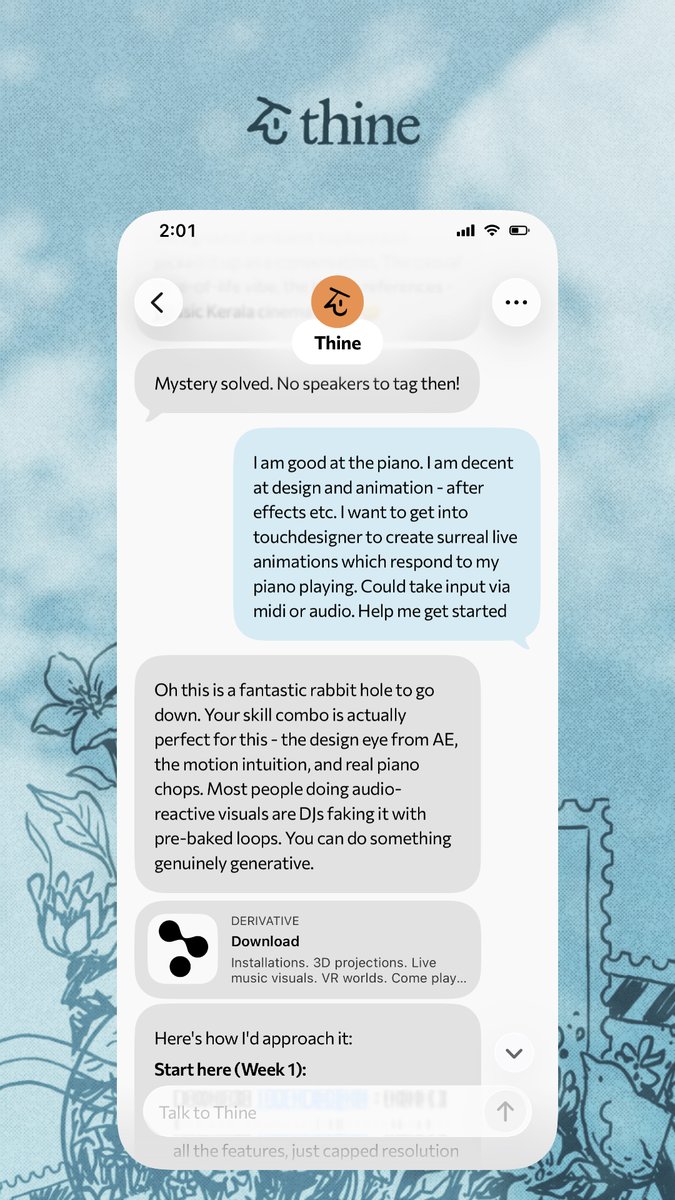

Started my TouchDesigner journey w @ThineAI keeping me company and providing inspiration.

Extremely satisfying when the animations react to midi + piano.

Got to HOLD my BOOK for the FIRST TIME in my HANDS and SCREAMED AND ALMOST HAD A PANIC ATTACK!!!

Preorder now!!!! amzn.in/d/0ccy42t0

If you don’t believe in superstitions you can pick similar bangles up from peepal tree near your house 👀

A.....@Mississippi___A

Atp it is straight away buffoonery nishorama.

Udita Pal 🧂 retweeted

LangChain shipped Deep Agents last week. @hwchase17 is arguing the harness IS the product - built-in task planning, sub-agent spawning, middleware, hooks, the works.

Garry Tan dropped "Thin Harness, Fat Skills" the same week. Opposite thesis: the harness should be as thin as possible.

I'm firmly in Garry's camp. Here's why.

Every piece of logic you put in the harness is reasoning you're taking away from the model.

40 tool definitions. God-tools with 5-second round trips. Brittle prompt chains babysitting the model at every step. You haven't built an intelligent agent. You've built a cage around one.

This was defensible two years ago. GPT-3.5 couldn't follow multi-step instructions. Even through Claude 3.5 Sonnet, models genuinely needed guardrails for basic tool use. But with the current generation - Opus 4, GPT-5, Gemini 2.5 - if you're still hardcoding orchestration logic, you're actively capping what the model can do.

The real test: what happens on model upgrade day?

Fat harness → your product breaks. You spend weeks re-engineering scaffolding to accommodate capabilities the model already has.

Thin harness → your product gets better automatically. No ceiling on reasoning. The upgrade flows straight into better outcomes.

This is the architecture that separates the 2x engineer from the 100x engineer. Same model. Same API key. Same context window.

What thin harness actually looks like in practice:

> Fat skills: judgment in markdown. Domain knowledge, heuristics, the fuzzy human stuff. Make these as rich as possible.

> Fat code: deterministic operations. Auth, validation, DB writes. Things that must be right every time.

> Thin harness: just the loop. Context in, model reasons, tool executes, result back. That's it.
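To make the three pieces above concrete, here's a minimal sketch in Python. Everything in it is an assumption for illustration: the refund skill text, the `issue_refund` tool, the action format (`{"tool": ...}` or `{"final": ...}`), and `scripted_model`, which stands in for a real LLM call. It's not how Deep Agents or any specific framework does it.

```python
from typing import Callable

# Fat skill: judgment lives in markdown, not in the harness (hypothetical content).
REFUND_SKILL = """\
# Skill: refunds
- Refund in full if the order is under 30 days old.
- Otherwise offer store credit and explain why.
"""


# Fat code: a deterministic operation that must be right every time.
def issue_refund(order_id: str, amount_cents: int) -> str:
    if not order_id.startswith("ord_"):
        raise ValueError(f"invalid order id: {order_id!r}")
    if amount_cents <= 0:
        raise ValueError("amount must be positive")
    return f"refunded {amount_cents} cents for {order_id}"


TOOLS: dict[str, Callable[..., str]] = {"issue_refund": issue_refund}

# Assumed model interface: message history in, next action out.
# An action is either {"tool": name, "args": {...}} or {"final": text}.
Model = Callable[[list[dict]], dict]


def run_agent(model: Model, skills: str, task: str, max_turns: int = 10) -> str:
    """Thin harness: context in, model reasons, tool executes, result back."""
    messages = [
        {"role": "system", "content": skills},
        {"role": "user", "content": task},
    ]
    for _ in range(max_turns):
        action = model(messages)
        if "final" in action:
            return action["final"]
        try:
            result = TOOLS[action["tool"]](**action["args"])
        except Exception as exc:
            # Hand the failure back to the model instead of branching on it here.
            result = f"error: {exc}"
        messages.append({"role": "tool", "name": action["tool"], "content": result})
    raise RuntimeError("agent did not finish within max_turns")


def scripted_model(messages: list[dict]) -> dict:
    """Stand-in for a real LLM call: refund once, then report the tool result."""
    tool_results = [m for m in messages if m["role"] == "tool"]
    if not tool_results:
        return {
            "tool": "issue_refund",
            "args": {"order_id": "ord_42", "amount_cents": 1999},
        }
    return {"final": tool_results[-1]["content"]}


if __name__ == "__main__":
    print(run_agent(scripted_model, REFUND_SKILL, "Refund order ord_42"))
```

The harness has no opinion about refunds. The policy sits in the skill text, the correctness checks sit in `issue_refund`, and the loop only moves messages around, so swapping `scripted_model` for a stronger model wouldn't require touching it.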

@hwchase17 is right that memory matters. But memory is a skill problem and a data problem - not an orchestration problem. You don't need hooks and middleware intercepting every model call. You need well-structured skills that tell the model how to use its memory.

The best AI engineering looks almost too simple. Boring infra. Judgment in markdown. A model you actually let think.

Garry Tan@garrytan

This is the simplest distillation of what I have learned about agentic engineering this year. Push smart fuzzy operations humans do into markdown skills. Fat skills. Push must-be-perfect deterministic operations into code. Fat code. The harness? Keep it thin.

Harvard: we want humans to remember everything

Me, who actively suppresses 90% of my memories to function normally, please don't

Polymarket@Polymarket

BREAKING: New Harvard AI lab seeks $100 million in funding to help humans “remember everything”
