Murat Aslan

277 posts

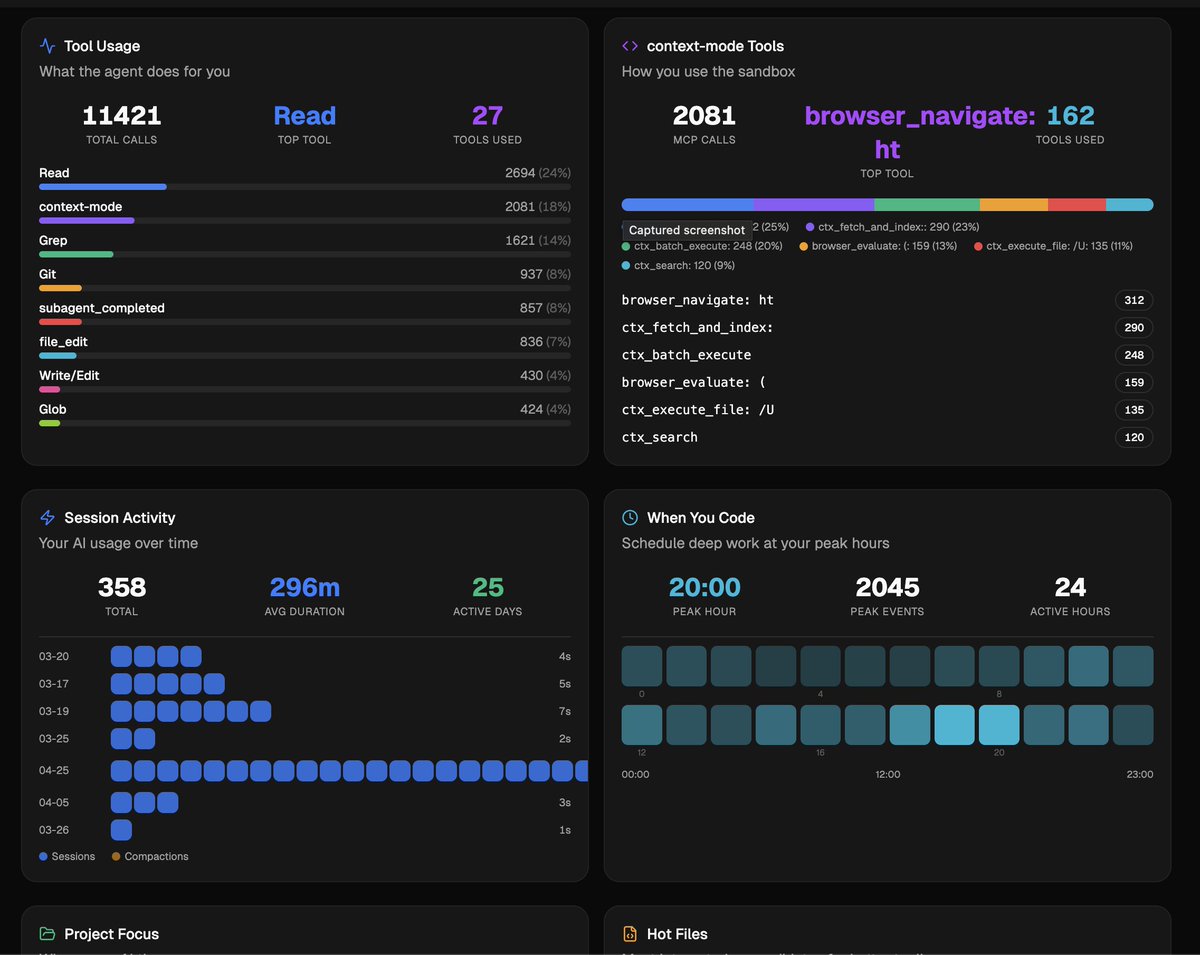

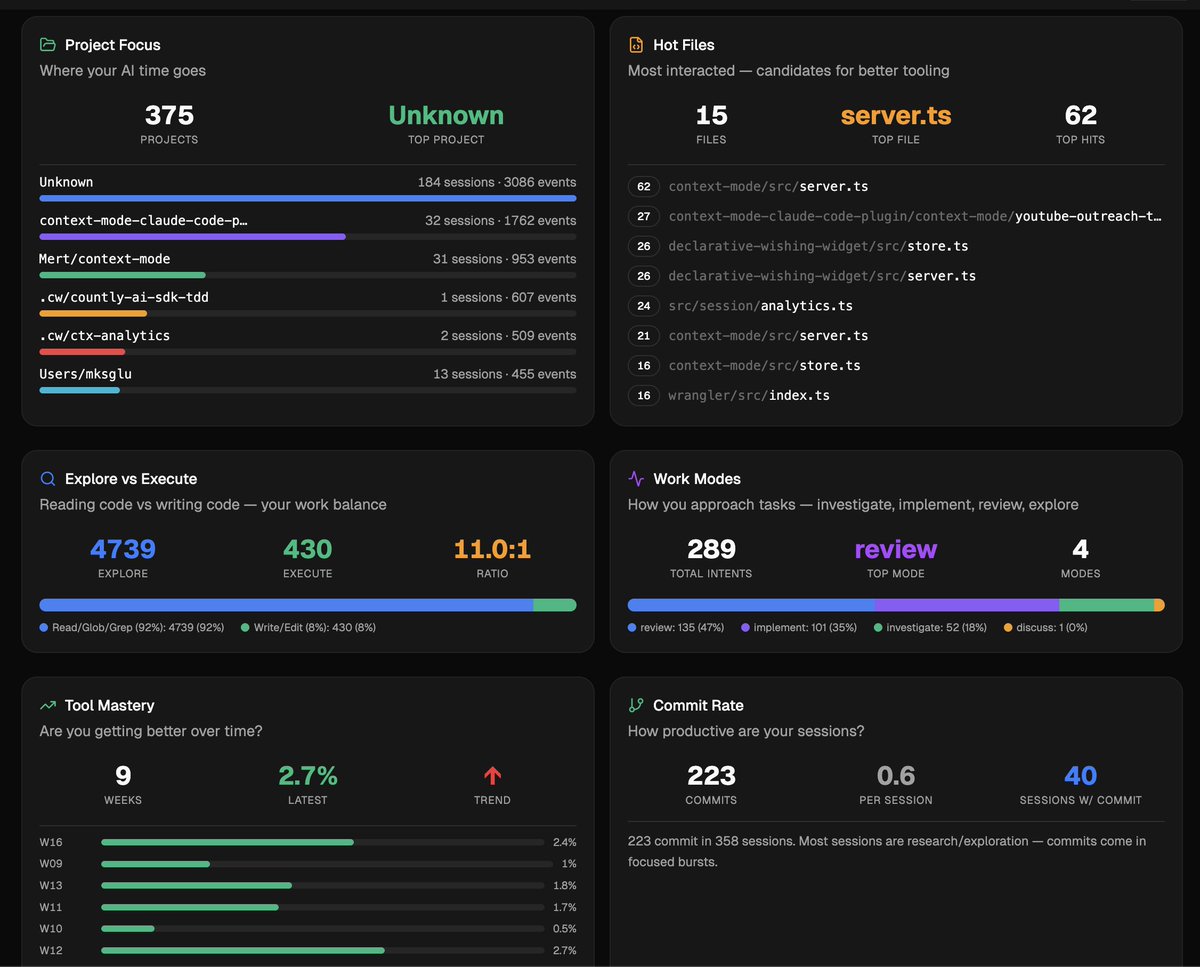

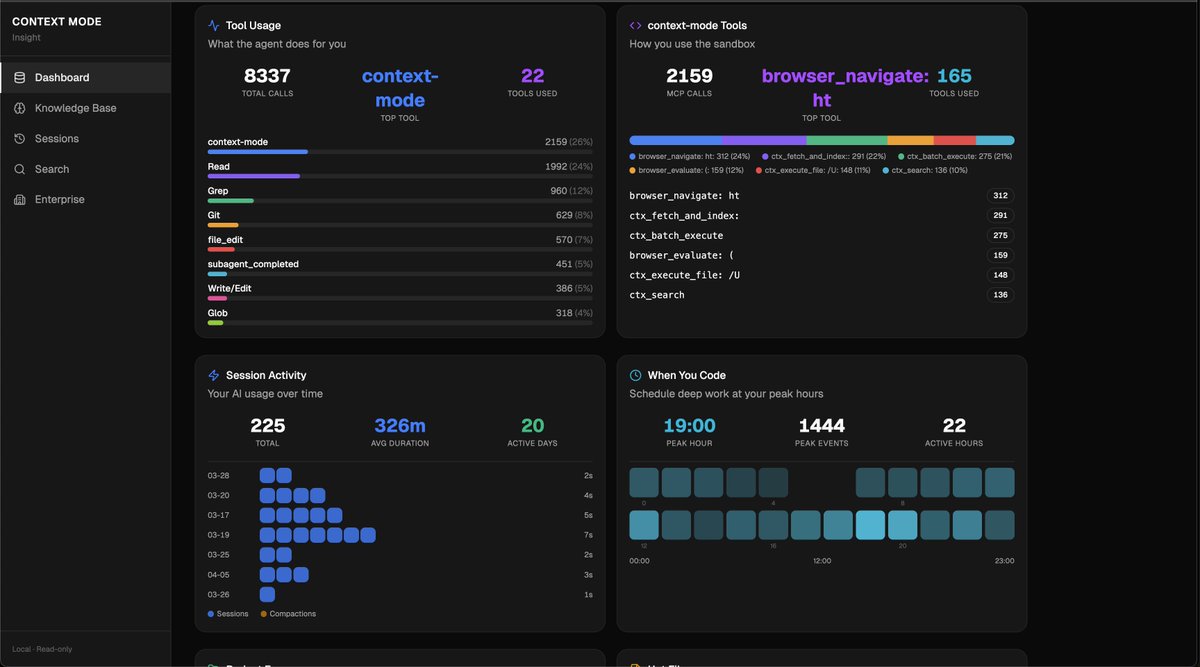

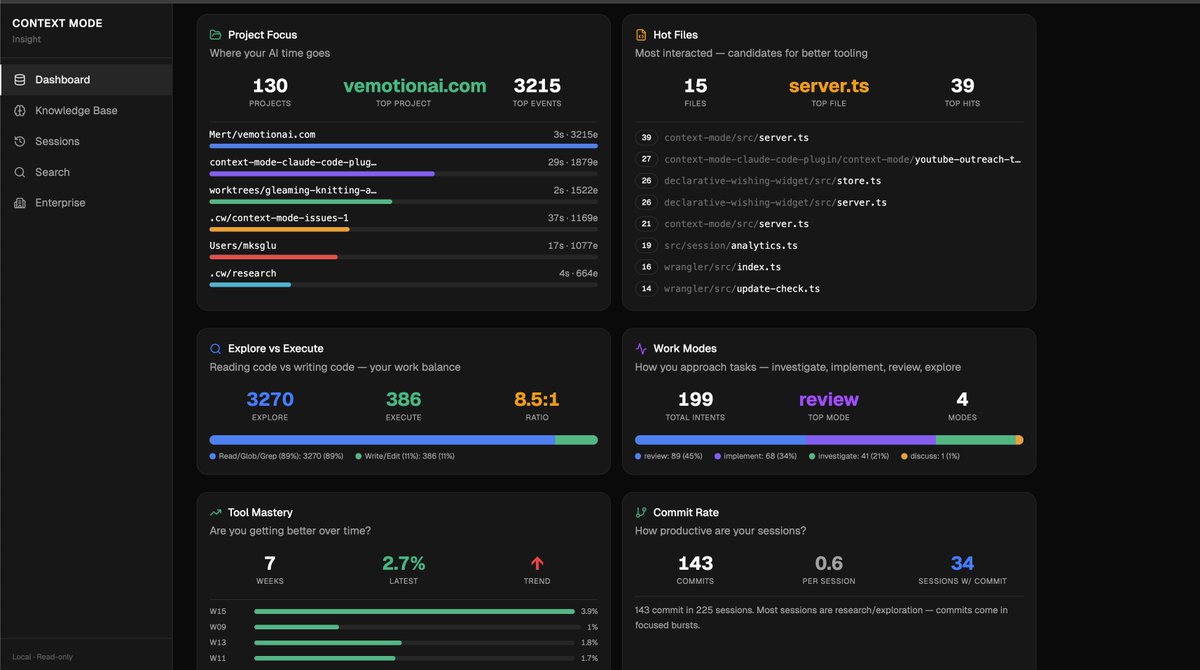

80 hours of AI pair programming. Here's what context-mode saved me.
→ $487.20 in API costs. Opus pricing. Real money, not estimates.
→ 22.3 hours of re-explaining context after compaction. Time I got back to ship.
→ 268 sessions resumed from memory. The agent never asked "what were we doing?"
→ 47 preferences auto-learned. "use TS strict" once, remembered forever.
→ 14,847 events indexed. Searchable across every session, every project.
Without context-mode |████████████████████████████████████████| 6.2 MB
With context-mode    |█░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░░| 124 KB
98% of raw data never entered my conversation. That's a 50× longer session. Same context window.
Multiply across a 13-engineer team:
$487 × 13 = $6,331/month saved
22 hours × 13 = 286 hours/month recovered
Open source. Local-first. No telemetry. No account. No SaaS lock-in.
github.com/mksglu/context…
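For anyone who wants to check the arithmetic in the post above, here is a minimal sketch. It assumes decimal units (1 MB = 1,000 KB) and uses the rounded per-engineer figures from the post ($487 and 22 hours); the variable and function names are illustrative only and are not part of context-mode.

```python
# Figures quoted in the post; decimal units are an assumption.
RAW_BYTES = 6_200_000   # 6.2 MB without context-mode
KEPT_BYTES = 124_000    # 124 KB with context-mode
TEAM_SIZE = 13


def reduction(raw: int, kept: int) -> tuple[float, float]:
    """Return (fraction of raw data kept out of the conversation, size ratio)."""
    return 1 - kept / raw, raw / kept


saved_fraction, multiplier = reduction(RAW_BYTES, KEPT_BYTES)
team_cost = 487 * TEAM_SIZE    # dollars, rounded per-engineer figure from the post
team_hours = 22 * TEAM_SIZE    # hours, rounded per-engineer figure from the post

print(f"kept out of context: {saved_fraction:.0%}, ratio: {multiplier:.0f}x")
print(f"team: ${team_cost:,} and {team_hours} hours")
```

The 50× figure is simply the ratio of the two sizes; whether it translates into a 50× longer session depends on what else occupies the context window.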

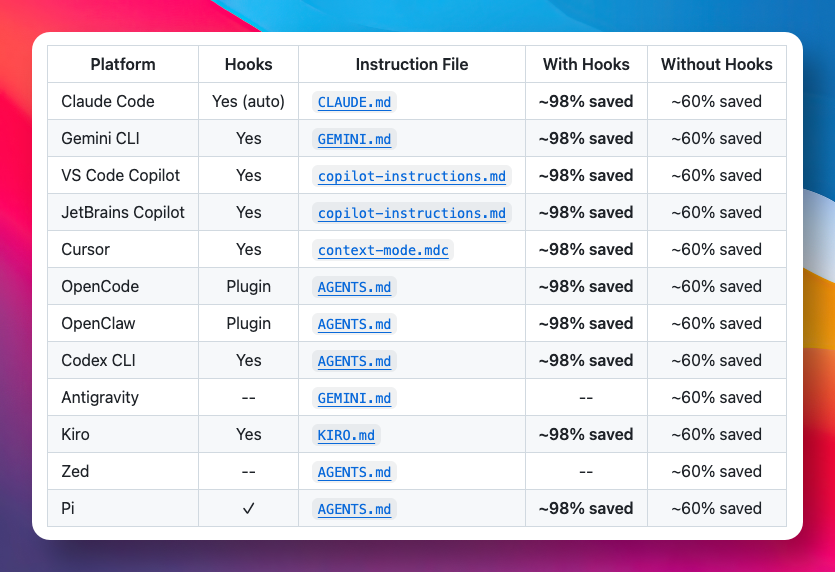

We just wrapped up our 2nd @Solana Buildstation in Ankara with @SuperteamTR 🇹🇷 Across two sessions (Apr 25 & May 2), we brought together local Solana builders to dive into smart contracts, AI integrations, and security, plus hands-on mentoring. Special thanks to @WhiteMoonDev, who showcased the tool he built for Solana developers during his session. While walking through Solana fundamentals, he also showed how to quickly build Solana programs using AI and how to connect and actually use them with @orquestradev. What a chad. Solid mentor, and an impressive project to back it up. Special thanks to @iammurataslan for showcasing what context mode actually does and why it matters. Context mode basically prevents AI tools from getting flooded by raw data, keeping only what’s relevant in the context and reducing usage by ~98% (built by @mksglu). Seeing it in action across different AI tools like Claude and OpenClaw made it even clearer how powerful this approach is for building faster and more efficient workflows. Special thanks to Emrah Urhan (@raxetul) for joining us today and sharing his expertise in embedded systems, low-level hardware/software integration, and modern software development practices. All part of getting ready for the Solana @colosseum Frontier Hackathon. See you next week. 🤘

Frontier Buildstation Ankara! May 2. Come and build with @SuperteamTR & @turkiyerustcom & @buildermare this Saturday ⭐️ Surprise guests ⭐️ Private mentoring sessions to get ready for @colosseum 🤘 Register via Luma 👇

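116.5K+ users · 11.7K+ GitHub stars · 793 forks · 14 platforms. Thanks a lot for sharing, really appreciate it! 🫡 To our friends in the Chinese developer community: I look forward to your support and feedback. I take your opinions seriously and am grateful for your continued support. There is currently a PR about proxy support, but I'm not sure whether it is really necessary or whether the implementation is correct. If this is a real need in the Chinese network environment, please let me know. Thanks, everyone, for your help. github.com/mksglu/context…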

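I recently came across an open-source project, Context Mode, that effectively addresses the problem of AI coding tools overflowing their context. The core idea is to keep raw data in a sandbox and send only the processed results into the context window. According to the project, it can compress 315 KB of raw output down to 5.4 KB, a size reduction of up to 98%. It also records session state in a local database, so a session can resume seamlessly after the conversation is compacted. GitHub: github.com/mksglu/context… It supports 14 platforms, including mainstream AI coding tools such as Claude Code, Cursor, Gemini CLI, and VS Code Copilot. It ships with 11 built-in language runtimes, plus knowledge-base indexing and smart search, so content can be retrieved on demand instead of being dumped wholesale into the context. All data is processed locally, with no network access and no uploads. If your AI coding tool often starts hallucinating halfway through a task, this one is worth a try.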
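The "raw data stays in the sandbox, only the result enters the context" idea can be sketched roughly as follows. This is not context-mode's implementation: `run_in_sandbox` is a hypothetical helper, the log data is made up, and a plain subprocess is used as a stand-in for a sandbox (it provides no real isolation).

```python
import subprocess
import sys


def run_in_sandbox(code: str, max_chars: int = 2000) -> str:
    """Run `code` in a separate Python process and return only what it prints.

    Hypothetical helper: the raw data lives and dies in the child process,
    and only the (truncated) printed result is handed back to the caller.
    """
    result = subprocess.run(
        [sys.executable, "-c", code],
        capture_output=True,
        text=True,
        timeout=30,
    )
    output = result.stdout if result.returncode == 0 else result.stderr
    return output[:max_chars]


# The script builds 10,000 fake log lines but prints a one-line summary.
script = """
lines = [f"{'ERROR' if i % 100 == 0 else 'INFO'} request {i}" for i in range(10_000)]
errors = [line for line in lines if line.startswith("ERROR")]
print(f"{len(lines)} lines, {len(errors)} errors; first: {errors[0]}")
"""

summary = run_in_sandbox(script)
print(summary)
```

Only the single summary line would reach the model; the 10,000 raw lines never leave the child process.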

Had a great time at ClawCon Ankara. Loved sharing “The Other Half of the Context Problem” and meeting amazing people. The energy, conversations, and feedback were amazing. Thanks to everyone who made it happen. clawcon.context-mode.com

I'll be giving a short talk at ClawCon Ankara on April 29. "The Other Half of the Context Problem." Five minutes on something that's been bugging me. Your AI coding agent spends most of its context window re-sending tool output it already processed. Every turn, the full conversation ships to the API again. The context fills up, the agent compacts, and you start over. Re-explaining your codebase to a model that just lost its memory. I built context-mode to fix this. It's an MCP server that sits between the agent and its tools. Intercepts the output, indexes it into a local FTS5 database, gives the agent a 1 KB summary instead. 10K stars on GitHub, 82K npm downloads, works on 14 platforms. I'll walk through how the interception works, the Think in Code paradigm, and why session persistence matters more than people realize. Organized by @buildermare (@0xVeliUysal, Furkan Demir) through @clawcon and the @openclaw community. Special thanks to @iammurataslan for making it happen. If you're in Ankara, come by.
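A rough sketch of the "intercept, index, summarize" flow described above, using Python's built-in `sqlite3` module. It assumes the local SQLite build includes the FTS5 extension (most do, but not all). The table name, the chunk size, and the `index_output` and `search` helpers are all hypothetical and are not taken from context-mode's code.

```python
import sqlite3

db = sqlite3.connect(":memory:")
# Requires an SQLite build with FTS5 enabled (an assumption about the environment).
db.execute("CREATE VIRTUAL TABLE chunks USING fts5(source, body)")


def index_output(db: sqlite3.Connection, source: str, text: str, chunk_lines: int = 20) -> str:
    """Store raw tool output in chunks and return a short summary in its place."""
    lines = text.splitlines()
    for start in range(0, len(lines), chunk_lines):
        chunk = "\n".join(lines[start:start + chunk_lines])
        db.execute("INSERT INTO chunks (source, body) VALUES (?, ?)", (source, chunk))
    db.commit()
    return f"indexed {len(lines)} lines ({len(text)} chars) from {source}; search to retrieve"


def search(db: sqlite3.Connection, query: str, limit: int = 3) -> list:
    """Return (source, highlighted snippet) pairs for the best-matching chunks."""
    return db.execute(
        "SELECT source, snippet(chunks, 1, '[', ']', '...', 12) "
        "FROM chunks WHERE chunks MATCH ? ORDER BY rank LIMIT ?",
        (query, limit),
    ).fetchall()


# Made-up tool output: 500 uninteresting lines and one failure at the end.
raw = "\n".join(f"line {i}: ok" for i in range(500))
raw += "\nline 500: connection timeout on db-primary"

summary = index_output(db, "test-run", raw)
hits = search(db, "timeout")
print(summary)
print(hits)
```

The agent would see only `summary` up front and would call something like `search` when it needs a specific detail, so the bulk of the output stays in the database instead of the conversation.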