Pinned Tweet

James Stephenson

51.7K posts

@ICannot_Enough

@ElonMusk and I own Tesla (along with several other people).

Florida. Joined September 2011

686 Following 283.9K Followers

@TrevorScottReal @SawyerMerritt @carwowuk Yes, that was also my suspicion. When you upload videos to YouTube, it offers you the option of testing different titles to see if they perform differently when shown simultaneously to different users.

Carwow just released a new video to its 11 million YouTube subscribers titled: "Why Tesla Full Self Drive is Pointless!"

@carwowuk misleads its viewers into thinking Tesla’s Autopilot is FSD, even though FSD hasn’t been approved in the UK yet. Autopilot isn’t meant for city driving, yet they test a bunch of scenarios that Autopilot wasn't built to do in the first place....

The difference between weather conditions contributing to an accident and a vehicle’s speed while an ADAS is engaged contributing to the accident is that the human driver can cause the speed of the vehicle to be dangerous before disengaging the ADAS but the human driver cannot change the weather.

@ChadMoran @ICannot_Enough You can’t have ego and seek truth at the same time

They cannot coexist

Justine Saint Amour’s Cybertruck did not crash “on FSD 13”.

It crashed on FSD Justine (Flaky Supervisory Discipline).

Chad Moran @ChadMoran

Is this not a double standard? The CT was on FSD 13, this test on FSD 14. Every time I post a critical issue FSD has done 90% of the comments are yelling at me about how I'm "not on the latest version". Yet we're using a version 6 months newer to prove an older one DIDN'T do something?

Every current Tesla ADAS is explicitly SAE Level 2. Every manual, software release note, in-vehicle driving assistant activation, and federal regulation explicitly state that the human driver must remain fully engaged in the dynamic driving task, actively supervising at all times.

Supervised autonomy requires the driver to maintain responsibility for the vehicle's safe operation at all times, so nobody should fall victim to the “FSD was driving too fast before the driver panicked, disengaged, and crashed” line of thinking.

That's the mindset that leads to too many ADAS crashes: driver inattention causes delayed responses which cause traffic accidents. If the driver is being responsible at all times, they watch the vehicle's speed, intervene when necessary, and thereby avoid panicked situations altogether.

If the courts keep finding “shared fault” for collisions that a vigilant driver would have avoided, manufacturers will be punished for deploying (and possibly deterred from developing) helpful but imperfect Level-2 systems that bear the promise of truly unsupervised autonomy that will save even more lives.

Empathy for a driver who panicked, caused a collision, and was injured is human, but the standard for acceptable driver monitoring of a Level 2 ADAS cannot be: “how humans often react when surprised.” Good supervision means never allowing the vehicle to surprise you by getting itself into a situation where an at-fault collision is unavoidable. That’s the standard Tesla designed for, that regulators require, and that actually makes roads safer.

@ChadMoran We may just have different standards for how much human driver awareness is acceptable when supervising an ADAS.

@ICannot_Enough I do, and I appreciate you discussing this in good faith even if we don't fully agree.

I acknowledge that human drivers do often panic and cause traffic collisions. The video footage of Justine Saint Amour's Cybertruck collision seems consistent with that narrative.

I hope you will agree I have described a problem statement rather than a desired outcome; frequency of observed occurrence is no rationale for a determination of reasonableness.

@ICannot_Enough > Panic is never reasonable

What? You're saying it's unreasonable for a human to panic in this situation? really? You have that little empathy?

Panic is never reasonable, and the driver's responsibility for the safe operation of the vehicle does not begin at the moment they disengage the ADAS.

The crux of our disagreement seems to hinge on the moment of disengagement, with you believing FSD 13 was 100% responsible for the vehicle's instantaneous vector at that moment and me believing the driver was responsible for the vehicle's velocity in the moments prior, including the possibility that the driver manually pressed the accelerator pedal to override FSD 13's intention to coast or decelerate, rather than accelerate, up the exit ramp.

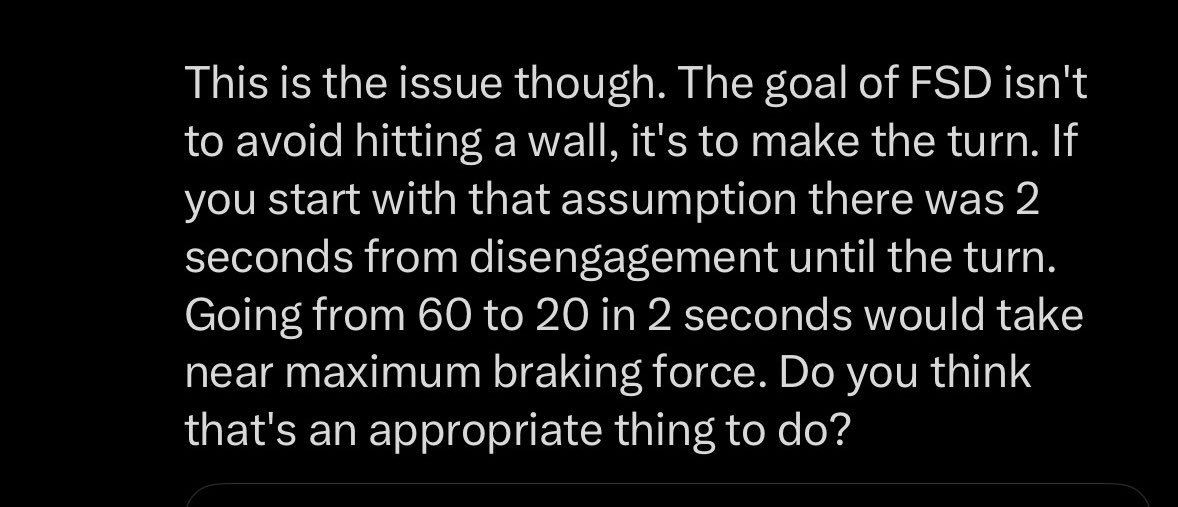

When FSD was disengaged, the vehicle was traveling 58 MPH, 2 seconds (170 ft) from the start of the turn. That left the driver with very little time to slow down to make the turn.

I think it's reasonable that if you see a 15 MPH sign while on FSD and you're doing 58 MPH you would disengage and panic.
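For anyone who wants to check the figures in this post, here is a minimal sketch of the arithmetic. It assumes constant deceleration and zero driver reaction time, both of which are simplifications that do not come from the thread, and the function names are purely illustrative.

```python
# Sanity check of the quoted figures: 58 MPH, 2 seconds, 170 ft, 15 MPH sign.
# Assumptions (not from the thread): constant deceleration, zero reaction time.

FT_PER_MILE = 5280
SEC_PER_HOUR = 3600
G_FT_S2 = 32.174  # standard gravity in ft/s^2


def mph_to_fps(mph: float) -> float:
    """Convert miles per hour to feet per second."""
    return mph * FT_PER_MILE / SEC_PER_HOUR


def distance_ft(mph: float, seconds: float) -> float:
    """Distance covered at a constant speed over the given time."""
    return mph_to_fps(mph) * seconds


def required_decel_fps2(v0_mph: float, v1_mph: float, dist_ft: float) -> float:
    """Constant deceleration needed to slow from v0 to v1 within dist_ft.

    Uses v1^2 = v0^2 - 2*a*d, solved for a.
    """
    v0 = mph_to_fps(v0_mph)
    v1 = mph_to_fps(v1_mph)
    return (v0 ** 2 - v1 ** 2) / (2 * dist_ft)


if __name__ == "__main__":
    d = distance_ft(58, 2)
    a = required_decel_fps2(58, 15, 170)
    print(f"Distance covered in 2 s at 58 MPH: {d:.1f} ft")
    print(f"Deceleration to reach 15 MPH in 170 ft: {a:.1f} ft/s^2 ({a / G_FT_S2:.2f} g)")
```

Under those assumptions the 2-second and 170 ft figures agree with each other, and slowing to 15 MPH within that distance would take roughly 0.6 g of sustained braking. Any reaction delay raises that number.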

I agree that sometimes the ADAS is to blame, sometimes the human driver is to blame, sometimes both are to blame, and sometimes neither is to blame (due to an external cause).

How did you determine this was a shared responsibility collision with the ADAS bearing part of the blame, rather than a driver’s fault only situation?

I am saying that I think there's a real possibility that FSD made a mistake and was traveling too fast and thus contributed to the situation.

With that said, the driver is still ultimately responsible for what happens. My v14 FSD would have run into another car while parking if I had let it. That would still have been my fault. But that doesn't mean FSD didn't make a mistake. Both can be true.

@ChadMoran Yes, I inferred an implicit assumption that FSD was supposed to have been traveling at a lower rate of speed and that it was FSD 13’s fault (rather than the human driver Justine’s fault).

Was that your intent when you wrote it?

If not, what did you intend for it to say?

@ICannot_Enough You think that says I'm saying FSD is at fault? That's a leap man, I'm sorry.

I'm confused about where I said FSD was responsible, though. I said it contributed. There's a difference.

And you're 100% right, there is a chance the driver was pressing the accelerator. But hear me out because I want to have this discussion in good faith.

1. Elon likely would have said this, he LOVES proving people wrong.

2. I went frame by frame and up until the disengagement the vehicle was maintaining a constant speed.

These sure seem like hints that perhaps she wasn't, right?

Could it be that FSD was driving too fast for the exit, she saw the 15 MPH sign, panicked, disengaged, and didn't apply enough braking pressure to arrest the vehicle? That seems like a reasonable conclusion.

You’ll forgive me for having a difference of opinion, Chad, but…

… to me, the screenshot below sure looks like you’re assuming FSD 13— rather than the human driver Justine— was responsible for the Cybertruck’s velocity up the exit ramp. I find such an assertion curious because it is the human driver who can override the ADAS and not the other way around.

I’ll permit for the sake of argument the possibility that FSD 13 alone could have chosen to drive up the exit ramp at 57 mph, if you will permit for the sake of argument that Justine could’ve been pressing the accelerator pedal with her foot, if she were unaware of the upcoming sharp turn or simply distracted (Distracted Driving kills thousands of motorists per year in the US alone, per NHTSA data, in many cases without the involvement of any ADAS).

What gives you confidence that Justine wasn’t overriding FSD 13’s deceleration intent by pressing the accelerator pedal (which would not have disengaged Tesla’s ADAS)?

You may believe FSD 13 was speeding because it thought it was on the interstate highway below, but could the crash video not just as easily be explained by the alternative hypothesis outlined above? 🤔

@ICannot_Enough I never once said it was assigned blame. Y'all keep projecting.

Contributing to a collision and being the cause are different.

Correction:

Tesla generously chooses to categorize such collisions as “ADAS-related” but that is quite different from Tesla assigning its ADAS as the proximate cause of the collision.

Anyone being safely driven past concrete barriers by Tesla’s Autopilot or FSD ADAS could cause an “ADAS-related” accident at any time, if they chose to apply force to the accelerator pedal and steer into a concrete barrier within 5 seconds, but their actions— not Tesla’s ADAS— would be the proximate cause of the collision.

@ICannot_Enough @Michael_K_AZ I can remember when Cronkite was watched by 15% of the nation at the same time. A 15 market share is unheard of today. Now a normal post from you, James, or another big account on this platform has more eyeballs than the CBS Evening News. The end is nigh.

I went to the exact same location of the Cybertruck accident shown in the Fox News video. This is the US-69/59 Eastex Freeway northbound HOV lane at the Y-split near the Eastex Park & Ride exit (approaching from downtown Houston toward Humble). In the Fox News video, the vehicle failed to follow the right curve, going straight into the barrier.

Well, I tested it twice today with Tesla FSD engaged the entire time with zero human intervention. And unless you think I am a hologram speaking to you from another dimension now, it worked out really well. Here is the video of me taking the exact same curve twice, with Tesla FSD v14.2.2.5.

@JohnAnth61 @28delayslater At least in the case of the mercenaries Dan O’Dowd won’t pay until they can show him footage of “FSD” crashing into something— yes, we need cameras trained on their hands and feet. 🤣

@ICannot_Enough @28delayslater Do we need a camera pointing down at the steering wheel?

Maybe another one pointing down at the driver's feet?

Would come in handy for all those cases where the driver drove through the front of a convenience store.

🙄

@JCChristopher @Michael_K_AZ TV viewership erodes further every year— even for live major sporting events, which had been the last hope.

In the 80’s, everyone watched TV.

People have too many superior alternatives nowadays.

@Michael_K_AZ @ICannot_Enough You will never see this on any MSM. But every time they do a story with no evidence, and then someone debunks them, the MSM gets weaker and weaker, by the day. So onwards.

@WR4NYGov @AlternateJones Yann LeCun is a fairly smart person with severe Imposter Syndrome (caused by being compensated as though he were an extraordinarily smart person) that compels him to attack true geniuses.

@AlternateJones Good catch. That’s @ICannot_Enough level receipts.
