Igor Kulakov

@ihorbeaver

Building MicroFactory — assembling robot units that bring Factorio into the real world. Seed soon.

Robbing your competition of sleep and/or focus is the oldest trick in the book. I knew a scientist in a race to publish who would suggest The Wire to his competitors.

5 million humanoid robots working 24/7 can build Manhattan in ~6 months. now just imagine what the world looks like when we have 10 billion of them by 2045. now imagine the year 2100.

[1/5] Fine-grained teleop is slow, error-prone, and frustrating even for experts. We introduce a real2sim2real shared autonomy framework that learns a residual copilot for low-level corrections. It enables: 🎮 fine-grained teleop for novices 🤖 a copilot learned from <5 min of teleop data 📈 higher-quality demonstrations for imitation learning 🔗 residual-copilot.github.io

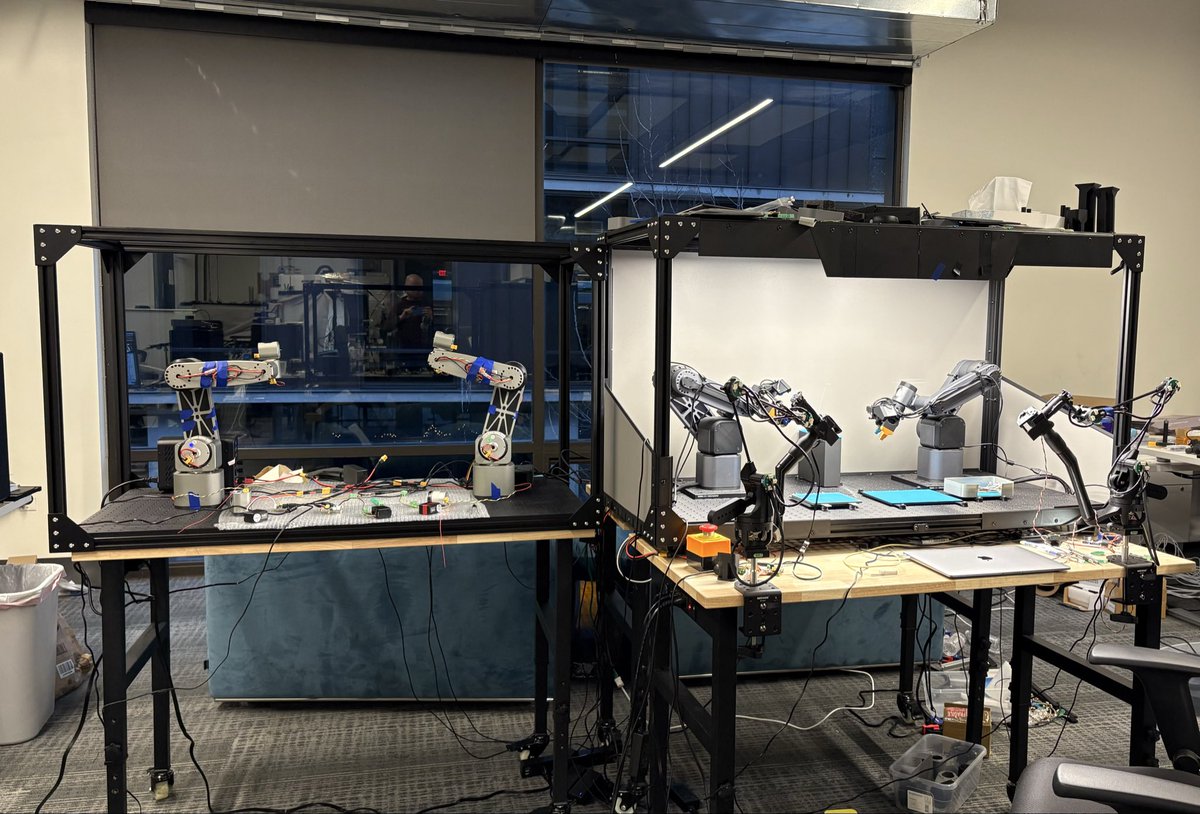
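The residual copilot idea in the [1/5] post can be pictured as adding a small, bounded correction on top of the operator's command. The sketch below is only an illustration under stated assumptions: `apply_residual`, `toy_copilot`, and the `max_correction` bound are hypothetical names and values, and the toy copilot is a hand-written stand-in, not the learned policy from the linked project.

```python
def apply_residual(human_action, residual, max_correction=0.02):
    """Add a clipped residual correction to the operator's command.

    Assumption: actions are per-axis deltas, and the copilot is only
    allowed to change each axis by at most max_correction.
    """
    clipped = [max(-max_correction, min(max_correction, r)) for r in residual]
    return [h + c for h, c in zip(human_action, clipped)]


def toy_copilot(human_action, target_action):
    """Hypothetical stand-in for a learned residual policy.

    It proposes the difference between the operator's command and a
    known target; a real copilot would predict this from observations.
    """
    return [t - h for h, t in zip(human_action, target_action)]


# The operator overshoots on the first axis and is nearly right on the second.
human = [0.10, 0.20]
target = [0.05, 0.21]
corrected = apply_residual(human, toy_copilot(human, target))
print(corrected)
```

Clipping keeps the operator in charge of the coarse motion: the copilot can only make low-level corrections within the bound, which matches the "residual" framing of the post.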

Here is the full run of MicroFactory autonomously assembling a photo frame, a real product sold on Amazon. This is a good example of how to handle complex tasks while we do not yet have a large set of robotic data to train a big general AI model. Thread:

Intelligence is becoming too cheap to meter. We're on track for AI reasoning to be 100x cheaper by 2027. When hypothesis generation is free and experimental design is automated, the bottleneck isn't ideas—it's the physical world. Science is about to go exponential. Get ready for all frontier labs to start building "Science Factories" to mine new data out of nature.

Robotic insight 2: One undocumented feature of VLA models is that they can generalize pretty well from very little data if you run them on good hardware. Here, with 35 demonstrations (5 minutes in total), the robot can pick up a small PCB from any position and at any rotation angle.

The advantage of arms with industrial internals is that they don’t wobble, so the AI model can control them faster simply by increasing the frames per second. Here, we multiplied the FPS by 3× compared to teleoperation (180 instead of 60).
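The arithmetic behind the 3× figure is easy to check. The sketch below assumes that "multiplying the FPS" means executing the same sequence of per-frame actions at a higher rate; the post does not spell this out, and the 600-frame episode length is a made-up example, not a number from the source.

```python
def speedup(record_fps, playback_fps):
    """Ratio between the playback rate and the recording rate."""
    return playback_fps / record_fps


def duration_seconds(num_frames, fps):
    """Wall-clock time needed to execute num_frames actions at a given rate."""
    return num_frames / fps


TELEOP_FPS = 60      # rate during teleoperation (from the post)
POLICY_FPS = 180     # rate when the model drives the arm (from the post)
EPISODE_FRAMES = 600  # hypothetical episode length, for illustration only

print(speedup(TELEOP_FPS, POLICY_FPS))
print(duration_seconds(EPISODE_FRAMES, TELEOP_FPS))
print(duration_seconds(EPISODE_FRAMES, POLICY_FPS))
```

Under this assumption, each action step shrinks from 1/60 s to 1/180 s, so an episode that took 10 seconds to demonstrate would run in about a third of that time.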

Building upon SimpleVLA-RL, we have implemented real-world RL on long-horizon dexterous tasks and witnessed a non-trivial (roughly 300% relative) performance improvement over the SFT model, along with surprising auto-recovery capabilities. Blog coming soon. The entire process uses very little data and training compute, costing basically no more than a single robotic arm, which hints that real-world generality for machines is actually within sight.