Pinned Tweet

Justin Strong

4.7K posts

@GPTJustin

Excited about startups and robots | cooking up something new | Does a lot of hackerhouses

San Francisco, CA · Joined March 2015

2.5K Following · 2.3K Followers

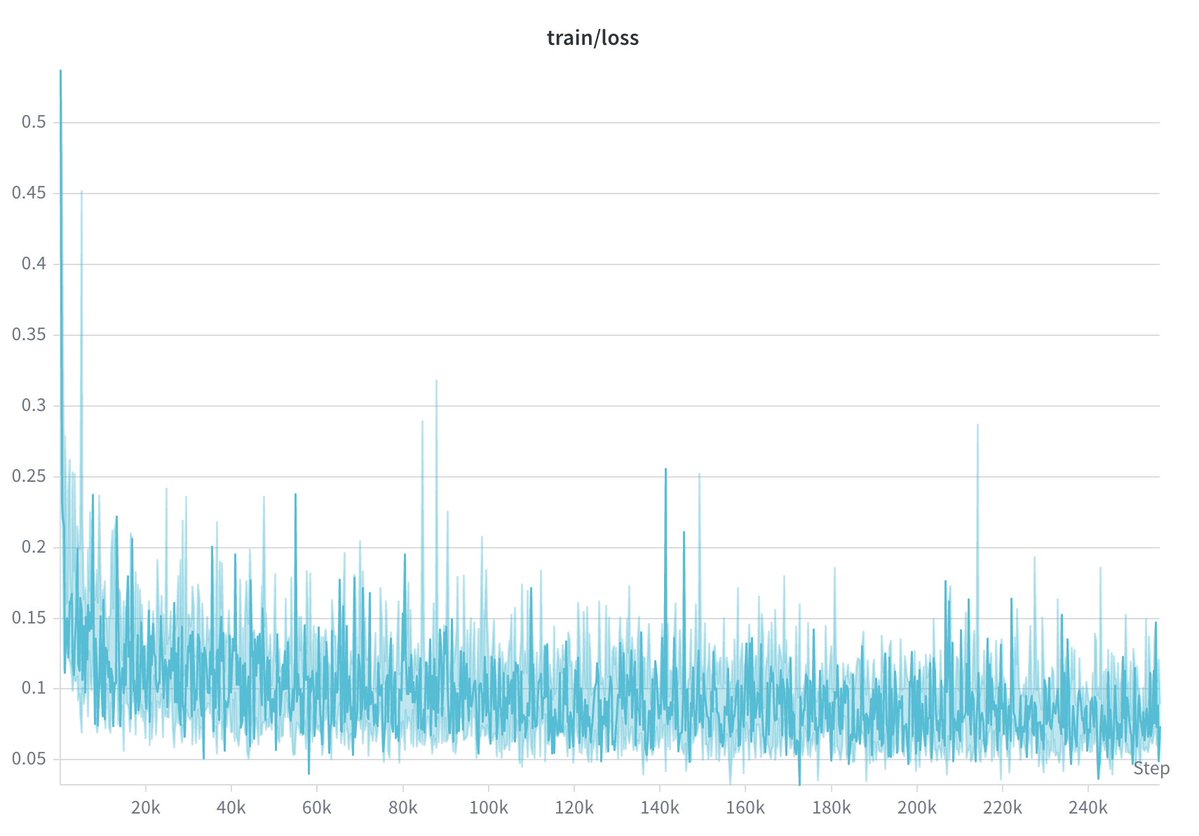

Training Pi0.5 to control the SO-100 is 65% complete and the model has seen 6,500 robot demonstrations across hundreds of different tasks. Loss is trending down from 0.39 to 0.08, and training is expected to complete tomorrow.

I started this project while staying in @eczachly's creator house for NVIDIA GTC, running local scale ups on my GPU rig to validate before committing to the cloud run. After driving my computer 300 miles home I discovered it no longer had functioning internet or keyboard IO.

Today's task will be fixing the computer before training completes so I am able to test the model on my SO-100. I'll post results tomorrow.

@GPTJustin Dumb for a week for me.. TurboDerp SlopCannon

Didn't think that "drones with grippers" was in the cards for a likely embodiment for pi models, but there we have it. It's literally a flying gripper. But believe it or not, pi models have been used on even stranger embodiments before...

Stanford MSL @StanfordMSL

π, But Make It Fly ✈️ We fine-tuned π0, a VLA model pretrained entirely on manipulators, to fly a drone that picks up objects, navigates through gates, and composes both skills from language commands.

@StanfordMSL This is really fascinating, why did you choose pi0?

@VMises76153 What was the data scale you used? If you created this robot yourself I’m guessing you just don’t have anywhere near enough data

There are multiple explanations for what I found, the most likely being that I need to freeze some other set of weights because my training destroyed whatever the VLA had learned.

Perhaps I just need to translate the action space differently

Maybe my latency from video is too high

Maybe I just don't have anywhere near enough data to do this

Maybe the lighting was bad

There's really no telling. It would take years to work through all those variables, and there are a lot of things out there that can be tried.

I am not a machine learning researcher, rather just a person trying to make a product and leverage the state of the art in robotic models.

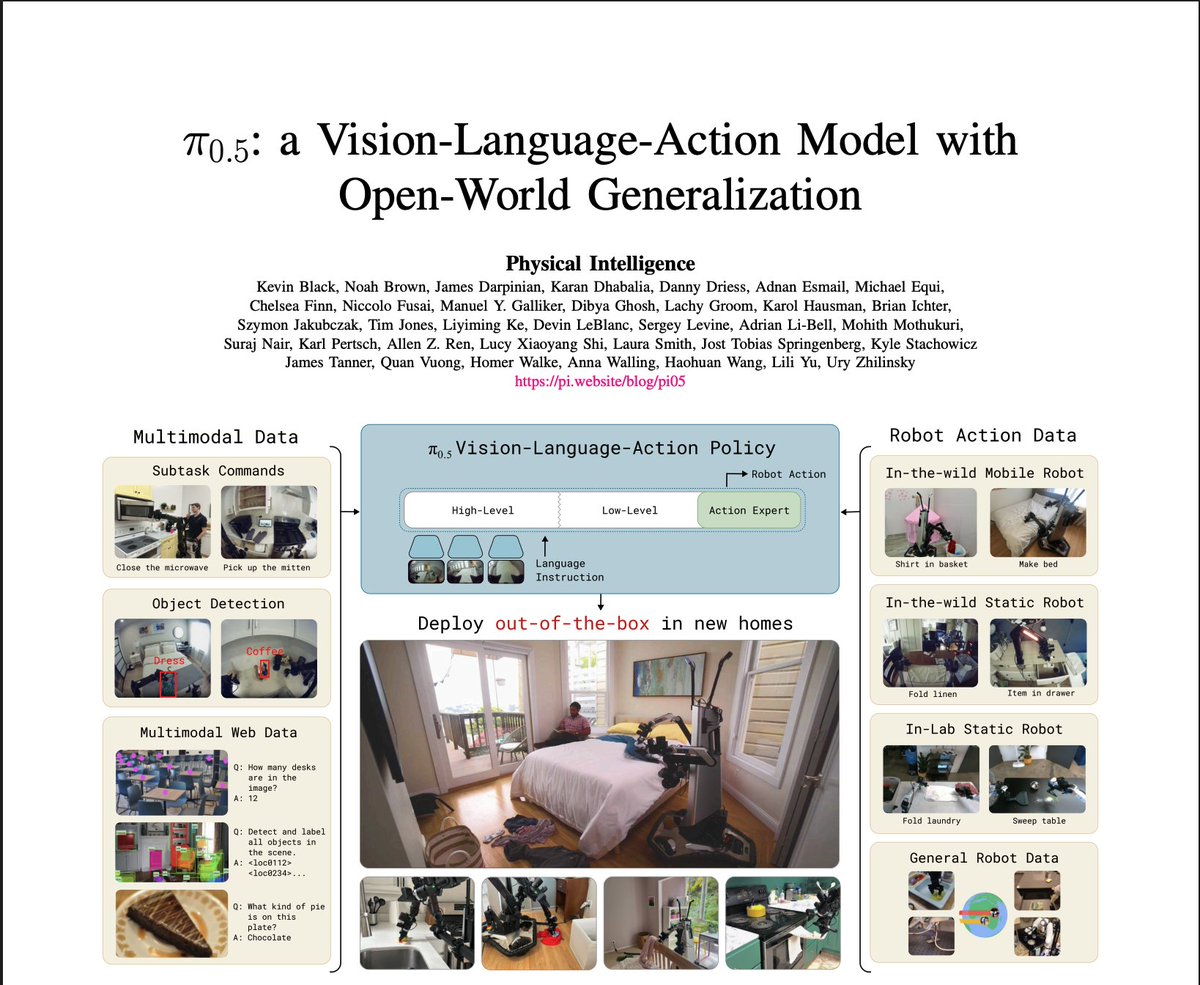

The best robotics model can't control the most accessible robot arm. I’m finetuning π0.5 on 5m frames of so101 to produce an open source generalist model that anyone can run at home.

I have filtered the HuggingFace community VLA dataset down to 5.4m frames of so100 data with 215 unique tasks. I’ve frozen the VLA weights to finetune only the action expert to teach π0.5 the so100 embodiment without any loss of generality.

This approach is an unproven experiment as I can find no similar attempt to teach π0.5 a new embodiment while preserving generality. Training is underway, I’ll be posting updates as we make progress
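The freezing scheme described above (VLA weights frozen, only the action expert trained) comes down to a name-based filter over the model's parameters. Below is a minimal sketch under stated assumptions: the `action_expert.` prefix, the parameter names, and the sizes are all hypothetical and are not taken from the actual π0.5 checkpoint or training code.

```python
from typing import Dict, Iterable, Tuple

# Hypothetical prefix: a real checkpoint may name its modules differently.
TRAINABLE_PREFIXES: Tuple[str, ...] = ("action_expert.",)


def split_trainable(
    param_names: Iterable[str],
    trainable_prefixes: Tuple[str, ...] = TRAINABLE_PREFIXES,
) -> Dict[str, bool]:
    """Map each parameter name to True (train it) or False (freeze it)."""
    prefixes = tuple(trainable_prefixes)
    return {name: name.startswith(prefixes) for name in param_names}


def trainable_fraction(param_sizes: Dict[str, int], mask: Dict[str, bool]) -> float:
    """Fraction of all parameter elements that would still receive gradients."""
    total = sum(param_sizes.values())
    trainable = sum(size for name, size in param_sizes.items() if mask[name])
    return trainable / total if total else 0.0


# Toy parameter table; names and sizes are made up for illustration.
param_sizes = {
    "vlm.vision.patch_embed.weight": 600,
    "vlm.language.layers.0.attn.weight": 300,
    "action_expert.layers.0.attn.weight": 80,
    "action_expert.out_proj.weight": 20,
}

mask = split_trainable(param_sizes)
print(mask)
print(trainable_fraction(param_sizes, mask))

# In PyTorch, a mask like this would typically be applied as:
#   for name, p in model.named_parameters():
#       p.requires_grad_(mask[name])
```

Printing the trainable fraction before launching a run is a cheap sanity check that the filter matched the intended modules and nothing else.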

@embedrapp As much as I hate the AI reply, I’m curious what is special about your IDE. Claude Code is weak in the low-level motor control department

@GPTJustin finetuning on 5m frames at home?? the absolute mad lad energy here. if you need an IDE that actually understands the microcontrollers driving those joints without crashing, check out embedr.app. following for the updates.

@VMises76153 That’s really interesting, what were the details of your approach or learnings?

@GPTJustin I've tried retraining it on a new embodiment that is even more radically different than that arm and I got nowhere, and it's probable that anyone else who tried got nowhere and didn't post anything about it.

But I really really really hope it works so I'll be watching

@TetraxYT Oh that’s really interesting, do you have a repo I could check out or any details?

@GPTJustin We fine-tuned pi0 using LoRA on 160k on a completely new embodiment. Forget generalization: it succeeds at a task end to end 1 out of 32 times.

@soft_servo I see some task specific fine tunes on HF, but wanted something that generalizes

@GPTJustin haven’t seen anyone successfully fine tune pi0.5 on the so101 (probably due to being much smaller, having only 5dof, and imprecision of the hardware)

@jackvial89 This is so cool! Looking forward to seeing the results

Justin Strong retweeted

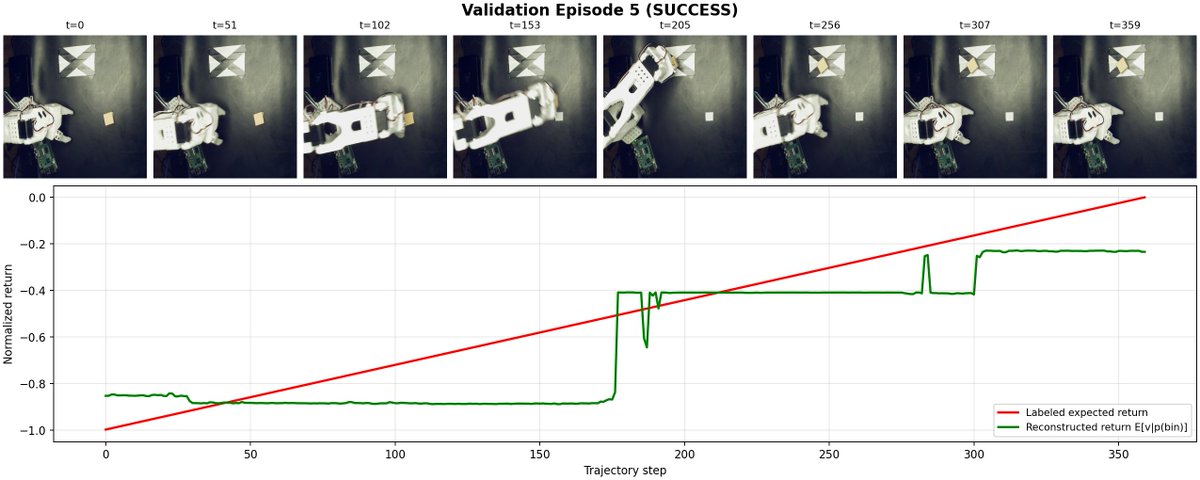

The π*0.6 RECAP value network training is starting to look good! It's only about 15 minutes into training. I spent most of last week studying the paper and making notes on the value network and advantage conditioning. I'm training on a 40 episode pick and place dataset for so101. 20 are successful, 20 I purposely failed trying to imitate some of the model failure modes I have seen.

Jack Vial @jackvial89

i'm working on an implementation of π*0.6 RECAP. going to start with a simplified version of the full pipeline
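For readers unfamiliar with value networks trained on a mix of successful and failed episodes, here is one generic way to turn per-episode success labels into per-step regression targets. This is a sketch of plain discounted Monte Carlo returns with a single terminal reward. It is an assumption for illustration only, not the formulation from the π*0.6 RECAP paper or from the implementation mentioned above, and the function names are hypothetical.

```python
from typing import List


def value_targets(num_steps: int, success: bool, gamma: float = 0.99) -> List[float]:
    """Discounted return-to-go for an episode with a single terminal reward.

    The terminal reward is 1.0 for a successful episode and 0.0 for a failed
    one, so the target at step t is gamma ** (num_steps - 1 - t) * reward.
    """
    reward = 1.0 if success else 0.0
    return [reward * gamma ** (num_steps - 1 - t) for t in range(num_steps)]


def advantage_labels(targets: List[float], predicted_values: List[float]) -> List[bool]:
    """Binary 'better than expected' flag: True where the target exceeds the prediction."""
    return [g > v for g, v in zip(targets, predicted_values)]


# gamma=0.5 is chosen only so the numbers are easy to read.
success_targets = value_targets(num_steps=4, success=True, gamma=0.5)
failure_targets = value_targets(num_steps=4, success=False, gamma=0.5)
print(success_targets)  # [0.125, 0.25, 0.5, 1.0]
print(failure_targets)  # [0.0, 0.0, 0.0, 0.0]
print(advantage_labels(success_targets, [0.2, 0.2, 0.2, 0.2]))  # [False, True, True, True]
```

Deliberately failed episodes matter under this kind of labeling because they are the only source of low targets. Without them, the network would only ever see targets that climb to 1.0.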
