Ankit Goyal

@imankitgoyal

Foundation Models for Robotics, Senior Research Scientist @ NVIDIA

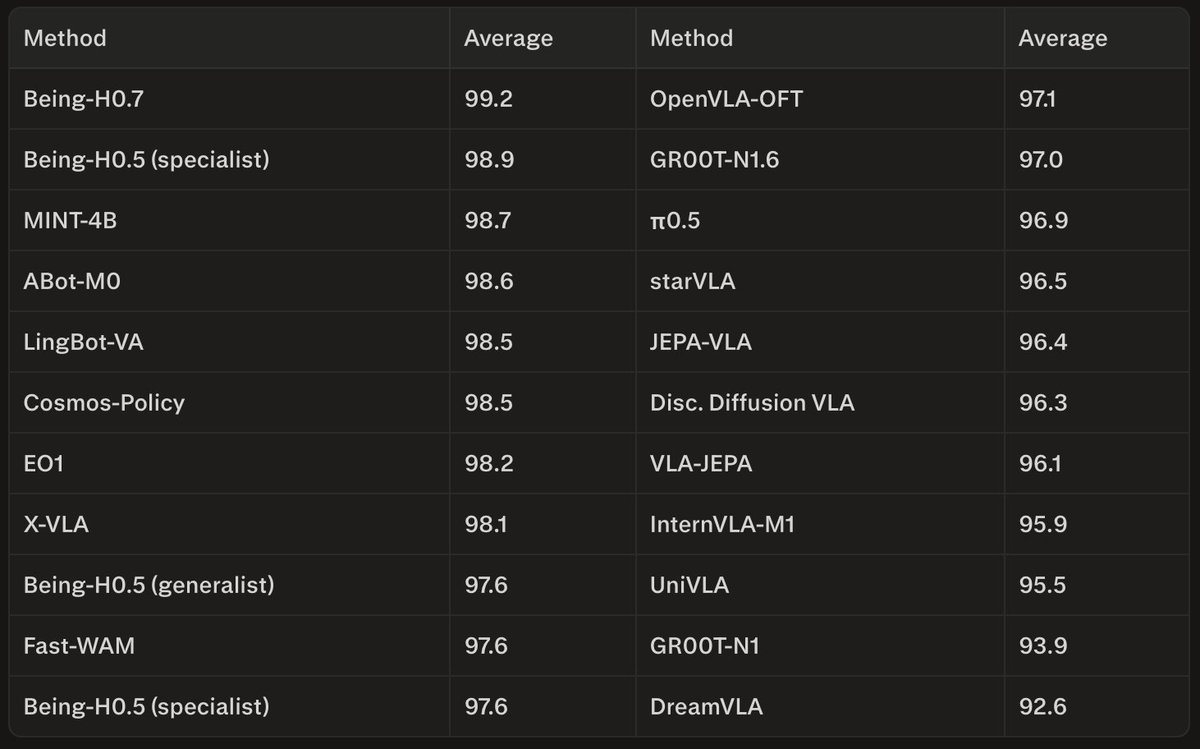

When every generalist robot model scores 95%+ on a benchmark, the numbers become meaningless. What if we built a photorealistic benchmark that never saturates and can generate new scenes and tasks with AI Workflows in minutes? We introduce RoboLab! 🧵(1/6)

What's the right architecture for a VLA? VLM + custom action heads (π₀)? VLM with special discrete action tokens (OpenVLA)? Custom design on top of the VLM (OpenVLA-OFT)? Or... VLM with ZERO modifications? Just predict action as text. The results will surprise you. VLA-0: Outperforms π₀, GR00T-N1, MolmoAct, SmolVLA. With ZERO changes to the VLM. 🧵⬇️

Are you interested in Vision-Language-Action (VLA) models? We had an excellent guest lecture today by Ankit Goyal @imankitgoyal from NVIDIA on VLAs and their role in robot manipulation. 🎥 Watch the recording here 👇 youtube.com/watch?v=IeNwXw… Slides: yuxng.github.io/Courses/CS6341…