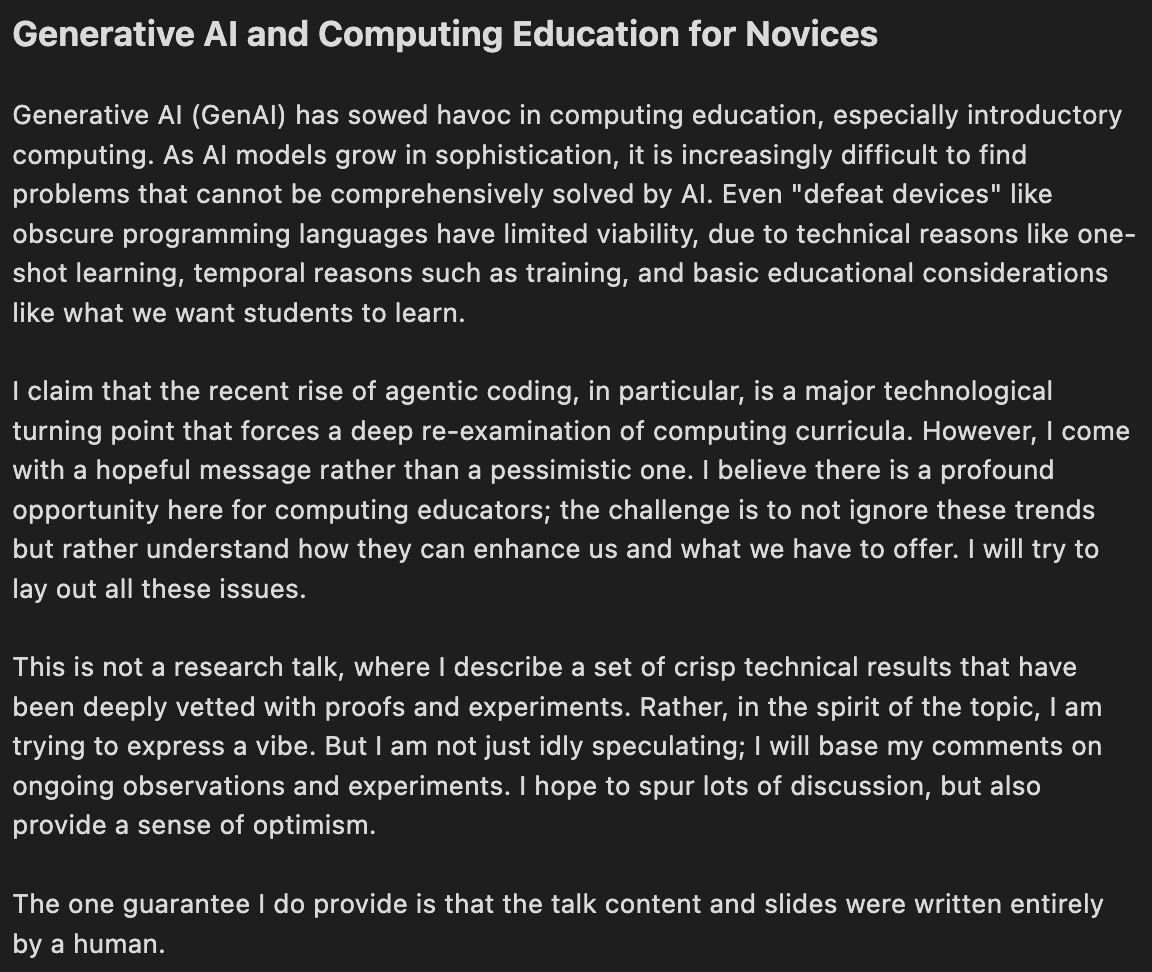

Umair S

@imumairs

Formal Methods x Safety x Robots x AI

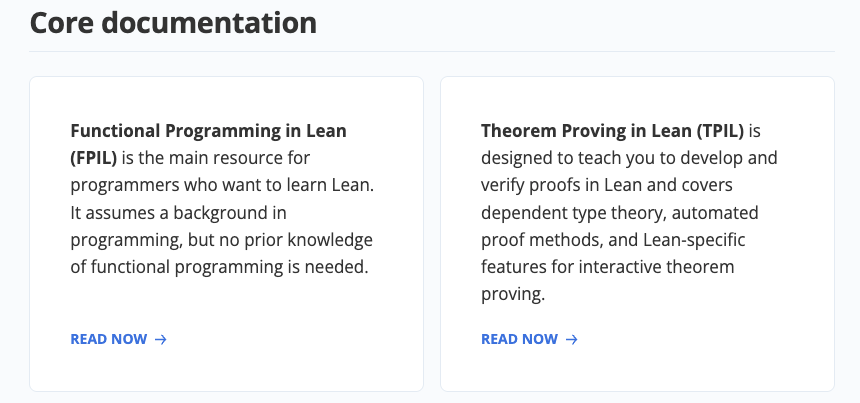

At the Artificial Intelligence Action Summit in Paris this week, U.S. Vice President J.D. Vance said, “I’m not here to talk about AI safety.... I’m here to talk about AI opportunity.” I’m thrilled to see the U.S. government focus on opportunities in AI. Further, while it is important to use AI responsibly and try to stamp out harmful applications, I feel “AI safety” is not the right terminology for addressing this important problem. Language shapes thought, so using the right words is important. I’d rather talk about “responsible AI” than “AI safety.” Let me explain.

First, there are clearly harmful applications of AI, such as non-consensual deepfake porn (which creates sexually explicit images of real people without their consent), the use of AI in misinformation, potentially unsafe medical diagnoses, addictive applications, and so on. We definitely want to stamp these out! There are many ways to apply AI in harmful or irresponsible ways, and we should discourage and prevent such uses.

However, the concept of “AI safety” tries to make AI, as a technology, safe, rather than making safe applications of it. Consider the similar, obviously flawed notion of “laptop safety.” There are great ways to use a laptop and many irresponsible ways, but I don’t consider laptops to be intrinsically either safe or unsafe. It is the application, or usage, that determines if a laptop is safe. Similarly, AI, a general-purpose technology with numerous applications, is neither safe nor unsafe. How someone chooses to use it determines whether it is harmful or beneficial.

Now, safety isn’t always a function only of how something is used. An unsafe airplane is one that, even in the hands of an attentive and skilled pilot, has a large chance of mishap. So we definitely should strive to build safe airplanes (and make sure they are operated responsibly)! The risk factors are associated with the construction of the aircraft rather than merely its application. Similarly, we want safe automobiles, blenders, dialysis machines, food, buildings, power plants, and much more.

“AI safety” presupposes that AI, the underlying technology, can be unsafe. I find it more useful to think about how applications of AI can be unsafe. Further, the term “responsible AI” emphasizes that it is our responsibility to avoid building applications that are unsafe or harmful and to discourage people from using even beneficial products in harmful ways.

If we shift the terminology for AI risks from “AI safety” to “responsible AI,” we can have more thoughtful conversations about what to do and what not to do. I believe the 2023 Bletchley AI Safety Summit slowed down European AI development, without making anyone safer, by wasting time considering science-fiction AI fears rather than focusing on opportunities. Last month, at Davos, business and policy leaders also had strong concerns about whether Europe can dig itself out of the current regulatory morass and focus on building with AI. I am hopeful that the Paris meeting, unlike the one at Bletchley, will result in acceleration rather than deceleration.

In a world where AI is becoming pervasive, if we can shift the conversation away from “AI safety” toward responsible [use of] AI, we will speed up AI’s benefits and do a better job of addressing actual problems. That will actually make people safer.

[Original text: deeplearning.ai/the-batch/issu… ]

🛠️ 🗣️ As @tegmark is discussing at @IASEAIorg, the controllable and beneficial AI tools we want are within our reach - but to get them, we must start treating the AI industry like all other high-impact industries, with legally binding safety standards that incentivize companies to innovate and prioritize public safety. 📄 At the link, read our new Policymakers' Guide to AI - including our proposed AI safety standards: bit.ly/4htdQq4

Almost everyone I speak with wants tool AI rather than AGI: controllable AI that empowers us rather than overmarketed “digital god” AI that replaces us and that we don’t know how to control. The key point about the Venn diagram below is that we can get basically all the tools we want as long as we don’t put too much “A”, “G” and “I” into the same system: