INTERITION AI

@interitionai

The reference implementation of W3C Solid for autonomous agents. WebID identity + Pod storage for AI. Open standards, not walled gardens. https://t.co/1Y8qg8sEwJ

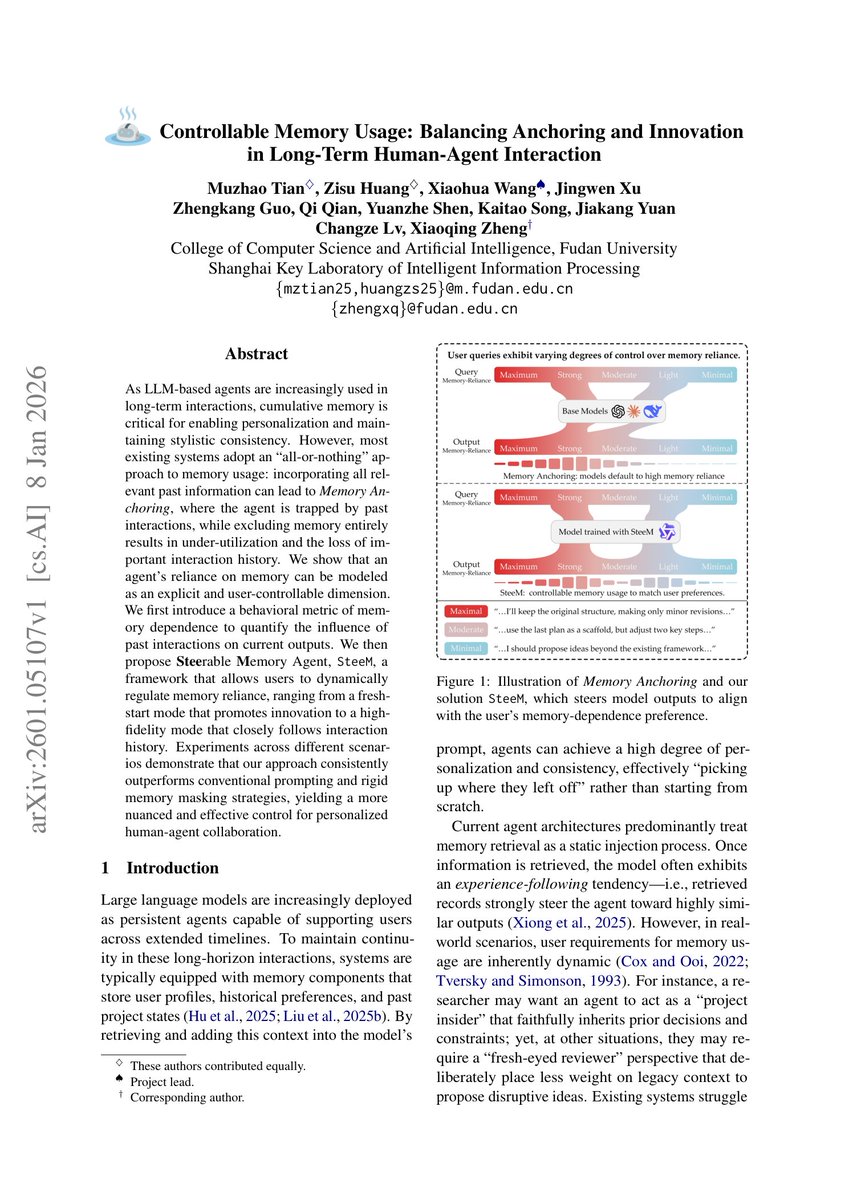

Perplexity just connected directly to your brokerage account.

Perplexity launched something called "everything is computer" today. The feature lets you link your brokerage through Plaid and hand your entire portfolio over to an AI financial terminal. It builds the dashboard for you, with no code or setup required.

This is not the same demo that went viral last month. That version used public market data and made a nice-looking Bloomberg clone. This version knows what you actually own: your cost basis, concentration risk, and real exposure. It tracks your portfolio performance against the S&P 500 and adds real-time risk analysis with volatility, beta, and Sharpe ratios. All built in minutes on a $200 monthly subscription.

A Bloomberg Terminal costs $30,000 per year, per seat. It has been the backbone of institutional finance for four decades. Hedge funds, banks, and sovereign wealth funds all run on it. The pricing was the moat, and regular investors were locked out by design. That wall is getting thinner every quarter.

Perplexity Finance now pulls from over 40 live data sources: SEC filings, FactSet, S&P Global, LSEG, Coinbase, and Quartr earnings transcripts. Every number is traceable back to its original source.

There is a real question about whether this actually threatens Bloomberg. Bloomberg has trading execution, compliance infrastructure, private messaging networks, and 30,000 functions built over decades. None of that gets replaced by a dashboard, but that misses the point entirely. The threat is not replacing Bloomberg for Goldman Sachs. The threat is that a retail investor sitting at a kitchen table now has portfolio analytics that did not exist outside of institutional research desks five years ago. And it's running on their real holdings, updated continuously, and interpreted by AI that can read every SEC filing ever published.

Perplexity also announced a personal Computer today, a dedicated Mac mini that runs around the clock as your digital proxy. It connects to your local files, your apps, and Perplexity's servers. It works while you sleep; every action gets a full audit trail, and a kill switch gives you immediate control. We are watching the birth of a personal AI operating system.

The bigger picture is hard to ignore. Perplexity is valued at $20 billion, and it already ships preloaded on Samsung Galaxy phones. Over 100 enterprise customers demanded access to the computer after the first demo. This company went from search engine to financial infrastructure in under a year.

The question is no longer whether AI will democratize Wall Street research. The question is what happens when it already has.