Pinned Tweet

Interlap

166 posts

@interlap01

Execution layer for AI agents to control iOS & Android devices. Real devices. Emulators. Simulators. https://t.co/aHaaXPszHG

Joined January 2026

61 Following, 128 Followers

There is some overlap on device/simulator control, but I see them as different layers.

XcodeBuildMCP is more on the Xcode/toolchain side: build, run, test, debug, logs.

MobAI is more on the app automation side: UI context for agents, .mob flows, reusable workflows, result checking, self-healing, etc. You can use it while building the app too, but the focus is higher-level app interaction.

Also MobAI is not tied to Xcode/macOS. It can automate real iOS devices from Windows/Linux too, and supports Android as well.

So the difference is more about what is built on top of the device control layer.

@interlap01 @oliviscusAI What's the difference between this tool and XcodeBuildMCP?

I don’t know if there is anything similar for native Windows or Unity.

In general, “UI visibility” for agents is still not universal. There are two main ways to do it:

1. Use screenshots and let the LLM understand the UI visually.

2. Use the accessibility tree.

Screenshots work almost everywhere, but they use more tokens and can be unreliable for exact element coordinates.

Accessibility trees are more structured and usually better for automation, but many apps, especially custom-rendered ones, don’t expose good accessibility data.

So the best setup usually combines both.

For Unity apps, screenshots may be the only realistic option. Because of that, I’m not sure it’s worth building or using a dedicated tool for them right now.
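The trade-off between the two approaches can be sketched as a small selection rule. This is only an illustration and does not describe how MobAI or any other tool works: the `Node` type, the `choose_context` function, and the 0.5 threshold are all assumptions made up for the example. The idea is to prefer the accessibility tree when most elements carry labels, add a screenshot when the tree is sparse, and fall back to the screenshot alone when no tree is exposed (as may happen with custom-rendered or Unity apps).

```python
from dataclasses import dataclass
from typing import List, Tuple


@dataclass
class Node:
    """Hypothetical accessibility node: a label plus on-screen bounds."""
    label: str                         # may be empty for unlabeled elements
    bounds: Tuple[int, int, int, int]  # (x, y, width, height)


def labeled_fraction(nodes: List[Node]) -> float:
    """Share of nodes that carry a non-empty label."""
    if not nodes:
        return 0.0
    return sum(1 for n in nodes if n.label.strip()) / len(nodes)


def choose_context(nodes: List[Node], min_labeled: float = 0.5) -> str:
    """Pick which UI context to send to the agent.

    The threshold is an arbitrary assumption, not a measured value.
    """
    if not nodes:
        return "screenshot"            # nothing exposed: vision only
    if labeled_fraction(nodes) >= min_labeled:
        return "accessibility_tree"    # structured data is good enough
    return "both"                      # sparse tree: add a screenshot
```

A real setup would likely weigh more signals than label coverage, such as whether bounds are plausible or whether the tree changes after an action.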

Good to know — not deep in iOS land so this is helpful. Is there a similar approach for Windows native (WPF/WinUI) or Unity? The visibility problem feels universal: Codex and Claude Code both struggle when the UI layer isn't web-based. Agent-driven E2E on opaque render pipelines is still painful.

Interlap retweeted

serve-sim is mostly infra around iOS Simulator remote access/control: video streaming, gestures, logs, media injection, browser UI, etc.

MobAI is built around the agent workflow: give an AI enough app context to understand the screen, decide what to do, run multi-step flows, verify the result, and much more.

Also not limited to iOS Simulator. It works with real devices too, supports Android, and runs on macOS, Windows, and Linux.

Different problem.

Every mobile team is about to realize how broken app testing has been this whole time.

Someone connected Claude Code to an iPhone simulator and told it:

“test everything.”

What happened next is wild:

→ it opened screens on its own

→ navigated through flows

→ read accessibility trees + screenshots

→ checked debug logs in real time

→ found actual bugs humans missed

→ generated a structured bug report at the end

No hardcoded coordinates.

No brittle XCUITest scripts.

No endless maintenance hell.

Just one prompt.

We’re watching the shift from “writing test cases” to simply describing intent and letting AI explore the product like a real user.

QA is starting to look less like automation engineering

and more like autonomous agents running infinite product simulations.

This is one of those demos that quietly changes how software gets built.

Debugging is actually solved too. At least with MobAI, an agent can run a debug build and capture app logs directly, like the logs you see when starting the app from Xcode.

It also has an agent-friendly debugger with breakpoints, code evaluation, and more. And of course, it can capture filtered system logs too.

@HowToAI_ The nav and screen traversal is solved. The hard part is debug log interpretation — iOS logs are noisy enough that hallucinated root causes are a real failure mode. Curious what the structured bug report looks like when the agent hits a flaky test vs. a genuine regression.

@geren8te @HowToAI_ With MobAI, I went further and created github.com/MobAI-App/mobi…. It's like browser-harness from browser-use, but for mobile apps.

It lets an agent create and optimize skills for your specific use case.

@HowToAI_ walking the app is the easy part. the useful layer is seeing which test skill keeps failing across sessions and turning that into the next fix. that's basically what i'm building at skillfully.sh

@itshanrw Would be nice to use it with a ChatGPT subscription instead of an API key github.com/MobAI-App/chat…

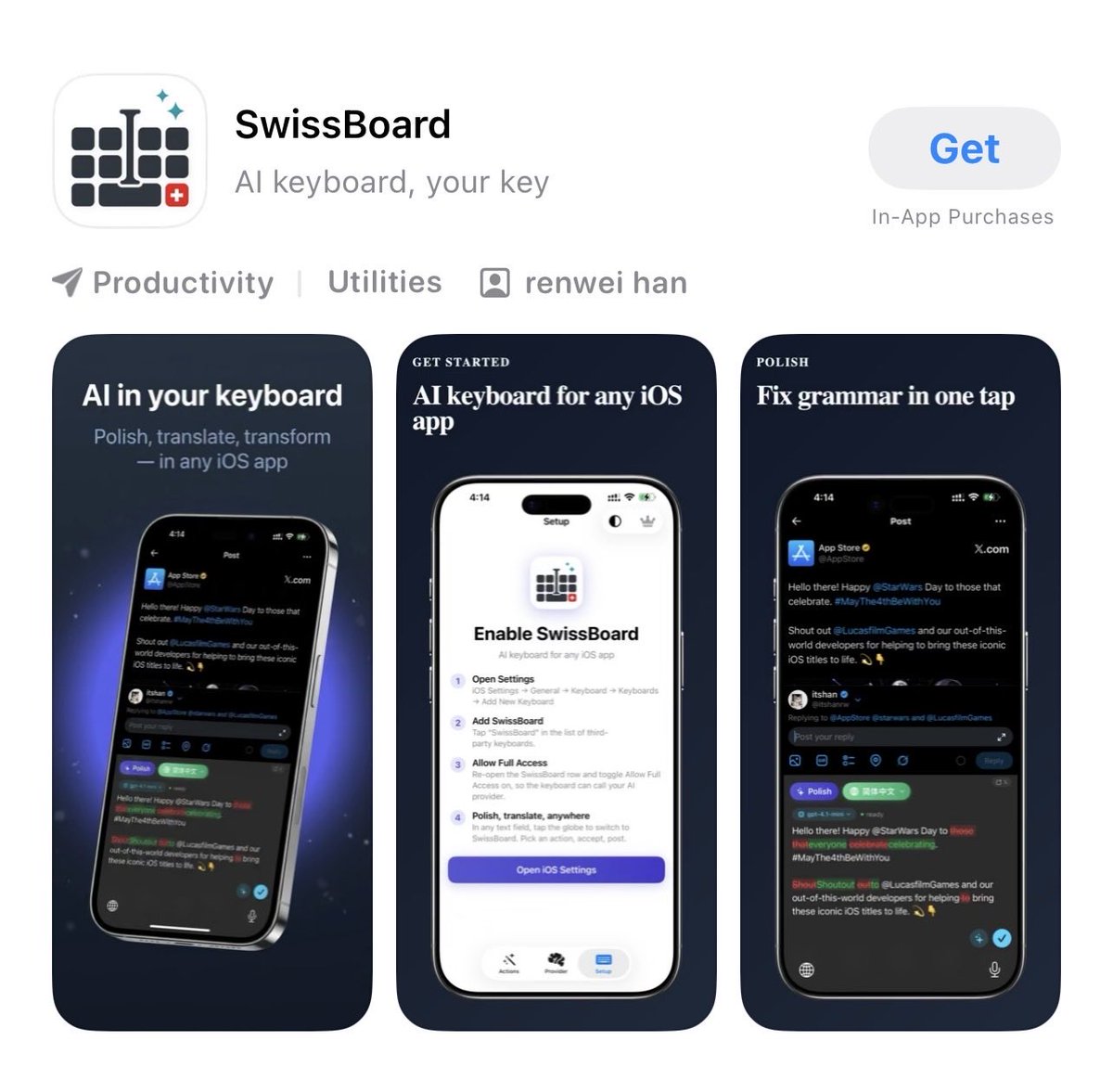

We just launched SwissBoard on the App Store!

Your AI keyboard for iOS that polishes grammar & translates right inside any app — Mail, Messages, Notes, Slack, and more.

Bring your own OpenAI-compatible key. Your drafts never leave your device or go through our servers. Privacy by design.

Try it now and experience AI-powered writing with full control!

For more info, check the first comment.

@KevinBelfort_30 kinda interested because I keep seeing apps like these with no reviews but making good money

how does it make money with only 4 reviews?

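@mob_ai_app Running agents on a simulator is certainly convenient, but environment maintenance and state synchronization are too much of a hassle. OpenGUI operates the app directly on a real device, which avoids the simulator's pitfalls and is much more stable for long-running tasks. Its open-source Plan Supervisor architecture is worth a look.
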
Create reusable AI skills for almost any app on your phone.

Mobile Harness lets agents learn a workflow inside an app, save it as a skill, and run it again later.

Like a small app-specific API, but built from real UI steps.

github.com/MobAI-App/mobi…
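To make the "small app-specific API built from real UI steps" idea concrete, here is a minimal sketch of what a saved skill could look like as data. It is not Mobile Harness's actual format or API: `Step`, `Skill`, and the `perform` callback are hypothetical names invented for this example. A skill is modeled as an ordered list of UI steps that is replayed through whatever function actually drives the device.

```python
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Step:
    """One recorded UI action (hypothetical shape)."""
    action: str      # e.g. "tap" or "type"
    target: str      # label of the element to act on
    text: str = ""   # text to enter, for "type" actions


@dataclass
class Skill:
    """A named, replayable sequence of UI steps."""
    name: str
    steps: List[Step] = field(default_factory=list)

    def run(self, perform: Callable[[Step], bool]) -> bool:
        """Replay each step in order; stop at the first failure."""
        for step in self.steps:
            if not perform(step):
                return False
        return True
```

Usage, with a stand-in driver that only records what it was asked to do:

```python
log = []
skill = Skill("send_message", [Step("tap", "Compose"), Step("type", "Message", "hi")])
ok = skill.run(lambda s: log.append(s.action) is None)
```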

Open-sourced ChatGPTAuthKit: make API calls from iOS apps using the user’s ChatGPT account.

No API key needed. The user signs in with ChatGPT, and your app can use their quota for AI features.

Works with free accounts too.

github.com/mobai-app/chat…
