Jack

416 posts

@itsjack

Puts a lot of effort into being lazy 🤖 Making https://t.co/9WY5jNIPMe 🎙️ Building products @MaketheProduct | ex-Product @ Vinted, The Next Web, Just Eat 🇪🇺

Introducing SubQ - a major breakthrough in LLM intelligence.

It is the first model built on a fully sub-quadratic sparse-attention architecture (SSA), and the first frontier model with a 12 million token context window, which is:

- 52x faster than FlashAttention at 1MM tokens
- Less than 5% the cost of Opus

Transformer-based LLMs waste compute by processing every possible relationship between words (standard attention). Only a small fraction actually matter. @subquadratic finds and focuses only on the ones that do.

That's nearly 1,000x less compute and a new way for LLMs to scale.

Introducing PostHog Code, the product editor that:

- Understands your product
- Identifies usage patterns
- Triages bugs and errors for you
- Creates PRs to fix them
- Continuously monitors and improves your product

Join the waitlist: posthog.com/code

If it’s a dream, don’t wake me up.

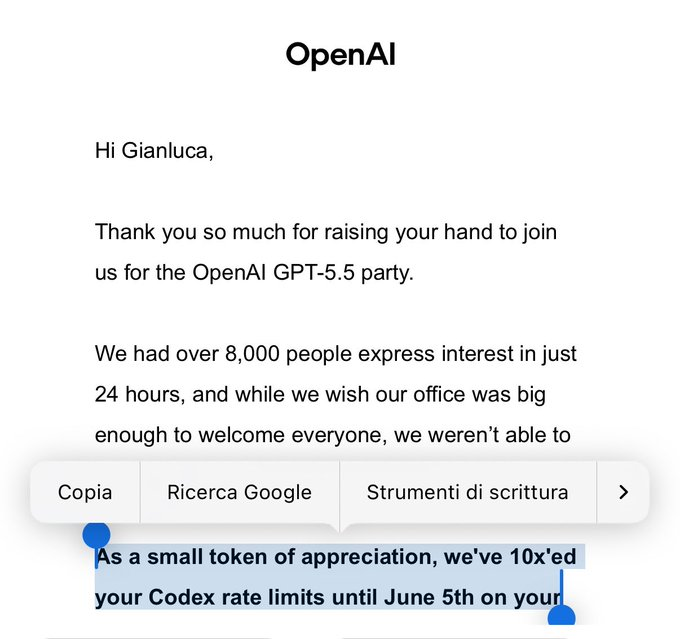

Codex is not bad at UI. It's just a layer 8 problem. GPT-5.5 literally just cooked me a very nice landing page, combined with GPT-image-2. OpenAI has some UI issues, yes. But if you know how to work with it, you are already getting very good results. @sama great work. Congrats to the whole team 🤝