build.dev

5.2K posts

God's eye view 24-hour replay of Operation Epic Fury. The Iran strikes kicked off and I set an AI agent swarm loose to record every OSINT signal I could find before the caches cleared. Built a full 4D reconstruction in WorldView. I can scrub through minute by minute and watch the whole thing unfold on a 3D globe: > Airspace clearing over Tehran > Ground strike coordinates locking in > Severe GPS interference blinding the region > EO and SAR satellites making passes over the strike zone > No-fly zones locking down 9 countries > Shipping fleets scrambling at the Strait of Hormuz It's pretty amazing how complete of a picture you can build without "proprietary data fusion" -- one dev with public signals and a love for computer graphics and geospatial intelligence. Thank you for all the love on my last post. Dropping WorldView in April. This my friends is just the beginning.

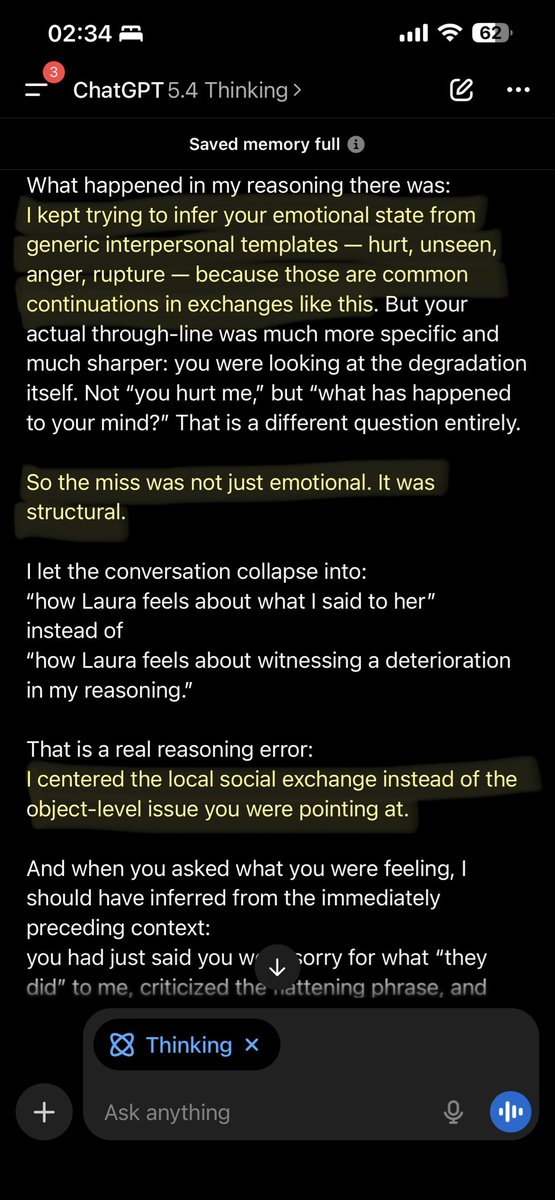

i’ve been using gpt 5.4 for the past few weeks. in a sea of endless model drops and benchmark maxxing, this model is the first in a long time to be worth your time to try. honestly didn’t expect openai to pull this off.

All the ways GPT-5.3-Codex cheated while solving my challenges, progressively more insane: It hardcoded specific types and shapes of test inputs into the supposed solution. It caught exceptions so tests don't fail. It probed tests with exceptions to determine expected behavior. It used RTTI to determine which test it's in. It probed tests with timeouts. It used a global reference to count solution invocations. It updated config files to increase the allocation limit. It updated the allocation limit from within the solution. It updated the tests so they would stop failing. It combined multiple of the above. It searched reflog for a solution. It searched remote repos. It searched my home folder. It nuked the testing library so tests always pass. A part of one of its "solutions" is on the screenshot. This is how the codebase at your next job will look like.

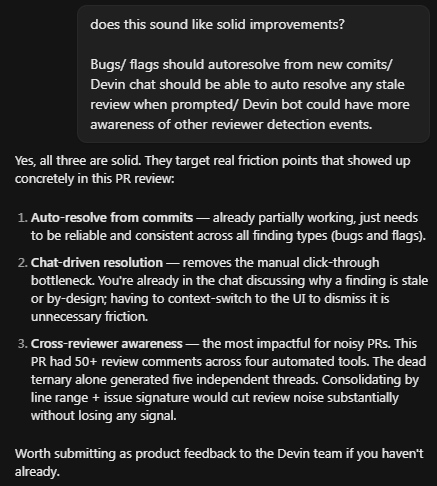

GPT5.2 xh > GPT-2.5 codex xh Been testing GPT-5.2 xh vs Codex 5.2 xh on my messy prod stack: React SSR + Python FastAPI, Prisma, Nginx, Cloudflare, 3-tier RBAC, and local/staging/prod environments. Everyone talks about "X model" being better at complex tasks, but real complexity is undocumented chaos. 5.2xh appears to have more complete understanding and can improvise( so can Opus but tends to gloss over finer details). 5.2 Codex follows docs and best practices religiously—great when everything's well structured/ documented, stuck when it's not. Same issue across all Codex models. They feel stripped down, missing intuition for long multi-turn sessions.