Jack Cook

@jackcookjack

phd student @miteecs | systems for ml

We trained models with MXFP4-quantized attention, but it turns out this can break causal modeling. Our latest post explains why this happens and how to fix it. matx.com/research/leaky…

Craft and beauty are finally coming to internal docs. Today we're previewing Falconer: the single source of truth for company knowledge. We're still working through our waitlist, but are now excited to take on more demo requests.

Engineers spend way too much time answering repeated questions and searching for outdated information. We experienced these problems during hypergrowth at Uber and Stripe and thought, "What if we applied external docs treatment to our internal docs?" That simple approach achieved pretty remarkable results for productivity. And now Falconer is building the AI-native version of those platforms for everyone.

With Falconer, your internal docs are:
- In one place
- Always up to date
- Easy to find
- Synced with your data

Coding agents allow us to generate more code faster than ever...but that code is poorly understood and out of step with the company's crown jewels: business context and tribal knowledge. Falconer's mission is to connect teams and agents with a shared memory system, always available and always accurate. We're working on it, and will have much more to share soon.

i liked this paper already, but i like it even more now that i know a bunch of the work was done on @modal <3

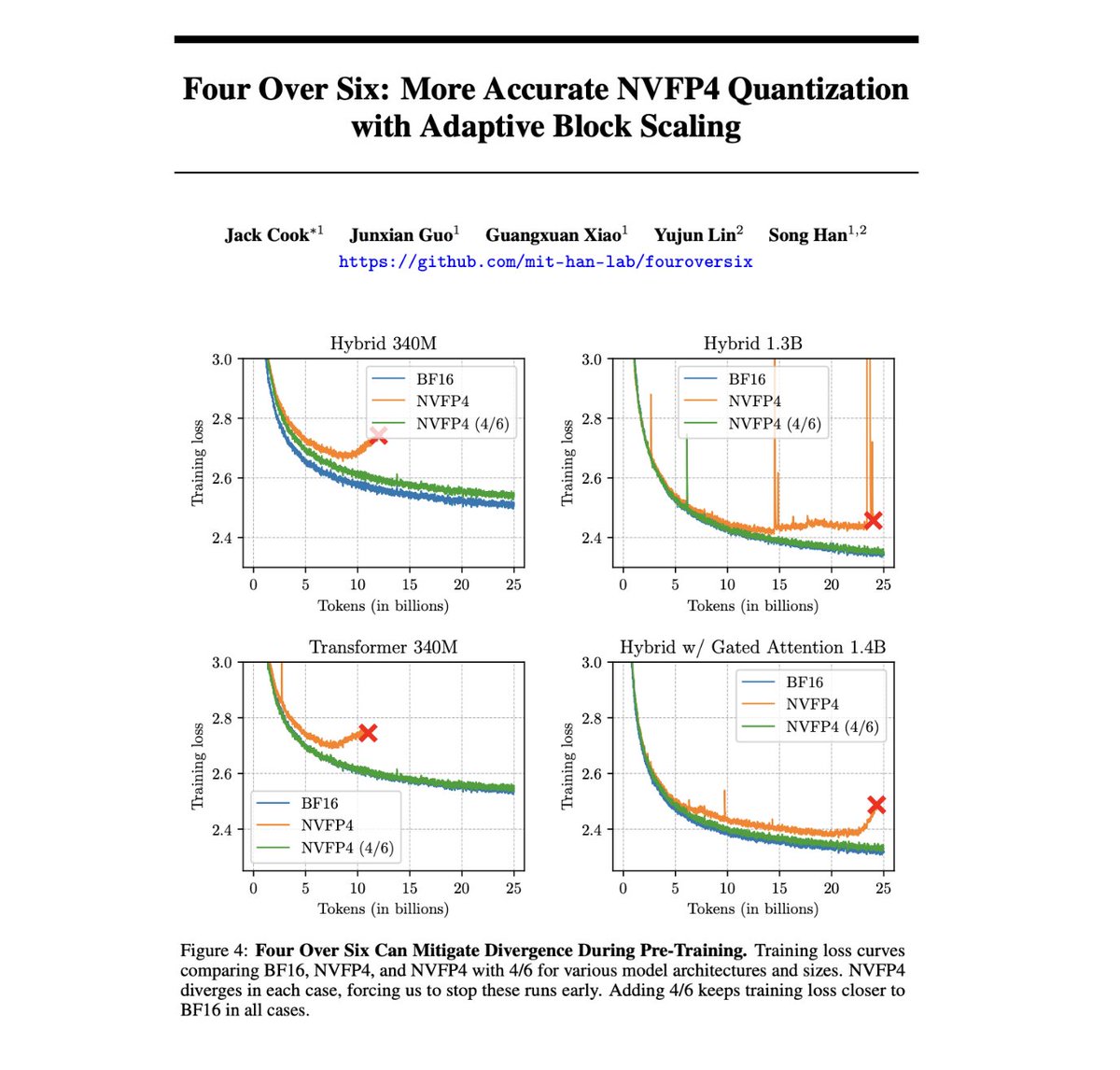

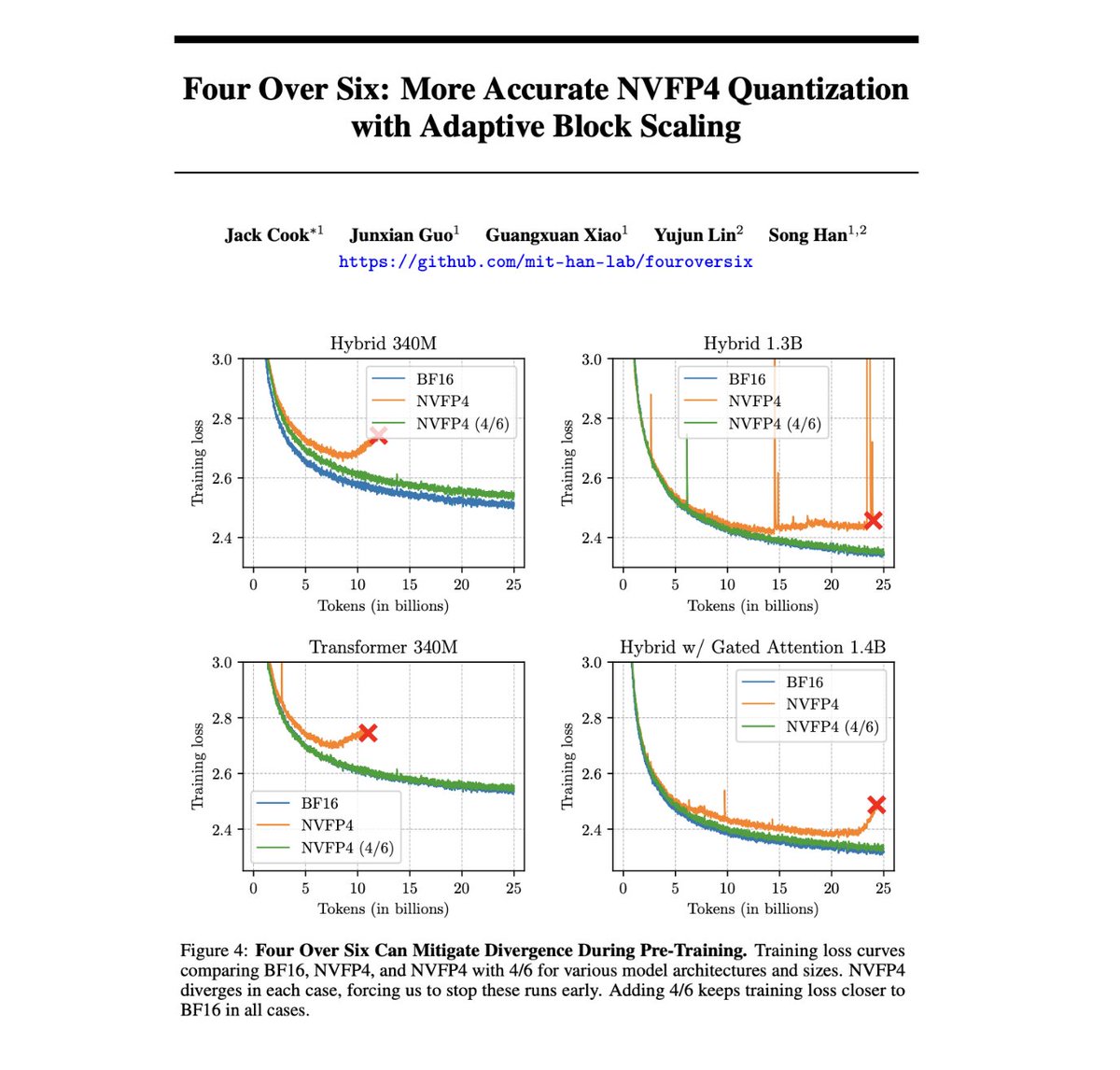

Training LLMs with NVFP4 is hard because FP4 has so few values that I can fit them all in this post: ±{0, 0.5, 1, 1.5, 2, 3, 4, 6}. But what if I told you that reducing this range even further could actually unlock better training + quantization performance? Introducing Four Over Six, a new method for improving the accuracy of NVFP4 quantization with Adaptive Block Scaling. 🧵
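
For anyone wondering where those eight magnitudes come from, here is a minimal sketch that decodes all 16 four-bit codes. It assumes the usual E2M1 layout (1 sign bit, 2 exponent bits, 1 mantissa bit, exponent bias 1). The names `decode_e2m1` and `FP4_MAGNITUDES` are made up for illustration and are not taken from the post or from any library, and this only covers the element format, not the block scaling that NVFP4 adds on top.

```python
def decode_e2m1(code: int) -> float:
    """Decode a 4-bit code, assuming E2M1: 1 sign, 2 exponent, 1 mantissa bit, bias 1."""
    sign = -1.0 if (code >> 3) & 1 else 1.0
    exp = (code >> 1) & 0b11
    man = code & 1
    if exp == 0:
        # Subnormal: no implicit leading 1, so the magnitude is 0 or 0.5.
        magnitude = man * 0.5
    else:
        # Normal: implicit leading 1, one mantissa bit worth 0.5.
        magnitude = (1.0 + 0.5 * man) * 2.0 ** (exp - 1)
    return sign * magnitude


# Collect the distinct magnitudes across all 16 codes.
FP4_MAGNITUDES = sorted({abs(decode_e2m1(c)) for c in range(16)})
print(FP4_MAGNITUDES)
```

The gaps between neighbors grow from 0.5 near zero to 2 between 4 and 6, so the grid is coarsest at the top of the range.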