Jie-Ying Lee 李杰穎

@jayinnn

CS @ NYCU | SWE @ Google

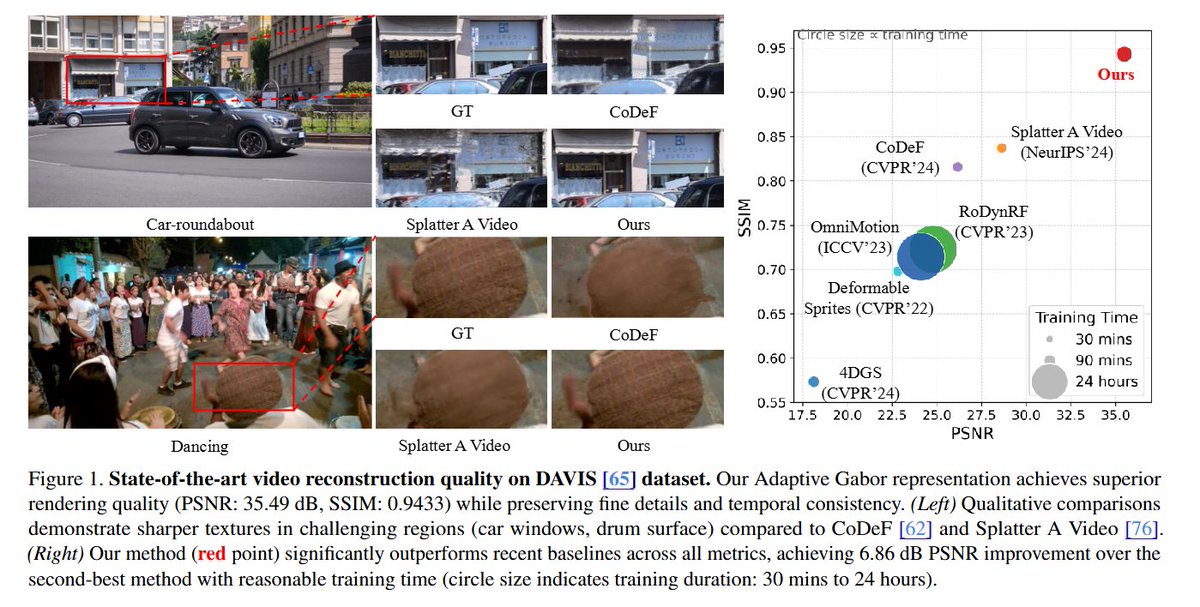

🛰️ Excited to share Skyfall-GS - the FIRST method to create real-time navigable 3D cities from satellite imagery alone!

We transform multi-view satellite images into immersive 3D scenes you can freely fly through! 🚁✨

🌐 Project Page: skyfall-gs.jayinnn.dev

1/5