Daniel Lichy

@daniel_lichy

PhD student. Computer Vision/Machine Learning

Proud advisor moment 😊 Congrats @Songwei_Ge for winning the Larry S. Davis Doctoral Dissertation Award @umdcs! Songwei is now cooking as a research scientist at @reve. Looking forward to amazing work!

🛰️ Excited to share Skyfall-GS - the FIRST method to create real-time navigable 3D cities from satellite imagery alone! We transform multi-view satellite images into immersive 3D scenes you can freely fly through! 🚁✨ 🌐 Project Page: skyfall-gs.jayinnn.dev 1/5

🎉 We’re excited to announce our paper Depth Any Camera (DAC), accepted to CVPR 2025! 🚀 Along with this, we have a few exciting updates! To support NeRF & Gaussian Splatting on fisheye inputs, we now provide DAC’s depth estimation results for #ZipNeRF on fisheye images. 📥 Download depth maps: 🔗 yuliangguo.github.io/depth-any-came… Methods like #SMERF, #FisheyeGS, & #EVER can leverage this fisheye depth prior! #CVPR2025 #NeRF #GaussianSplatting #3DReconstruction #ComputerVision

🎉 Thrilled to introduce nvTorchCam, our new #PyTorch library designed to support the development of models using camera geometry like plane-sweep volumes (PSV) and related concepts like sphere-sweep volumes or epipolar attention, in a camera model-agnostic way! 🚀 🔗 Code: github.com/NVlabs/nvTorch… (1/6)

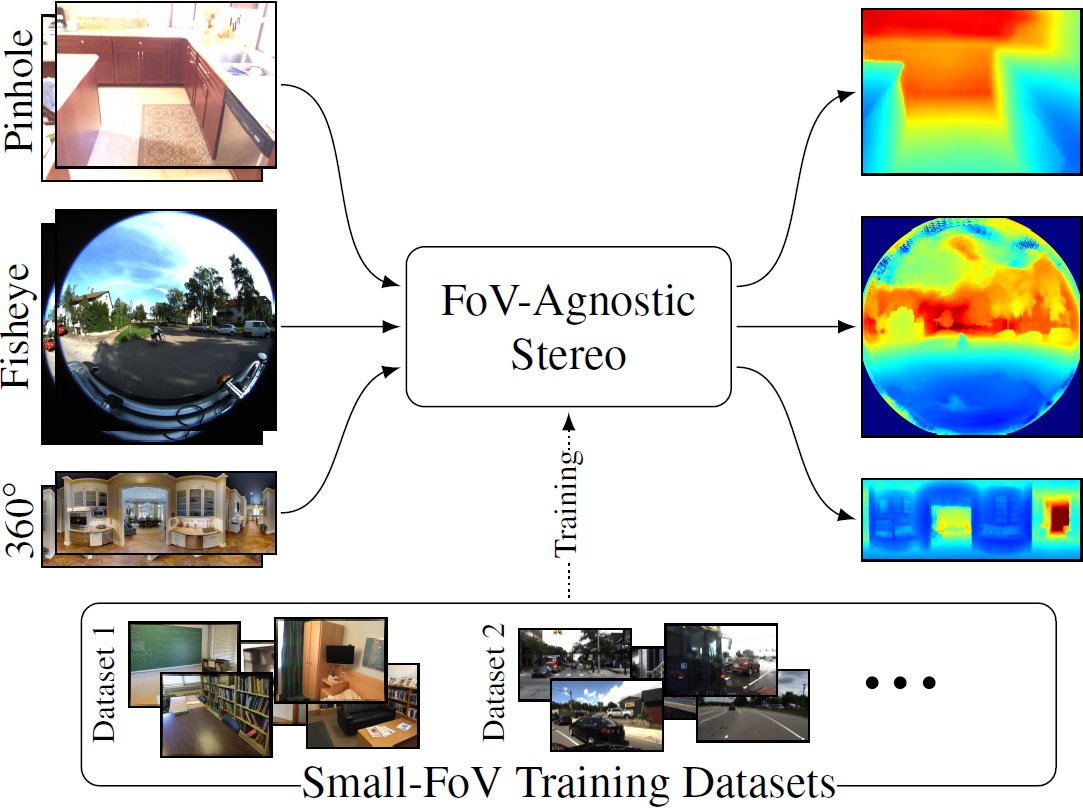

🚀 Excited to release the code from our #3DV2024 oral presentation: FoVA-Depth: Field-of-View Agnostic Depth Estimation for cross-dataset generalization! 📊 🔗 Project details: research.nvidia.com/labs/lpr/fova-… 🔗 Code: github.com/NVlabs/fova-de… (1/8)