Jędrzej Maczan

@jedmaczan

Low level deep learning: torch-webgpu / tiny-vllm / pytorch compiler / @pagedout_zine https://t.co/zM6gQ2L7VL cover art by https://t.co/rs0nMCNnN6

I built a tiny-vllm in C++ and CUDA:

- paged attention
- continuous batching
- educational
- 100% human-written™

And now I'm writing a course where you will build your own vLLM yourself. It's still a work in progress; I'll finish by the end of April. All for free ofc, just a GitHub repo.

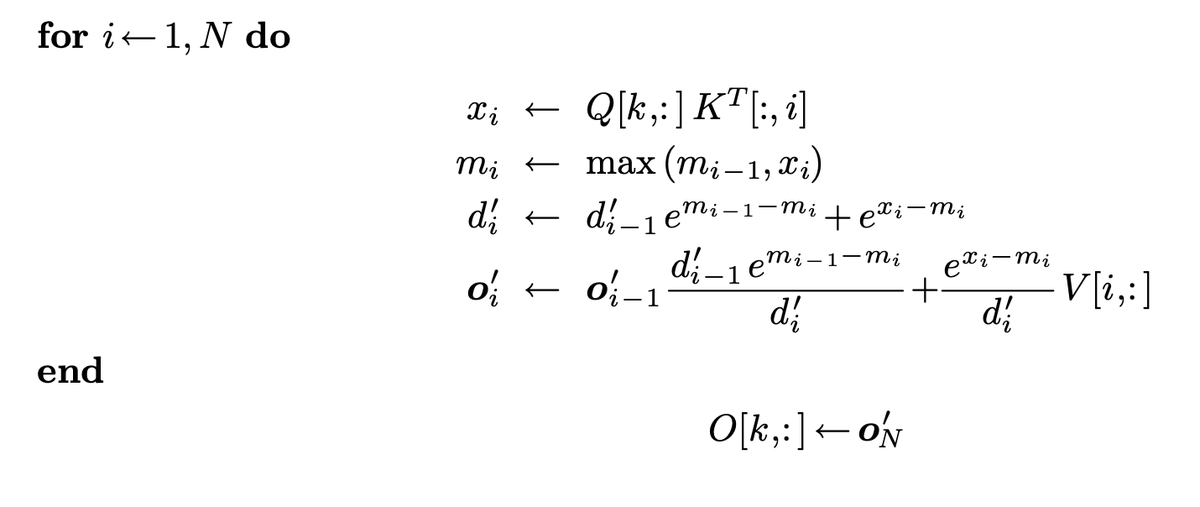

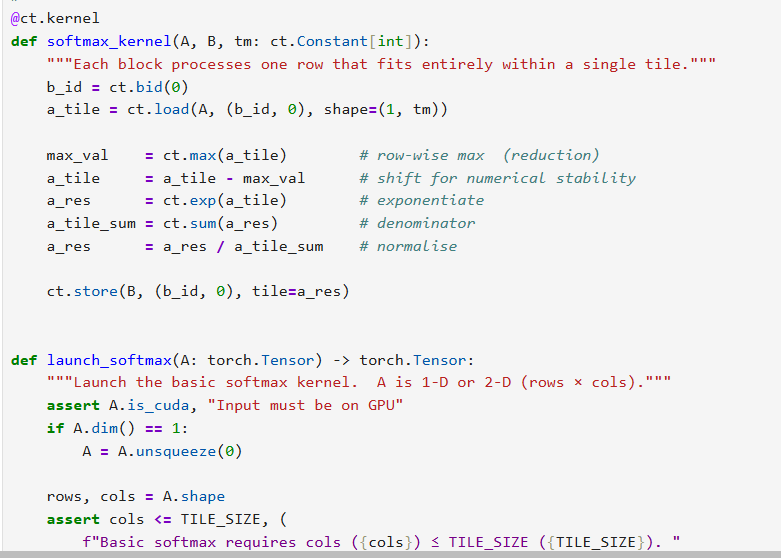
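
For readers who haven't met paged attention before, here is a rough sketch of the bookkeeping idea behind it: the KV cache is split into fixed-size physical blocks, and each sequence keeps a block table mapping its logical token positions to those blocks. This is not code from tiny-vllm or vLLM; the class `PagedKVCache` and its methods are hypothetical names made up for illustration, and it is plain Python rather than C++/CUDA to keep it short.

```python
class PagedKVCache:
    """Toy block-table allocator (hypothetical, for illustration only)."""

    def __init__(self, num_blocks, block_size):
        self.block_size = block_size
        self.free_blocks = list(range(num_blocks))  # ids of unused physical blocks
        self.block_tables = {}  # seq_id -> list of physical block ids
        self.seq_lens = {}      # seq_id -> number of tokens stored

    def append_token(self, seq_id):
        """Reserve a slot for one new token; return (physical_block, offset)."""
        table = self.block_tables.setdefault(seq_id, [])
        pos = self.seq_lens.get(seq_id, 0)
        if pos % self.block_size == 0:
            # The sequence has no block yet, or its last block is full.
            if not self.free_blocks:
                raise MemoryError("no free KV blocks")
            table.append(self.free_blocks.pop())
        self.seq_lens[seq_id] = pos + 1
        return self.lookup(seq_id, pos)

    def lookup(self, seq_id, pos):
        """Translate a logical token position into (physical_block, offset)."""
        block = self.block_tables[seq_id][pos // self.block_size]
        return block, pos % self.block_size

    def free(self, seq_id):
        """Return all blocks of a finished sequence to the free pool."""
        self.free_blocks.extend(self.block_tables.pop(seq_id, []))
        self.seq_lens.pop(seq_id, None)


cache = PagedKVCache(num_blocks=4, block_size=2)
slots = [cache.append_token(seq_id=0) for _ in range(3)]
```

Because blocks are allocated on demand and returned as soon as a sequence finishes, sequences of different lengths can share one memory pool, which is what makes continuous batching practical.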

Asymmetric hardware scaling is here. Blackwell tensor cores are now so fast, exp2 and shared memory are the wall. FlashAttention-4 changes the algorithm & pipeline so that softmax & SMEM bandwidth no longer dictate speed. Attn reaches ~1600 TFLOPs, pretty much at matmul speed! joint work w/ Markus Hoehnerbach, Jay Shah (@ultraproduct), Timmy Liu, Vijay Thakkar (@__tensorcore__), Tri Dao (@tri_dao) 1/
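
To see why exp2 sits on the critical path of attention at all, here is a minimal sketch of blockwise ("online") softmax written in terms of base-2 exponentials, using the identity exp(x) = 2^(x · log2 e). It is not FlashAttention-4's algorithm or pipeline, only the textbook running-max and running-sum recurrence that FlashAttention-style kernels build on; the function name `online_softmax_exp2` and the `block_size` parameter are assumptions made for this example.

```python
import math

LOG2_E = math.log2(math.e)  # exp(x) == 2 ** (x * LOG2_E)


def online_softmax_exp2(scores, block_size=4):
    """Softmax computed block by block, with every exponential taken in base 2."""
    running_max = -math.inf  # max of the scaled scores seen so far
    running_sum = 0.0        # sum of 2 ** (scaled - running_max) seen so far
    for start in range(0, len(scores), block_size):
        block = [s * LOG2_E for s in scores[start:start + block_size]]
        new_max = max(running_max, max(block))
        # Rescale the old partial sum to the new max, then add this block.
        running_sum *= 2.0 ** (running_max - new_max)
        running_sum += sum(2.0 ** (x - new_max) for x in block)
        running_max = new_max
    return [2.0 ** (s * LOG2_E - running_max) / running_sum for s in scores]


def reference_softmax(scores):
    """Plain softmax with math.exp, used only for comparison."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


scores = [0.5, -1.25, 3.0, 2.0, 0.0, 7.5, -4.0, 1.0, 2.5]
probs = online_softmax_exp2(scores, block_size=4)
```

Every score costs one exponential, and every block adds a rescale on top, so once the matmuls get fast enough this per-element work is what remains.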