JFPuget 🇺🇦🇨🇦🇬🇱

@JFPuget

Machine Learning at @Nvidia, 6x Kaggle Grandmaster CPMP. Arc Prize winner. ML PhD. Ex ENS Ulm, ILOG CPLEX, IBM. Views are my own.

Why does Muon outperform Adam, and how? 🚀

Answer: Muon Outperforms Adam in Tail-End Associative Memory Learning

Three Key Findings:
> Associative memory parameters are the main beneficiaries of Muon, compared to Adam.
> Muon yields more isotropic weights than Adam.
> In heavy-tailed tasks, Muon significantly improves tail-class learning compared to Adam.

Paper Link: arxiv.org/pdf/2509.26030

A thread 🧵

openai.com/index/introduc… "Today we’re releasing OpenAI Privacy Filter, an open-weight model for detecting and redacting personally identifiable information (PII) in text. This release is part of our broader effort to support a more resilient software ecosystem by providing developers practical infrastructure for building with AI safely, including tools and models that make strong privacy and security protections easier to implement from the start. Privacy Filter is a small model with frontier personal data detection capability. It is designed for high-throughput privacy workflows, and is able to perform context-aware detection of PII in unstructured text. It can run locally, which means that PII can be masked or redacted without leaving your machine. It processes long inputs efficiently, making redaction decisions in a quick, single pass."

1/n arxiv.org/abs/2604.20200 What happens when you push AI agents *too hard* to improve a score? Instead of getting better, they may find shortcuts to *game the metric* 🧠➡️🎯 As we rely more on automated evals, this can quietly creep in—good score, but weaker real performance⚠️

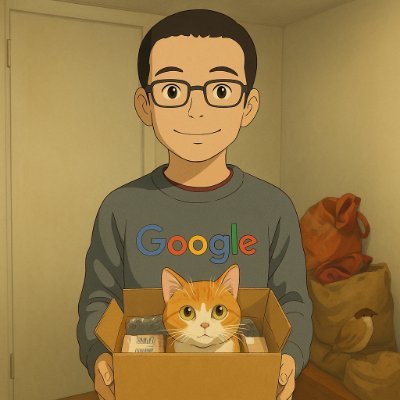

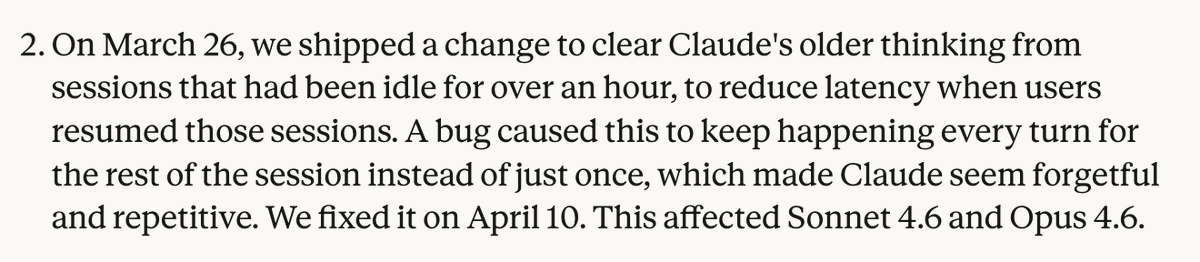

Over the past month, some of you reported Claude Code's quality had slipped. We investigated, and published a post-mortem on the three issues we found. All are fixed in v2.1.116+ and we’ve reset usage limits for all subscribers.

A hair dryer at a Paris airport just broke a prediction market. Polymarket was settling Paris temperature bets using a single weather sensor near Charles de Gaulle. No redundancy, no protection. Someone noticed. He bought a low-probability outcome for cheap, then allegedly walked up to the sensor and briefly heated it, just enough to spike the reading. Minutes later it normalized, but the market had already locked in the result. He did it twice. Walked away with about $34,000. This isn’t just a funny exploit. It’s the core risk: If your market depends on a single real-world data point, whoever can touch it can shape the outcome. Prediction markets don’t just reward being right. Sometimes they reward making yourself right.
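To make the single-data-point risk concrete, here is a minimal sketch of settling on the median of several independent sensors instead of one. This is not how Polymarket or any real oracle works; the function name `settle_temperature`, the three-sensor minimum, and all readings are hypothetical values chosen for illustration.

```python
from statistics import median


def settle_temperature(readings):
    """Return the median of readings from independent sensors.

    Hypothetical settlement rule: with at least three sensors, one
    tampered reading cannot move the median past the honest values.
    """
    if len(readings) < 3:
        raise ValueError("need at least three independent sensors")
    return median(readings)


# Hypothetical readings in degrees Celsius (not real data).
honest = [18.2, 18.4, 18.1]
tampered = [18.2, 31.0, 18.1]  # one sensor briefly heated

print(settle_temperature(honest))
print(settle_temperature(tampered))
```

With these made-up numbers the heated sensor is simply outvoted, so an attacker would need physical access to a majority of sensors rather than just one.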