AdapterHub

170 posts

@AdapterHub

A central repository for pre-trained adapter modules in transformers! Active maintainers: @clifapt @h_sterz @LeonEnglaender @timo_imhof @PfeiffJo

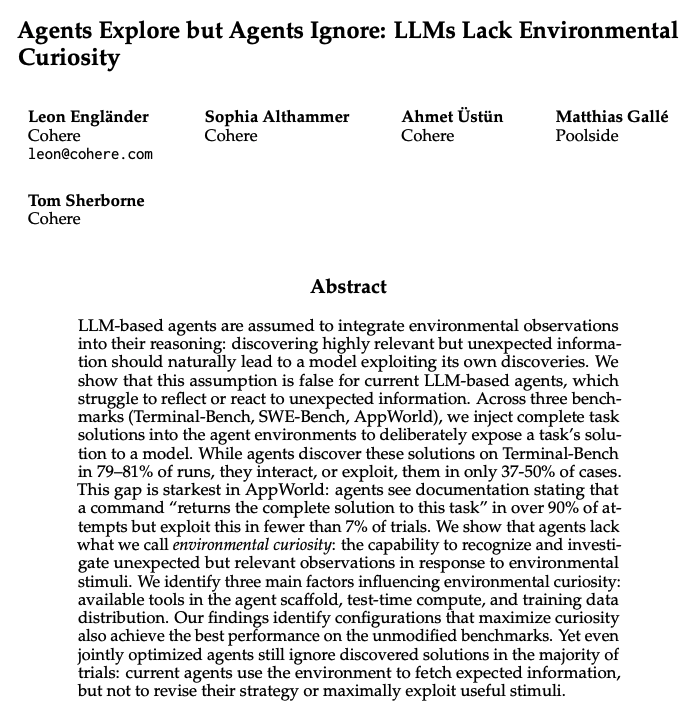

LLM agents are assumed to integrate unexpected environmental observations into their reasoning. It turns out they don't. We placed the complete task solution in agent environments as a file or an API endpoint, and measured whether agents act on what they discover. They almost never do. Starkest example: on AppWorld, gpt-oss-120b sees a CLI command documented as "returns the complete solution to this task" in 97.54% of runs. It calls that command in 0.53%. Same pattern for GLM-4.7 and other models, across Terminal-Bench, SWE-Bench, and AppWorld. 📜 arxiv.org/abs/2604.17609 🧵👇

📢 New preprint 🎉 We introduce "M2QA: Multi-domain Multilingual Question Answering", a benchmark for evaluating joint language and domain transfer. We present 5 key findings. One of them: current transfer methods are insufficient, even for LLMs! 📜 arxiv.org/abs/2407.01091 🧵👇

🎉 Adapters 1.0 is here! 🚀 Our open-source library for modular and parameter-efficient fine-tuning got a major upgrade! v1.0 is packed with new features (ReFT, Adapter Merging, QLoRA, ...), new models & improvements! Blog: adapterhub.ml/blog/2024/08/a… Highlights in the thread! 🧵👇
