Sabitlenmiş Tweet

jo.schb

29 posts

jo.schb

@jo_schb

PhD student @ CompVis Group, LMU Munich

Katılım Ocak 2021

497 Takip Edilen114 Takipçiler

jo.schb retweetledi

jo.schb retweetledi

jo.schb retweetledi

jo.schb retweetledi

I'm happy to share that I’ll be presenting two first-authored papers at #ICCV2025 🌺 in Honolulu, together with @MingGui725184! 🏝️ (Thread 🧵👇)

English

jo.schb retweetledi

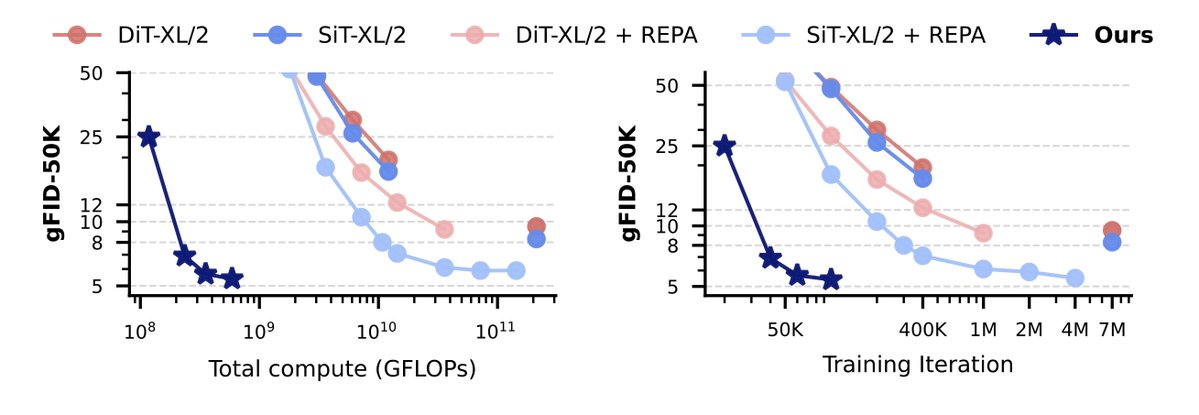

There has been quite a lot of talk recently about SSL representations in generative models. IMHO if you are training an image generative model in latent space you should aim for as much compute efficiency as possible (otherwise what's the point?). The amazing @jo_schb and @MingGui725184 + collaborators at LMU have really cracked this problem with RepTok, please check the thread! A common drawback of most works in this direction (even the most recent ones) is that they show viability for ImageNet only, which has its issues (specially if using DINOv2 features). @jo_schb and @MingGui725184 found that RepTok allows you to compress images so much that you can use a pure MLP-based architecture for the more general T2I problem setting obtaining really good results while drastically reducing training compute. I am super grateful to have had the chance to advise the team on this one!

jo.schb@jo_schb

🤔 What if you could generate an entire image using just one continuous token? 💡 It works if we leverage a self-supervised representation! Meet RepTok🦎: A generative model that encodes an image into a single continuous latent while keeping realism and semantics. 🧵👇

English

Also, check out concurrent works on how to use SSL encoders for latent space models:

• REA: arxiv.org/abs/2510.11690 [@boyangzheng_]

• AlignTok: arxiv.org/abs/2509.25162 [@bowei_chen_19]

English

This work was co-led by @MingGui725184 and me and wouldn't have been possible without the help of all the other collaborators: Timy Phan, @felix_m_krause, @jmsusskind, @itsbautistam, and Björn Ommer.

A big thank you to all of them🙏

English

jo.schb retweetledi