Giosue Migliorini retweeted

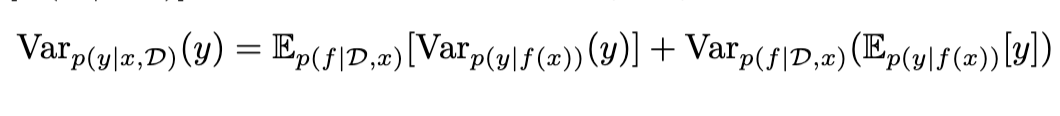

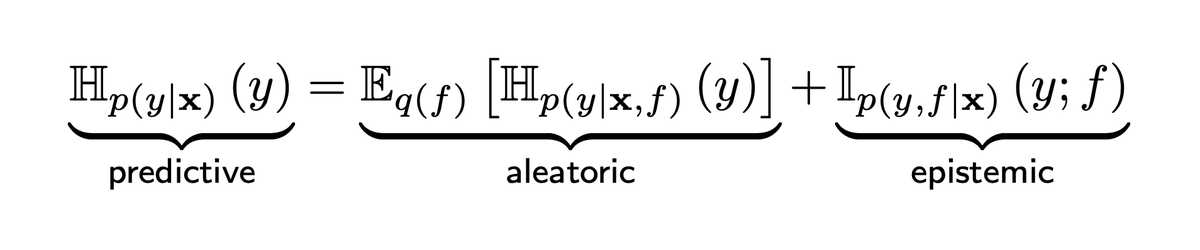

LLMs are autoregressive and slow? No! Parallel Token Prediction decodes multiple consistent tokens in one model call. PTP allows arbitrary dependencies in one call, unlike discrete diffusion. Practical: 2.4x speedup

github.com/mandt-lab/ptp

ICLR: Apr 23, morning poster P3-#608
