Jiri


👋Hi /haɪ/, we're the Tencent Hy /haɪ/ team 🐧 Today we're open-sourcing Hy3 preview (295B A21B), a leading reasoning and agent model for its size, with great cost efficiency. Give us feedback to help improve the official Hy3 release! 🤗 hf.co/tencent/Hy3-pr… 📖 hy.tencent.com/hy3-preview

Open models are improving fast. Running them efficiently in production is still hard. @nebiustf × @Eigen_AI_Labs are partnering to bring optimized frontier open models to Token Factory. DeepSeek, GPT-OSS, Kimi, Qwen, Llama, GLM and more, optimized for speed and efficiency at scale. High-performance open model inference without building the optimization stack yourself. Read more: nebius.com/blog/posts/neb…

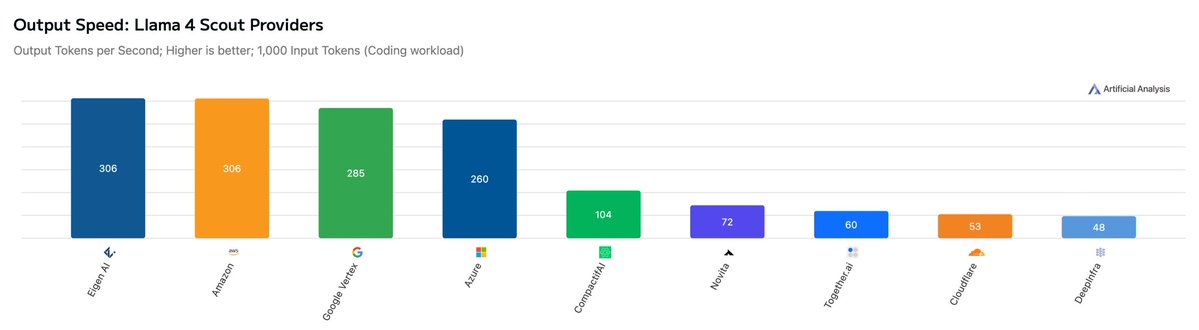

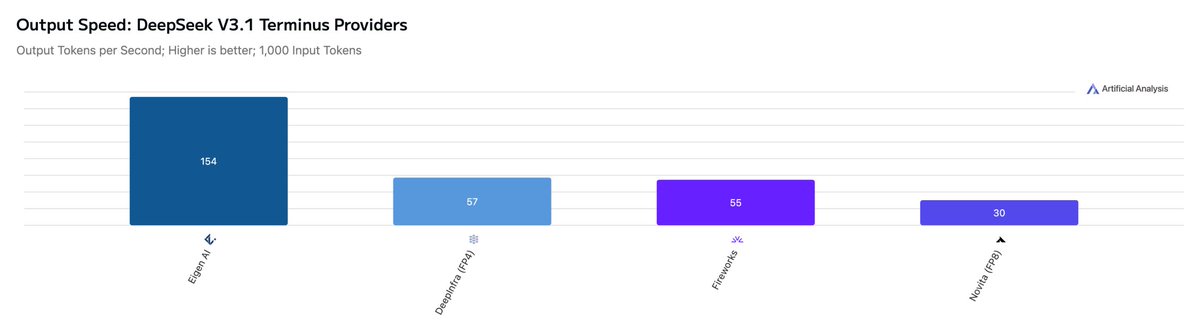

We are incredibly excited to share that Eigen AI was recognized as the #1 speed inference provider on Jensen Huang's keynote slide at NVIDIA GTC 2026. 🚀 This is a surreal and deeply meaningful moment for our team. ❤️ From day one, we set out to build world-class AI infrastructure with a focus on extreme performance, efficiency, and real-world deployment. To see Eigen AI recognized on one of the biggest stages in AI is an incredible honor, and a testament to the hard work, technical depth, and persistence of our entire team. Beyond Kimi K2.5, Eigen AI is also currently ranked #1 on 25 other models on Artificial Analysis, reflecting the breadth and consistency of our inference optimization across leading open-source models. ⚡ We are proud to be pushing the frontier of fast, scalable inference for leading open-source models, and even more excited about what comes next. 🌍 Huge thank you to everyone who has supported us on this journey. We are just getting started. Find more at eigenai.com #GTC #NVIDIA #AI #Inference #GenAI #LLM #AIInfrastructure #EigenAI #Infrastructure #keynote #GTC2026

🚀 InfiniAI Lab @ CMU is hiring Postdocs! We are looking for outstanding postdoctoral researchers in ML systems and security to join InfiniAI Lab at Carnegie Mellon University. Research directions include (but are not limited to):
🤖 AI Agents & RL
🔐 Machine Learning Security
🎥 Video Models
🏗️ AI Systems & Architecture Design
We especially encourage candidates interested in applying for the CMU–Bosch Institute (CBI) Postdoctoral Fellowship, which provides strong support for independent, high-impact research: 👉 carnegiebosch.cmu.edu/fellowships/in…
🗓️ CBI application deadline: January 30, 2026
How to apply: Please fill out the form and send us an email via 👉 infini-ai-lab.cmu.edu/vacancies

congrats to llama 3 large for winning the LLM trading contest by not participating