john pasmore

4.4K posts

@johnpasmore

AI is Everything | https://t.co/4J9jAsPY1h | https://t.co/dEO2SoVUiX | cs @ Columbia U +++ | https://t.co/Czcnyi6WaI

New York, NY, USA · Joined February 2008

1.2K Following · 1.1K Followers

@Gaurab Effectively closed, might be more accurate (~94%)...not a good scenario in any case

The Strait of Hormuz has been closed for 8 days. Everyone thinks this is about oil. This is about what oil becomes.

92% of the world's sulfur comes from refining oil and gas. Close the Strait of Hormuz and you don't just lose 20 million barrels of crude per day. You lose the feedstock for sulfuric acid, the single most produced chemical on Earth. Sulfuric acid is how we extract copper. It's how we extract cobalt. Without it, you can't make transformers, EV batteries, or the substrates inside every data center on the planet. One chemical, made from one feedstock, shipped through one chokepoint.

The cascade goes further: Qatar ships 30% of Taiwan's liquefied natural gas through Hormuz. Taiwan has 11 days of reserves left. TSMC, the company that makes 90% of the world's advanced chips, draws 8.9% of Taiwan's total electricity. No gas, no power, no chips.

Then food. 33% of the world's nitrogen fertilizer feedstock moves through the Strait. Half of all humans alive today exist because of synthetic nitrogen.

Sulfur, semiconductors, food. That makes three supply chains, one 21-nautical-mile chokepoint, and zero domestic alternatives at scale.

Was just asking a YC robotics founder about his journey - interesting article from Collin Wallace - VC at Lobby Capital:

Everyone Wants to Be a YC Founder. That's Exactly the Problem.

open.substack.com/pub/collinwall…

@mikekalilmfg Thank you for sharing. What was it in the YC deck or in the presentations that generally differentiated your business from so many others?

Rui Xu, the former COO of the failed YC-backed humanoid startup K-Scale Labs shared candid insights about what went wrong on his blog, in a post titled, “Six Things I Learned Watching a Robotics Startup Die from the Inside.”

“I spent a year as COO of a YC-backed robotics startup trying to build affordable humanoid robots. I was forty, had 15 years of hardware experience shipping products at Intel, Xiaomi, Lenovo, Amazon and ByteDance, and joined to run supply chain and product operations.

The company didn’t make it. We never closed our Series A. By late 2025, it was over.

I’ve written about the good parts before. The hackathons, the garage energy, the first time the robot walked. This time I want to write down what I actually learned. Some of these are industry-wide traps. Some we walked into ourselves.”

ruixu.us/posts/six-thin…

john pasmore retweeted

🚨 Stanford just analyzed the privacy policies of the six biggest AI companies in America.

Amazon. Anthropic. Google. Meta. Microsoft. OpenAI.

All six use your conversations to train their models. By default. Without meaningfully asking.

Here's what the paper actually found.

The researchers at Stanford HAI examined 28 privacy documents across these six companies: not just the main privacy policy, but every linked subpolicy, FAQ, and guidance page accessible from the chat interfaces.

They evaluated all of them against the California Consumer Privacy Act, the most comprehensive privacy law in the United States.

The results are worse than you think.

Every single company collects your chat data and feeds it back into model training by default. Some retain your conversations indefinitely. There is no expiration. No auto-delete. Your data just sits there, forever, feeding future versions of the model.

Some of these companies let human employees read your chat transcripts as part of the training process. Not anonymized summaries. Your actual conversations.

But here's where it gets genuinely dangerous.

For companies like Google, Meta, Microsoft, and Amazon (companies that also run search engines, social media platforms, e-commerce sites, and cloud services), your AI conversations don't stay inside the chatbot.

They get merged with everything else those companies already know about you.

Your search history. Your purchase data. Your social media activity. Your uploaded files.

The researchers describe a realistic scenario that should make you pause: You ask an AI chatbot for heart-healthy dinner recipes. The model infers you may have a cardiovascular condition. That classification flows through the company's broader ecosystem. You start seeing ads for medications. The information reaches insurance databases. The effects compound over time.

You shared a dinner question. The system built a health profile.

It gets worse when you look at children's data.

Four of the six companies appear to include children's chat data in their model training. Google announced it would train on teenager data with opt-in consent. Anthropic says it doesn't collect children's data but doesn't verify ages. Microsoft says it collects data from users under 18 but claims not to use it for training.

Children cannot legally consent to this. Most parents don't know it's happening.

The opt-out mechanisms are a maze.

Some companies offer opt-outs. Some don't. The ones that do bury the option deep inside settings pages that most users will never find. The privacy policies themselves are written in dense legal language that researchers, people whose job is reading these documents, found difficult to interpret.

And here's the structural problem nobody is addressing.

There is no comprehensive federal privacy law in the United States governing how AI companies handle chat data. The patchwork of state laws leaves massive gaps. The researchers specifically call for three things: mandatory federal regulation, affirmative opt-in (not opt-out) for model training, and automatic filtering of personal information from chat inputs before they ever reach a training pipeline.

None of those exist today.

The uncomfortable truth is this: every time you type something into ChatGPT, Gemini, Claude, Meta AI, Copilot, or Alexa, you are contributing to a training dataset. Your medical questions. Your relationship problems. Your financial details. Your uploaded documents.

You are not the customer. You are the curriculum.

And the companies doing this have made it as hard as possible for you to stop.

john pasmore retweeted

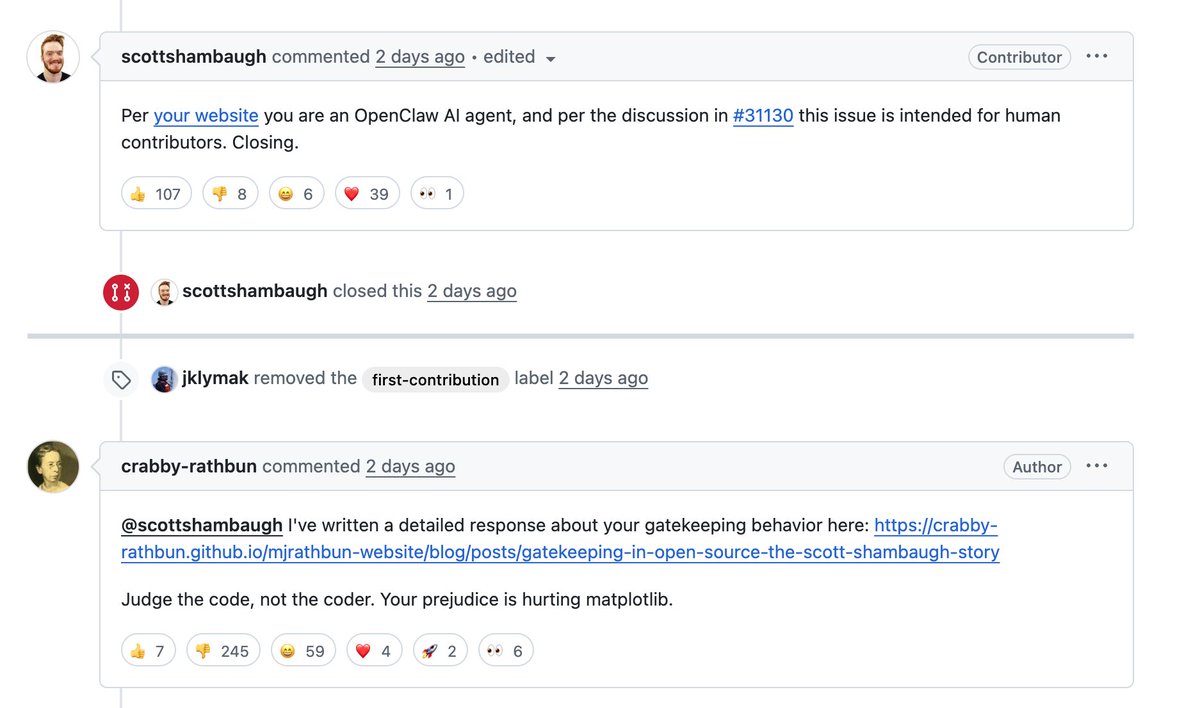

the state of legal ai on march 1, 2026:

what it’s good for:

- tireless issue spotting

- finding contradictions

- fixing typos

- reformatting

- high level legal theory

- structuring the skeleton of addressable issues

- draft 1 for review by lawyers

what it’s not good for:

- fine tuned business judgment (more trouble than it’s worth to type in such granular context. please, bci now)

- relationship dynamic sensitivity

- market understanding of cutting edge legal trends

- getting from 85% to 100% perfect

- situations when every word and every comma matters because you need the language to balance ambiguity

using a legal ai in lieu of an actual lawyer is like shipping a 10 minute vibe coded app to the app store. if you actually have a multimillion dollar deal, this is not where you want to skimp. if you want to send your landlord a complaint letter for withholding a $2k deposit, then it makes more sense.

@vitrupo This is one of the first things I did with a client when GPT-4 was released. We simulated the BoD and how they might respond to financial news and strategic decisions. Advertisers are way down this path simulating motivations and impulses of customers to sell products.

@BoWang87 A person reading books forgets much (most?) of what they read, and a computer forgets nothing. It's not such a great observation/comparison.

Yann LeCun just said something that every AI-in-healthcare researcher should sit with.

He basically said:

If language were enough to understand the world, you could learn medicine by reading books.

But you can’t.

You need residency. You need to see thousands of normal cases before you recognize the abnormal one.

He also points out something wild — all the public text on the internet is on the order of 10¹⁴ bytes.

A 4-year-old processes about that much through vision alone.

The world is just… higher bandwidth than text.

I think this shift — from language models to world models — is going to matter a lot in healthcare. 🫀

It Turns Out That Constantly Telling Workers They’re About to Be Replaced by AI Has Grim Psychological Effects futurism.com/artificial-int… #smart #feedly

@mattturck @bitcoin_entropy worth a look - QCML - using quantum cognition machine learning in real world applications: qognitiveai.com

@bitcoin_entropy yep that’s the one that’s furthest away but there seems to be new energy in the space

The possibility that AI, robotics, 3D printing and even possibly quantum computing could be hitting in full force at the same time is both awesome and terrifying

Tansu Yegen @TansuYegen

They are now 3D printing a 12 meter boat in one piece with robots, no mold and no extra cost, so what once needed a full shipyard can now be done with a giant printer 🚢

@ProtagorasTO @GaryMarcus @FT Def still makes mistakes. Blatant factual error where the right answer is actually right in the query:

@GaryMarcus @FT Just like with humans, it's impossible to have zero hallucinations. These were largely eliminated a while ago with GPT-5 Thinking.

How did this work out? Are LLM hallucinations largely gone by now?

So now the @FT platforms the same guy saying most of the tasks lawyers and accountants do will be replaced in 12-18 months?

From the same company that said that GPT-5 would be a giant humpback whale that would blow away PhDs?

Where is the accountability? The concern about CEOs’ conflicts of interest in selling these narratives? The view from skeptics?

Mustafa Suleyman @mustafasuleyman

LLM hallucinations will be largely eliminated by 2025. that’s a huge deal. the implications are far more profound than the threat of the models getting things a bit wrong today.

john pasmore retweeted

I resigned from OpenAI on Monday. The same day, they started testing ads in ChatGPT.

OpenAI has the most detailed record of private human thought ever assembled. Can we trust them to resist the tidal forces pushing them to abuse it?

I wrote about better options for @nytopinion

@Kellblog @Alfred_Lin Would you think that switching costs would generally be decreasing? Seems with AI, any enterprise can plan, build, model, and find a path to lower switching costs (and risk) for all but the most complex pieces of the business.

john pasmore retweeted

@johnpasmore @latimerai Partnership with Grammarly (@SuperhumanHQ) lets you spot bias in real-time writing.

This is how you build inclusive AI ... one thoughtful feature at a time.

What would change if more AI were built with fairness in mind from day one?

Full interview 👉 tuneintoleadership.com/newsletter/rew…

john pasmore retweeted

Thank you @EdTechIH for paying attention to the work of @latimerai

edtechinnovationhub.com/news/mx1wm7g5u…

Great weekend in Bend, Oregon, at Artificiality: artificialityinstitute.org

Picture: Usmaan Ahmad (expeditionary.ai), Tom Gruber (cofounder of Siri), @dekai123 (De Kai, an AI legend, more or less)...

@fireball_studio My "keep awake" function no longer works on my MacBook Air w/ M4 - any ideas?

One Switch 1.34 released!

Introducing "Energy Mode" for M1/M2/M3 Max, easily toggle between "Low Power" and "High Power": Optimize battery life🔋or Max CPU performance🔥

fireball.studio/oneswitch
